Published by matiasdelellis 4 months ago

Changelog

All notable changes to this project will be documented in this file.

[0.9.51] - 2024-06-18

- Fix the admin page when pdlib is disabled for any reason.

- Add a batch option for face clustering. Issue #712 (among many others)

- Show the number of processed images in the stats command.

- Add setting to enable facial recognition for all users by default.

Published by matiasdelellis 5 months ago

Changelog

[0.9.50] - 2024-05-22

- Enable Nextcloud 29 and drop 27.

- Add support for authorizing Imaginary with a key. PR #746, Issue #700. Thanks to fabalexsie.

- Add button to "Review ignored people". PR #747, Issue #735. Thanks to wronny.

- Add the sixth model to the application, also known as the DlibTaguchiHog model. =)

This model was trained from scratch by Taguchi Tokuji to slightly reduce the bias of the original model against non-Caucasian/American people, by training with a greater number of Japanese and other Asian people. It obtained a similar result in the LFW tests, slightly lower, but within acceptable margins of error.

This model should improve the behavior of the application with people with these traits.

For more information about the model, you can see the official website:

- Update translations. Many thanks to everyone!

Published by matiasdelellis 6 months ago

[0.9.40] - 2024-04-24

- Enable PHP 8.3 and NC28

- Add special modes to the background_job command that allow images to be analyzed in multiple processes to improve speed.

- Add special mode to sync-album command to generate combined albums with multiple people. PR #709

Published by matiasdelellis about 1 year ago

Codename???? 🤔

[0.9.31] - 2023-08-24

- Be sure to open the model before getting relevant info. Issue #423

[0.9.30] - 2023-08-23

- Implement the Chinese Whispers Clustering algorithm in native PHP.

- Open the model before requesting information. Issue #679

- If Imaginary is configured, check that it is accessible before using it.

- If Memories is installed, show people's photos in this app.

- Add face thumbnails when searching for persons.

- Disable auto rotate for HEIF images in Imaginary. Issue #662

- Add the option to print the progress in JSON format.

Why that meme as codename?

To the happiness of many (issues https://github.com/matiasdelellis/facerecognition/issues/690, https://github.com/matiasdelellis/facerecognition/issues/688, https://github.com/matiasdelellis/facerecognition/issues/687, https://github.com/matiasdelellis/facerecognition/issues/685, https://github.com/matiasdelellis/facerecognition/issues/649, https://github.com/matiasdelellis/facerecognition/issues/632, https://github.com/matiasdelellis/facerecognition/issues/627, https://github.com/matiasdelellis/facerecognition/issues/625, etc.), implementing the Chinese Whispers clustering algorithm in native PHP simply means that we no longer depend on the pdlib extension, though it goes without saying that its use is still highly recommended.

So, the application can be installed without pdlib or bzip2 installed. But if you want to use models 1, 2, 3, or 4 you still have to rely on these extensions.

Do you insist on not installing these extensions?

You must configure the external model and select model 5 here, and thus free yourself from these extensions. 😬

Well, you will understand that it is slower. However, I must admit that with JIT enabled it is quite acceptable, and this is the only reason why I decided to publish it.
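For anyone unfamiliar with the algorithm, here is a minimal Python sketch of Chinese Whispers clustering. It is neither the application's PHP code nor dlib's implementation. The names `build_edges` and `chinese_whispers`, the distance threshold, the iteration count, the fixed seed, and the tie-breaking rule are all assumptions made for illustration.

```python
import math
import random
from collections import defaultdict


def build_edges(descriptors, threshold):
    """Connect every pair of descriptors closer than `threshold` (assumed value)."""
    edges = []
    for i in range(len(descriptors)):
        for j in range(i + 1, len(descriptors)):
            if math.dist(descriptors[i], descriptors[j]) < threshold:
                edges.append((i, j, 1.0))
    return edges


def chinese_whispers(edges, num_nodes, iterations=20, seed=0):
    """Return labels[i], the cluster id of node i, for an undirected weighted graph."""
    rng = random.Random(seed)  # fixed seed only to make the sketch reproducible
    neighbors = defaultdict(list)
    for a, b, weight in edges:
        neighbors[a].append((b, weight))
        neighbors[b].append((a, weight))

    labels = list(range(num_nodes))  # every node starts in its own cluster
    order = list(range(num_nodes))
    for _ in range(iterations):
        rng.shuffle(order)  # visit nodes in random order on each pass
        changed = False
        for node in order:
            if not neighbors[node]:
                continue  # isolated nodes keep their own label
            # Sum the edge weights contributed by each neighbouring label.
            scores = defaultdict(float)
            for other, weight in neighbors[node]:
                scores[labels[other]] += weight
            # Adopt the strongest label; ties go to the smallest label id.
            best = max(sorted(scores), key=lambda label: scores[label])
            if best != labels[node]:
                labels[node] = best
                changed = True
        if not changed:
            break  # no label changed during a full pass
    return labels


# Two well separated groups of toy 2-D "descriptors".
points = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
labels = chinese_whispers(build_edges(points, threshold=0.5), len(points))
print(labels)
```

Real face descriptors have many more dimensions, but the idea is the same: faces that are close enough get an edge, and labels spread along the edges until they stop changing.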

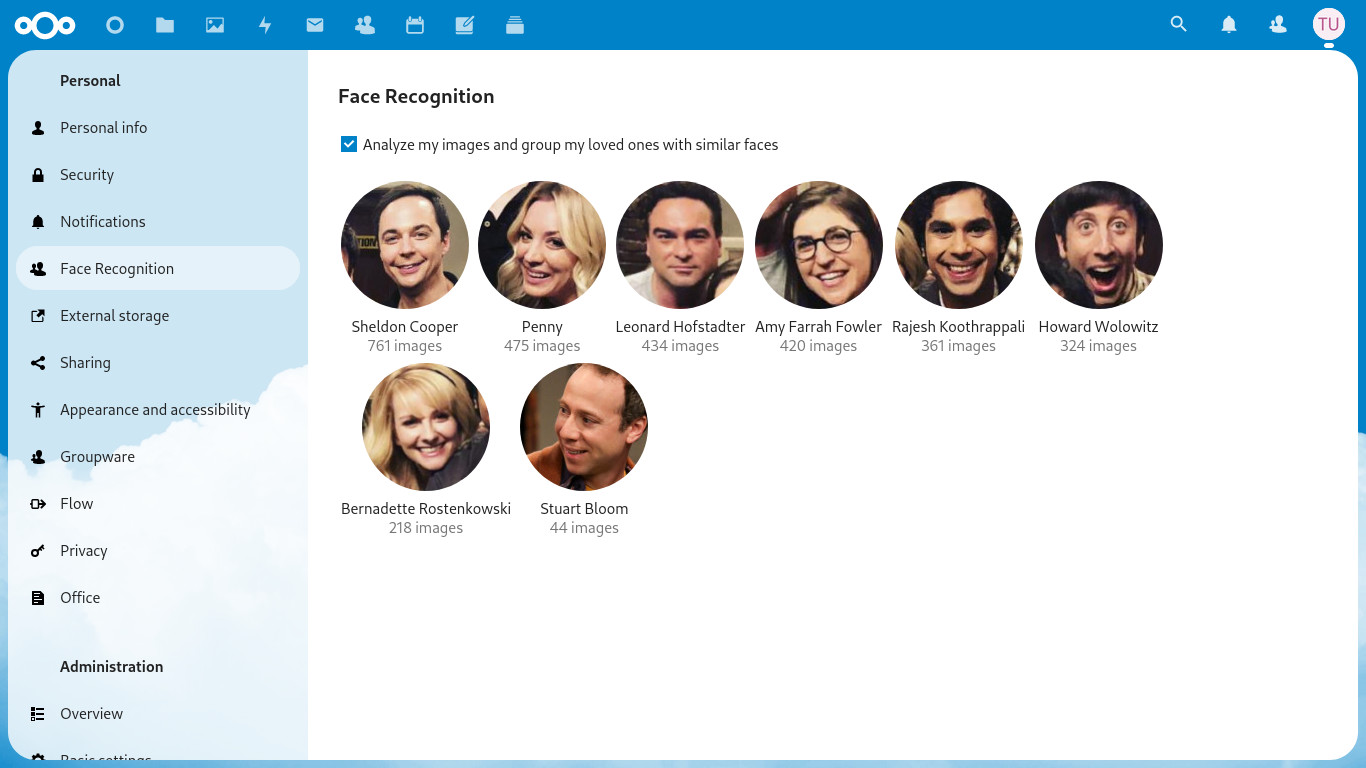

Some statistics

I just added 2162 Big Bang Theory photos to my test server, resulting in 6059 faces, and clustered them with both implementations.

Dlib (reference):

- User time (seconds): 10.53

- Maximum resident set size (kbytes): 245412

PHP:

- User time (seconds): 45.45

- Maximum resident set size (kbytes): 266060

- Time relative to Dlib: 45.45 / 10.53 = 4.316

- Memory relative to Dlib: 266060 / 245412 = 1.084

PHP + JIT:

- User time (seconds): 16.20

- Maximum resident set size (kbytes): 283760

- Time relative to Dlib: 16.20 / 10.53 = 1.538

- Memory relative to Dlib: 283760 / 245412 = 1.156

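So, as you can see, the PHP implementation takes about 4.3 times as long as dlib (that is, it is about 3.3 times slower), but if you enable JIT it takes only about 54 percent longer. I guess that's OK, and the memory didn't increase much (about 8 percent without JIT and 16 percent with it). 😄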
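If you want to check the arithmetic, this small Python sketch recomputes the ratios from the measurements above. The dictionary layout and the function name `relative_to_reference` are just illustrative choices; only the numbers come from the test run.

```python
# Measurements copied from the statistics above:
# (user time in seconds, maximum resident set size in kbytes).
runs = {
    "dlib": (10.53, 245412),
    "php": (45.45, 266060),
    "php+jit": (16.20, 283760),
}


def relative_to_reference(runs, reference="dlib"):
    """Return {name: (time ratio, memory ratio)} relative to the reference run."""
    ref_time, ref_mem = runs[reference]
    return {
        name: (time / ref_time, mem / ref_mem)
        for name, (time, mem) in runs.items()
    }


ratios = relative_to_reference(runs)
for name, (time_ratio, mem_ratio) in ratios.items():
    print(f"{name}: {time_ratio:.2f}x time, {mem_ratio:.2f}x memory")
# dlib: 1.00x time, 1.00x memory
# php: 4.32x time, 1.08x memory
# php+jit: 1.54x time, 1.16x memory
```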

Note:

Once again I insist on recommending the use of local models (with dlib), and I invite those who want to use it to give a little love to the external model. 😬

Published by matiasdelellis over 1 year ago

Changelog

[0.9.20] - 2023-06-14

- Add support for (now old) Nextcloud 26.

- Add support for NC27 for early testing.

- Clean up some code and split large classes to improve maintainability.

- Don't catch Imaginary exceptions. Issue #658

- Update French translation thanks to Jérémie Tarot.

Note:

This is a version made with a bit of shame. I'm short on time, and I was hoping to do a little more before releasing it, but Nextcloud published a new version before I had enabled the previous one, and that forced this release. 🤦🏻♂️ 😞

It's actually well tested in NC26, but I'd like to improve some things soon. That is not the case for NC27, so I hope to hear your reports. 🙈

Published by matiasdelellis over 1 year ago

[0.9.12] - 2023-03-25

- Add support for using Imaginary to create the temporary files. This adds support for HEIC, TIFF, and many more image formats. Issue #494, #215 and #348, among many other reports.

- Memory optimization in the face clustering task. Part of issue #339. In my tests, it reduces memory use by between 33% and 39%, and as an additional improvement, there was also a reduction in time of around 19%. There are still several improvements to be made, but it is a good step.

- Modernize the construction of the JavaScript code. Issue #613

- Fix unhandled exception and albums not being created. Issue #634

Note:

About Imaginary: if it is installed correctly, it works automatically. However, you still have to select the types of files you want to read, so you must add this configuration to config/config.php:

    + 'enabledFaceRecognitionMimetype' => array(
    +     0 => 'image/jpeg',
    +     1 => 'image/png',
    +     2 => 'image/heic',
    +     3 => 'image/tiff',
    +     4 => 'image/webp',
    + ),

Finally, this release adds the --crawl-missing option to face:background_job, which forces a search for files so that the newly allowed types get analyzed. 😄

Published by matiasdelellis almost 2 years ago

Changelog

[0.9.11] - 2022-12-28

- Fix migrations on PostgreSQL. Issue #619 and #615

- Fix OCS Api (API V1). Thanks to nkming2

[0.9.10] - 2022-12-12

- Just bump version, to remove beta label and allow installing in NC25

-

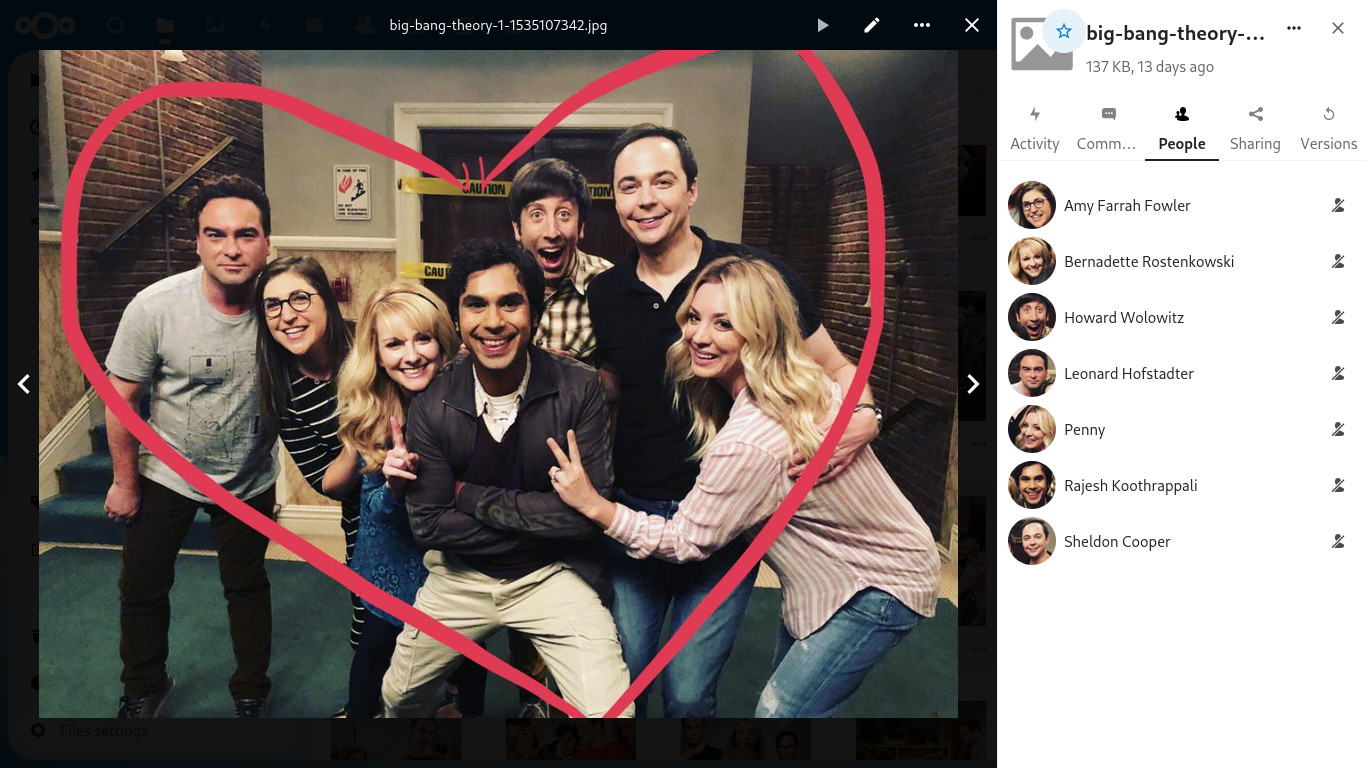

Gratitude: @pulsejet, very kindly accept the integration of this application into your super cool photo gallery called Memories.

If you didn't know about this project, I invite you to give it a try, that will pleasantly surprise you. 🎉

Thanks again. 😃 - New Russian translation thanks to Regardo.

Of course a photo is better than a thousand words.

Published by matiasdelellis almost 2 years ago

[0.9.10-beta.2] - 2022-11-17

Added

- Add an API version 2 that should, in theory, be enough for any client.

- Note that it can still change slightly until we release the stable version.

Fixed

- Only fix tests.

Changed

- Use x, y, width, height to save face detections in the database.
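As a rough illustration of what an x, y, width, height representation looks like for a client, here is a small Python sketch. It assumes, purely for the example, that a rectangle might otherwise be given as left, top, right, bottom corners. The `FaceBox` class and both helper functions are hypothetical and are not part of the application or its API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FaceBox:
    """Hypothetical face rectangle: top-left corner plus size, in pixels."""
    x: int
    y: int
    width: int
    height: int


def from_corners(left, top, right, bottom):
    """Build a FaceBox from corner coordinates (an assumed alternative format)."""
    return FaceBox(x=left, y=top, width=right - left, height=bottom - top)


def to_corners(box):
    """Convert a FaceBox back to (left, top, right, bottom)."""
    return (box.x, box.y, box.x + box.width, box.y + box.height)


box = from_corners(10, 20, 110, 220)
print(box)              # FaceBox(x=10, y=20, width=100, height=200)
print(to_corners(box))  # (10, 20, 110, 220)
```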

Published by matiasdelellis about 2 years ago

[0.9.10-beta.1] - 2022-09-27

Added

- Support Nextcloud 25

- A little love to the whole application to improve styles and texts.

- Show the image viewer when clicking an image in our "gallery". If you press Control+Click, it opens the file as before.

- Don't allow running two face: commands simultaneously, to prevent errors.

- Some optimizations on several queries of main view.

- Add a new command face:sync-albums to create photo albums of people.

Fixed

- Rephrase the "I'm not sure" button to better indicate what it does. Issue #544

Changed

- Change "Persons" to "People", since "persons" is a very formal word used by lawyers.

- Edit people's names in the same side tab instead of using dialogs. This is really forced by changes to the viewer, which retains focus and prevents typing anywhere else. It also has a regression: autocomplete is missing. 😞

- Typo fix. Just use plurals on stats table.

- Show the faces of the latest photos, and sort photos by upload order.

Translations

- Update German translation thanks to lollos78.

- Update Italian (Italy) translation thanks to lollos78.

- Update of many other translations. Thank you so much everyone.

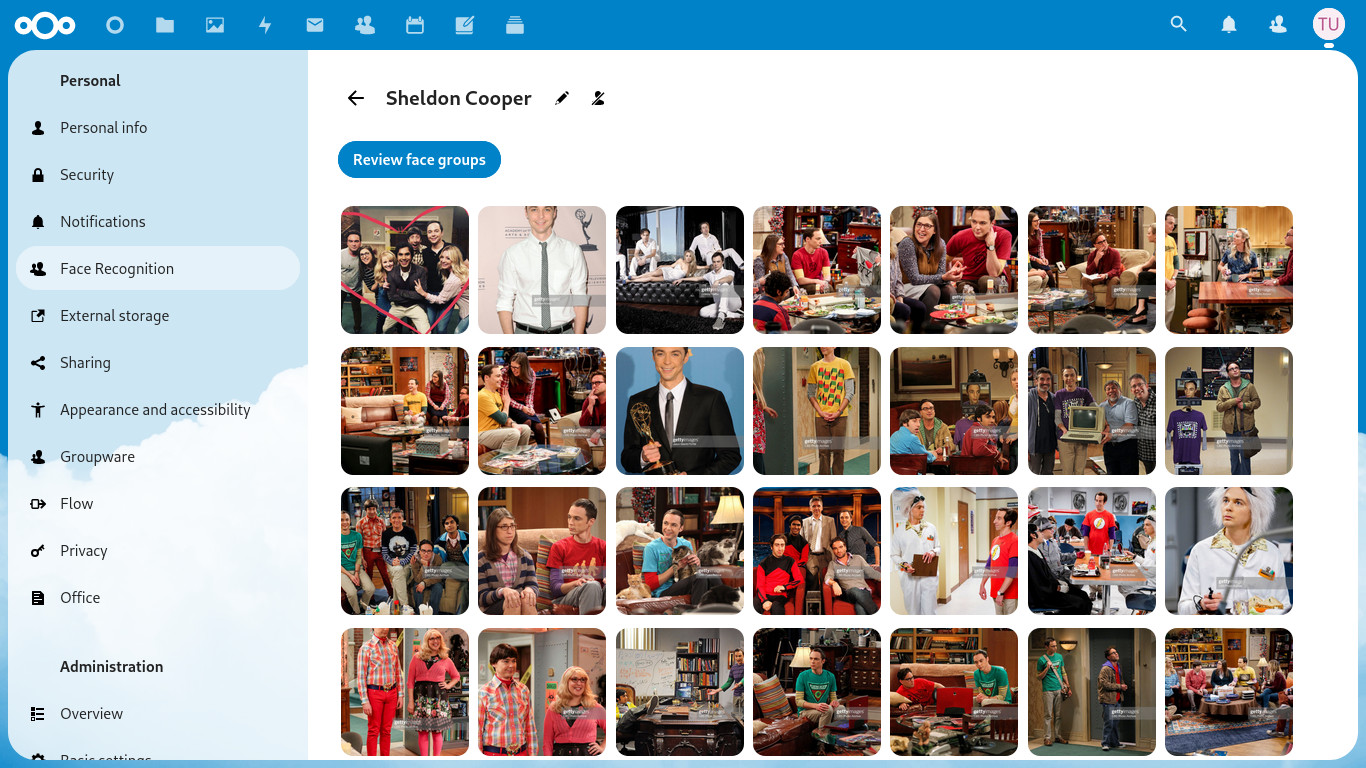

Some new screenshots...

Published by matiasdelellis over 2 years ago

Just a fix to allow updating to NC24... 🙈

Published by matiasdelellis almost 3 years ago

NOTE:

Absolutely all users must configure the new setting for the maximum memory for image processing

occ face:setup --memory 2GB

Changelog

[0.9.1] - 2021-12-15

Fixed

- Fix Ignore persons feature. Issue #542

Translations

- Update Czech translation thanks to Pavel Borecki.

- Update Italian translation thanks to axl84.

[0.9.0] - 2021-12-13

Added

- Add an extra step to setup. You must indicate exactly how much memory you want to assign for image processing. See the occ face:setup --memory documentation in the readme.

- Add the option to effectively ignore persons when assigning names. See issue #486 and #504.

- It also allows you to hide persons that you have already named. Issue #405

- Implement the option "This is not such a person". Issue #506 and part of #158

- Enable NC23

Translations

- Update some translations. Thank you so much everyone.

Screenshots

Ignore irrelevant persons:

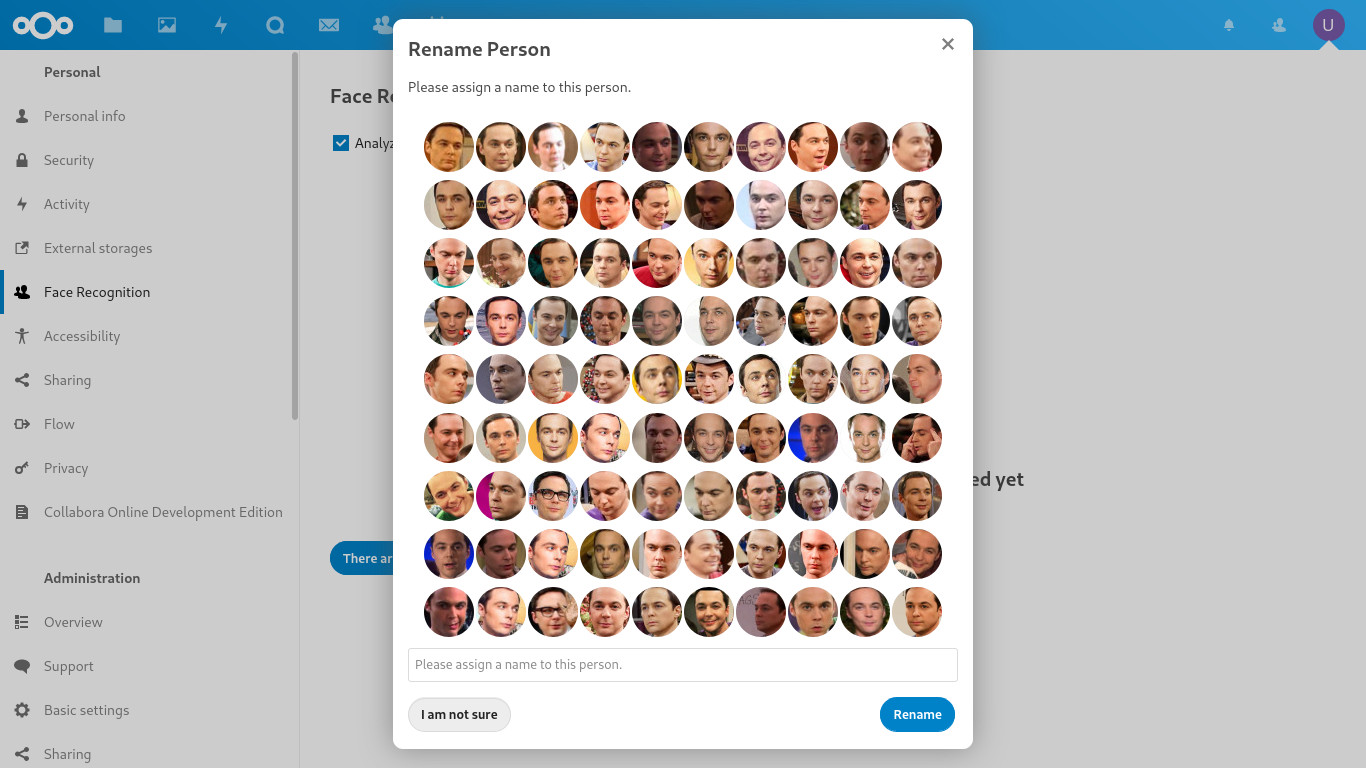

Clustering algorithm correction

D'OH! This is not Sheldon Cooper.

It's Rajesh! 😅

Detail of the wrong cluster before.

Detail of the cluster after the fix.

Published by matiasdelellis almost 3 years ago

Note:

- Big thanks especially to @guystreeter for the research on php8 (See issue #456) and other improvements.

- Also many thanks to @nkming2 for the implementation of the public API, and for the first Nextcloud gallery client that includes support for this application. See https://github.com/nkming2/nc-photos

[0.8.5] - 2021-11-20

Added

- Initial support for php 8. See issue #456

- Add link to show photos of person on sidebar.

- Add static analysis, phpunit and lintian test using github workflow.

- Add a real OCS public API to get all persons. See PR #512.

Fixed

- Fix the sidebar view when the user has disabled it.

- Set the Image Area slider to the maximum allowed by the model. See issue #527

- Don't try to force the setCreationTime argument to be DateTime. See PR #526

- Migrate hooks to OCP event listeners. See PR #511

Translations

- New Czech translation thanks to Pavel Borecki, and update others. Thank you so much everyone.

Published by matiasdelellis over 3 years ago

Changelog

[0.8.3] - 2021-07-08

Added

- Initial support for NC22.

- Update translations.

Published by matiasdelellis over 3 years ago

[0.8.2] - 2021-05-17

Added

- Add links in thumbnails of rename persons dialogs. Issue #396

- Initial autocomplete feature for names. Issue #306

Fixed

- Respect .noimage file, since it is also used in Photos. Issue #446

- Fix deleting files, which broke due to a change in the ORM with NC21. Issue #471

- Some fixes on make clean.

Translations

- New Italian translation thanks to axl84, and update others. Thank you so much everyone.

Published by matiasdelellis over 3 years ago

Face Recognition v0.8.1

Note that this version, with few features, is made partially from contributions by our users. So, again, thank you very much for your contributions!!! 😄 😃 🥰

Changelog

[0.8.1] - 2021-03-18

Fixed

- Register the Hooks within the Bootstrap mechanism, removing many undesirable logs. Similar to https://github.com/nextcloud/server/issues/22590

Translations

- New Korean (Korea) translation thanks to HyeongJong Choi

- Update many other translations from Transifex. This time I cannot single out your changes to thank you properly, but thank you very much to all the translators.

[0.8.0] - 2021-03-17

Added

- Increase the supported version only to NC21. Thanks to @szaimen. See issue #429

- Add support for unified search, being able to search the photos of your loved ones from anywhere in Nextcloud. Thanks to @dassio. See PR #344

- Add defer-clustering option. It changes the order of execution of the process, deferring the face clustering to the end of the analysis to get persons in a single execution of the command. Thanks to @cliffalbert. See issue #371

Published by matiasdelellis almost 4 years ago

Codename: He's alive

You could interpret this as me saying that this version is a monster (Frankenstein? 🤔), but it is certainly meant as a celebration. A lot of previous development made it possible to build an external model this easily. 😄 🎉

Then we can discuss privacy, since this model could theoretically be run on Amazon or Google, losing some of the magic of the application (doing absolutely everything within our personal/secure/private Nextcloud instance). Maybe it is a monster in that sense, but throughout the development of the application I met a lot of people who keep their main storage outside their Nextcloud instance, already losing part of that grace. On the other hand, there are also other Nextcloud applications that work in a similar way, running external services to relieve the main server (e.g. LibreOffice Online, Talk High Performance Backend, etc.). And finally, there are many users running Nextcloud on small computers like the Raspberry Pi. So why prohibit them from using this application? Now they can run the process on their personal laptop/computer quickly and safely. 😁

On the other hand, this external model allows us to "open the game". (I'm not sure if it's a universally understood phrase.. 🤔). That is, I decided to trust the dlib models. I think they work very well, but they could obviously improve. Are there people who would like to use TensorFlow, Darknet, OpenCV, etc.? OK. Now they can implement their own model to improve quality, speed, etc. I would love to see your results. 😉

So, the benefits far outweigh any concerns, but be responsible. 😉

Changelog

[0.7.2] - 2020-12-10

Added

- Add an external model that allows running the photo analysis outside of your Nextcloud instance, freeing server resources. This is generic and just defines a reference API, and we share a reference example equivalent to model 1. See issue #210, #238, and PR #389. See: https://github.com/matiasdelellis/facerecognition-external-model

- Allow setting a custom model path, useful for configurations like Object Storage as Primary Storage. This is thanks to David Kang. See #381 and #390.

- Add memory info and pdlib version to admin page. PR #385

Translations

- Some messages improved thanks to Robin @Derkades. They were not translated yet and will probably change again. Please be patient.

Screenshots

(Screenshots: Person View, Photos of person, Person Integration, Assign Name.)