mage-ai

🧙 Build, run, and manage data pipelines for integrating and transforming data.

APACHE-2.0 License

Bot releases are hidden (Show)

Published by thomaschung408 over 1 year ago

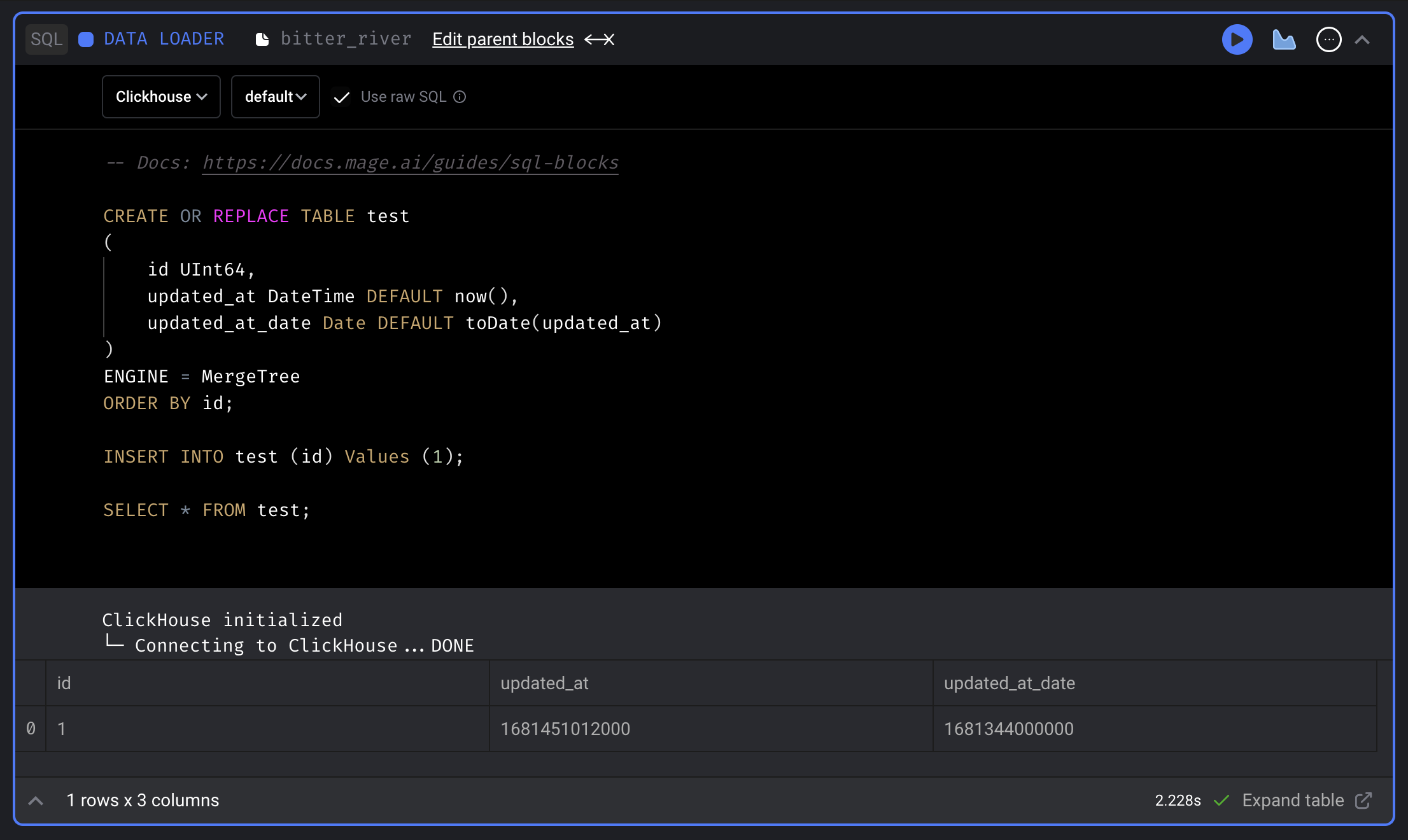

ClickHouse SQL block

Support using SQL block to fetch data from, transform data in and export data to ClickHouse.

Doc: https://docs.mage.ai/integrations/databases/ClickHouse

Trino SQL block

Support using SQL block to fetch data from, transform data in and export data to Trino.

Doc: https://docs.mage.ai/development/blocks/sql/trino

Sentry integration

Enable Sentry integration to track and monitor exceptions in Sentry dashboard.

Doc: https://docs.mage.ai/production/observability/sentry

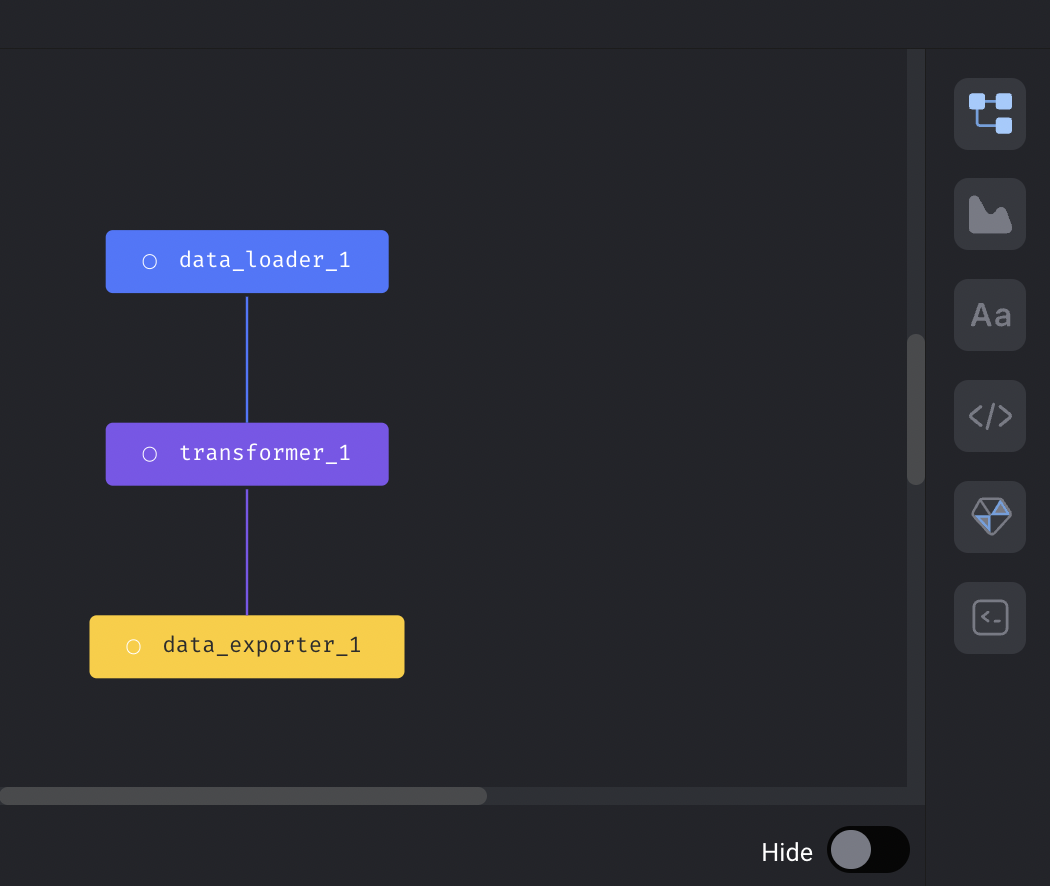

Drag and drop to re-order blocks in pipeline

Mage now supports dragging and dropping blocks to re-order blocks in pipelines.

Streaming pipeline

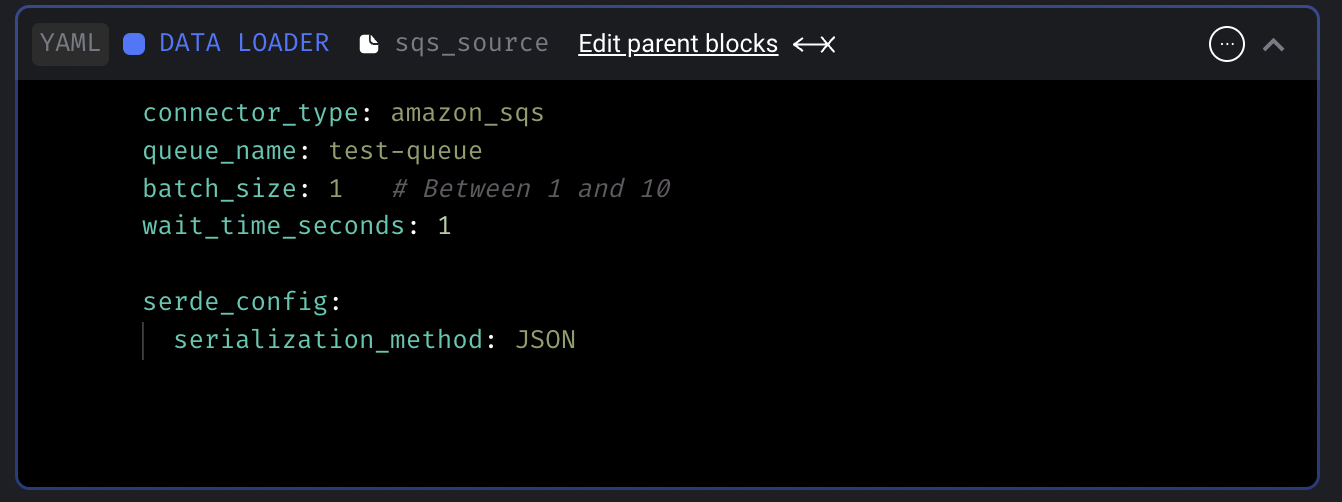

Add AWS SQS streaming source

Support consuming messages from SQS queues in streaming pipelines.

Doc: https://docs.mage.ai/guides/streaming/sources/amazon-sqs

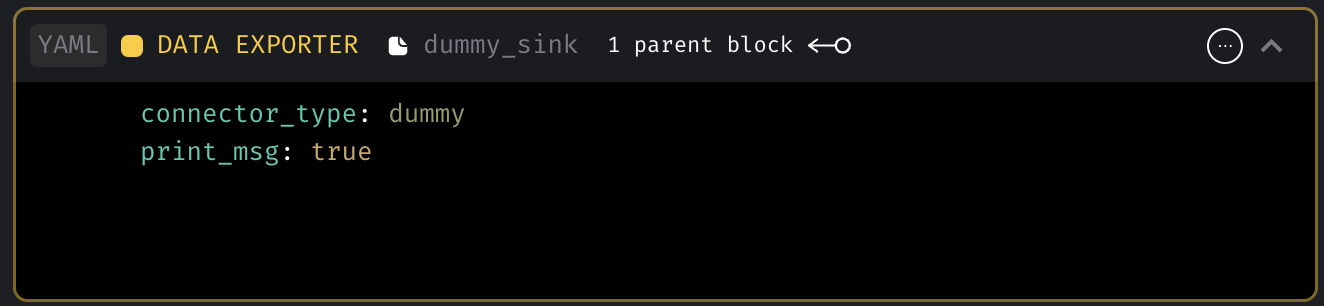

Add dummy streaming sink

Dummy sink will print the message optionally and discard the message. This dummy sink will be useful when users want to trigger other pipelines or 3rd party services using the ingested data in transformer.

Doc: https://docs.mage.ai/guides/streaming/destinations/dummy

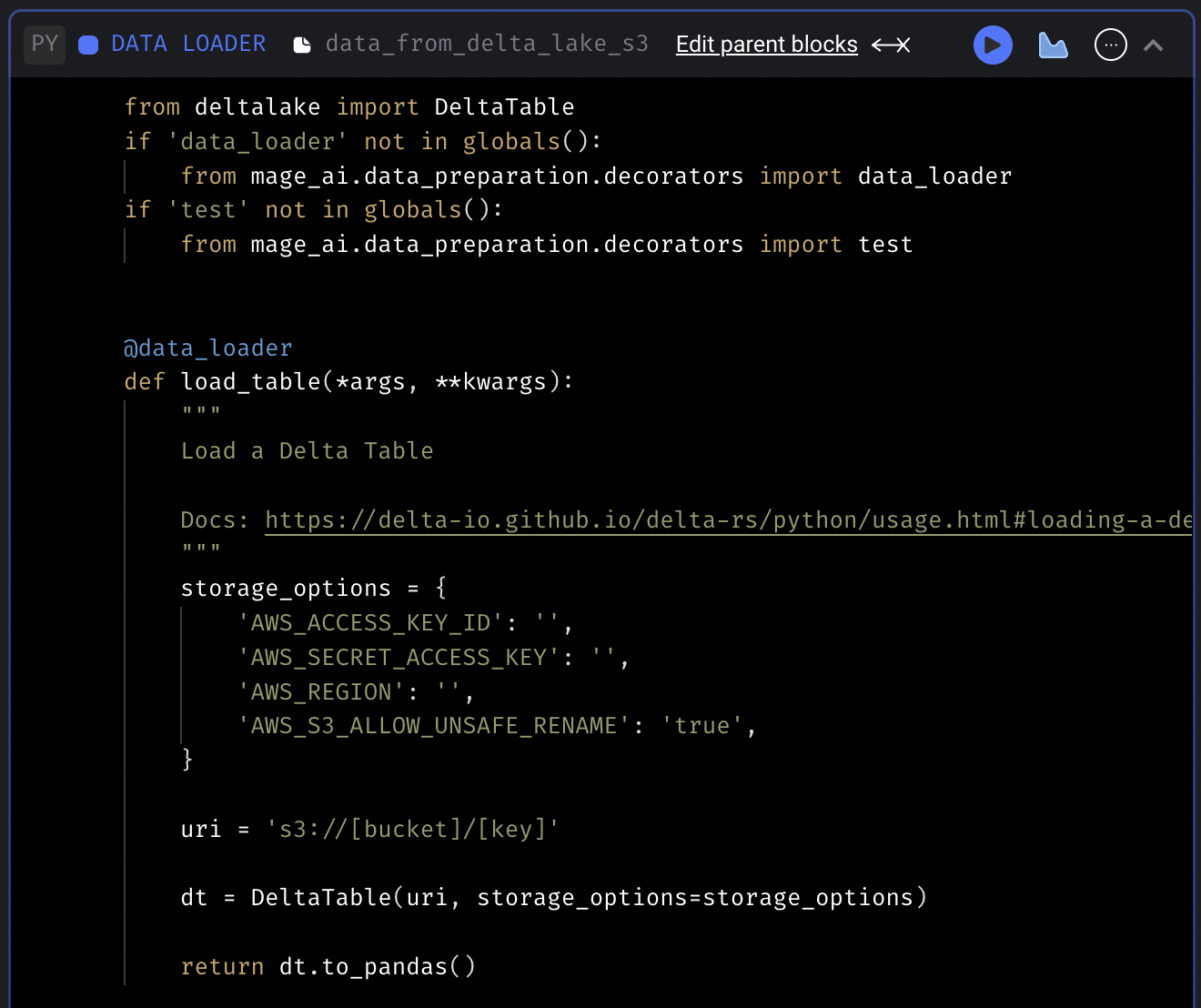

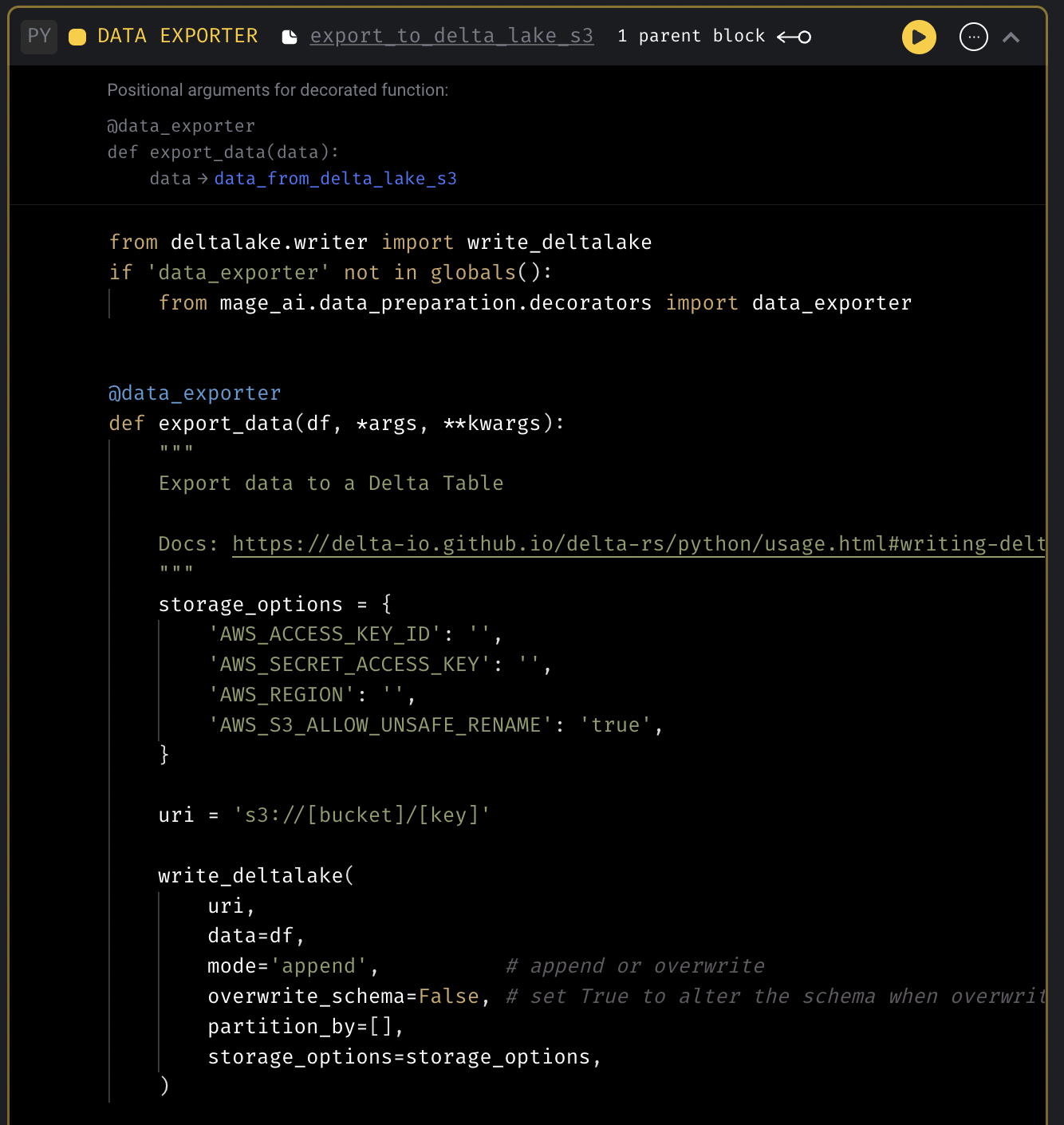

Delta Lake code templates

Add code templates to fetch data from and export data to Delta Lake.

Delta Lake data loader template

Delta Lake data exporter template

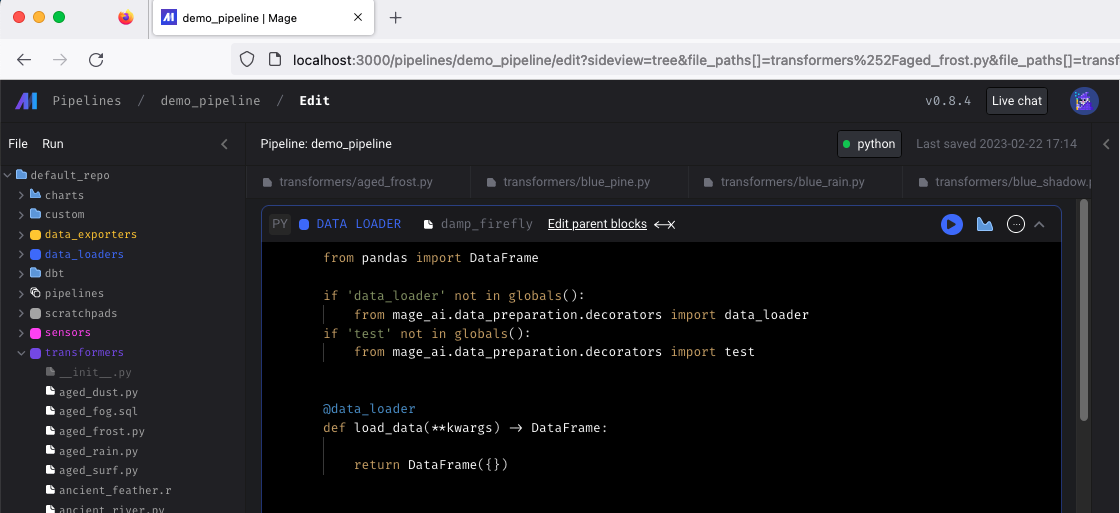

Unit tests for Mage pipelines

Support writing unit tests for Mage pipelines that run in the CI/CD pipeline using mock data.

Doc: https://docs.mage.ai/development/testing/unit-tests

Data integration pipeline

- Chargebee source: Fix load sample data issue

- Redshift destination: Handle unique constraints in destination tables.

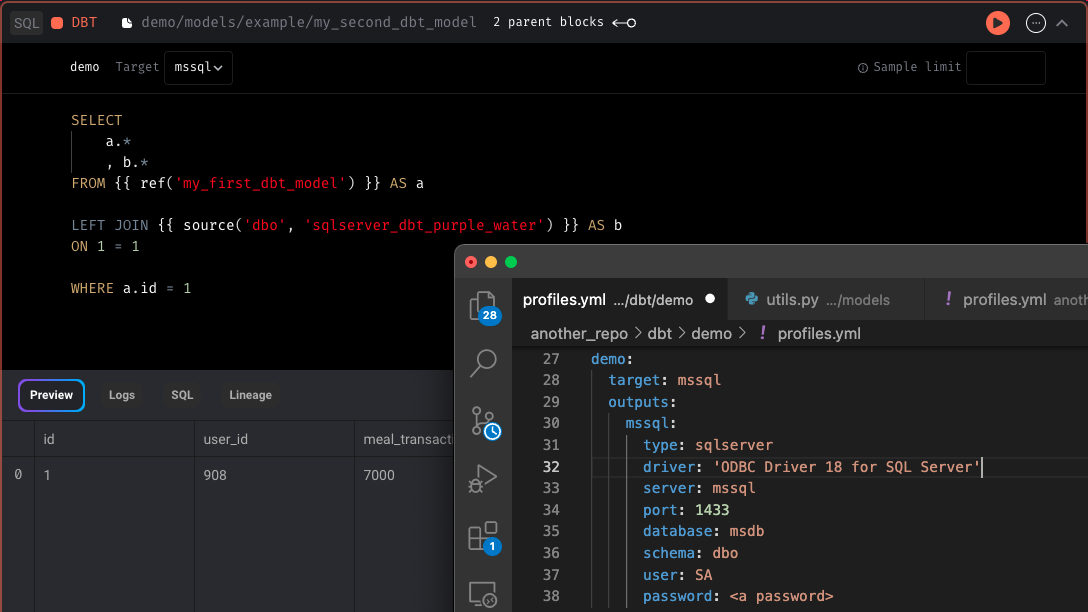

DBT improvements

- If there are two DBT model files in the same directory with the same name but one has an extra

.sqlextension, the wrong file may get deleted if you try to delete the file with the double.sqlextension. - Support using Python block to transform data between DBT model blocks

- Support

+schemain DBT profile

Other bug fixes & polish

- SQL block

- Automatically limit SQL block data fetching while using the notebook but also provide manually override to adjust the limit while using the notebook. Remove these limits when running pipeline end-to-end outside the notebook.

- Only export upstream blocks if current block using raw SQL and its using the variable

- Update SQL block to use

io_config.yamldatabase and schema by default

- Fix timezone in pipeline run execution date.

- Show backfill preview dates in UTC time

- Raise exception when loading empty pipeline config.

- Fix dynamic block creation when reduced block has another dynamic block as downstream block

- Write Spark DataFrame in parquet format instead of csv format

- Disable user authentication when REQUIRE_USER_AUTHENTICATION=0

- Fix loggings for Callback blocks

- Git

- Import git only when the

Gitfeature is used. - Update git actions error message

- Import git only when the

- Notebook

- Fix Notebook page freezing issue

- Make Notebook right vertical navigation sticky

- More documentations

- Add architecture overview diagram and doc: https://docs.mage.ai/production/deploying-to-cloud/architecture

- Add doc for setting up event trigger lambda function: https://docs.mage.ai/guides/triggers/events/aws#set-up-lambda-function

View full Changelog

Published by thomaschung408 over 1 year ago

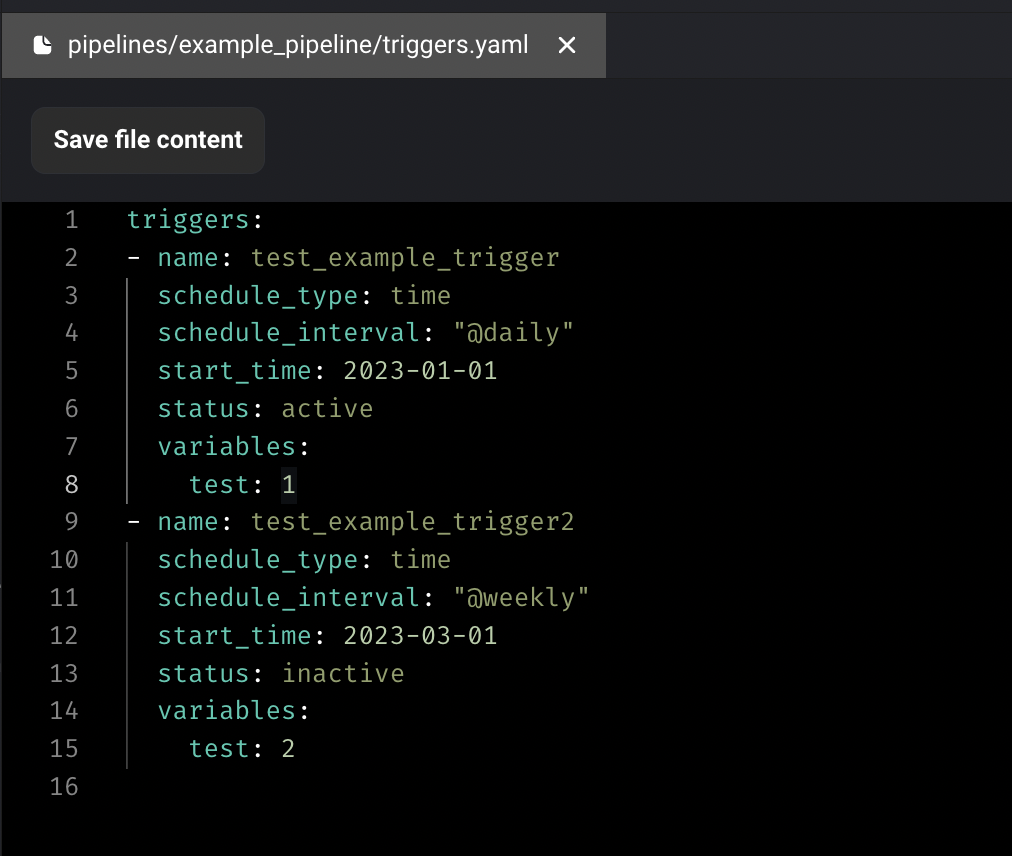

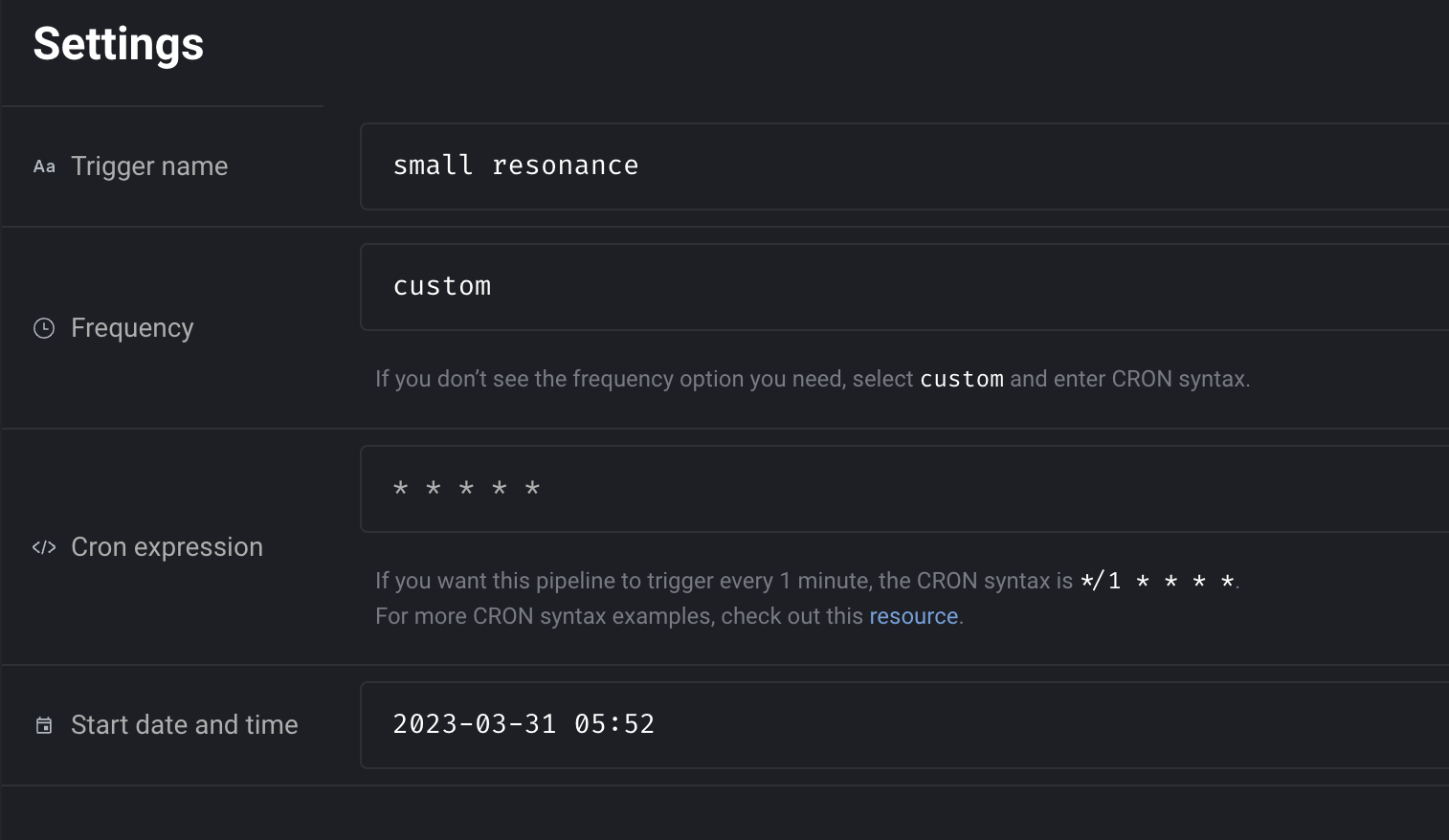

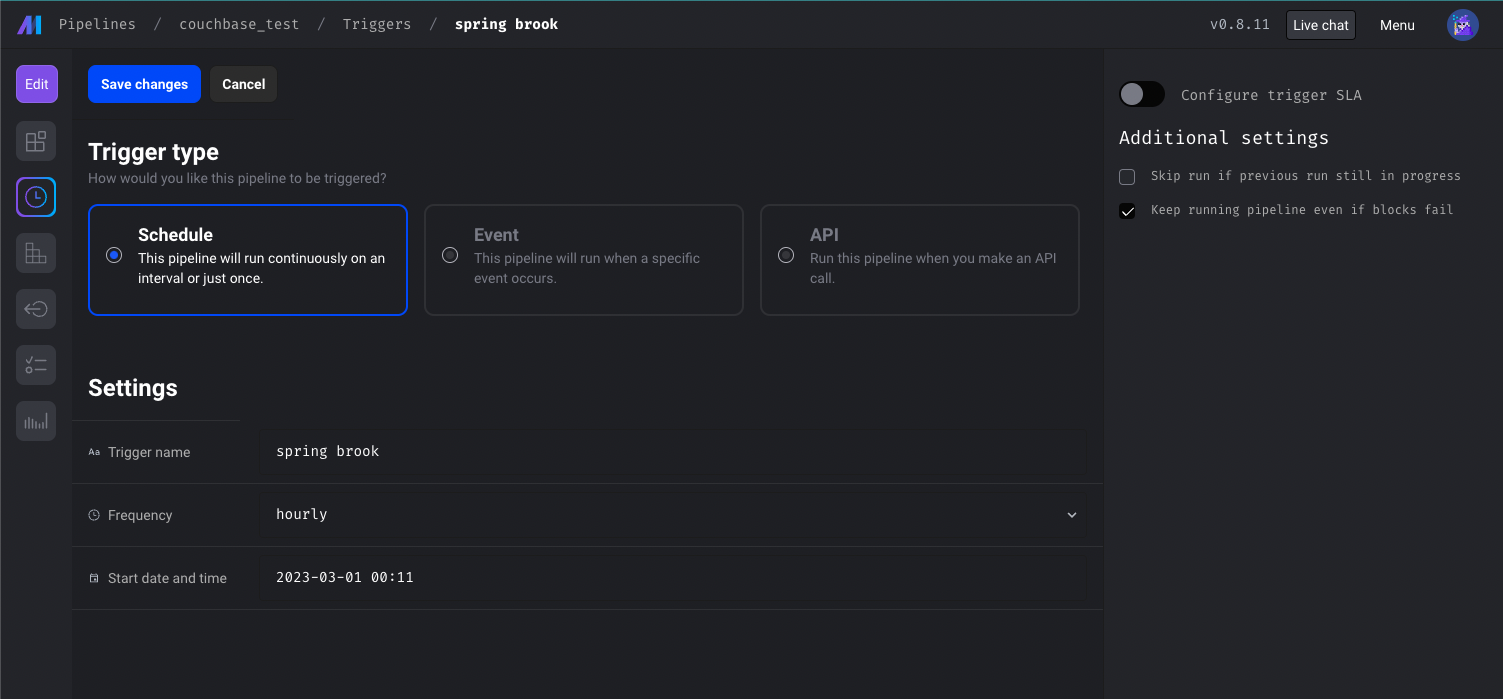

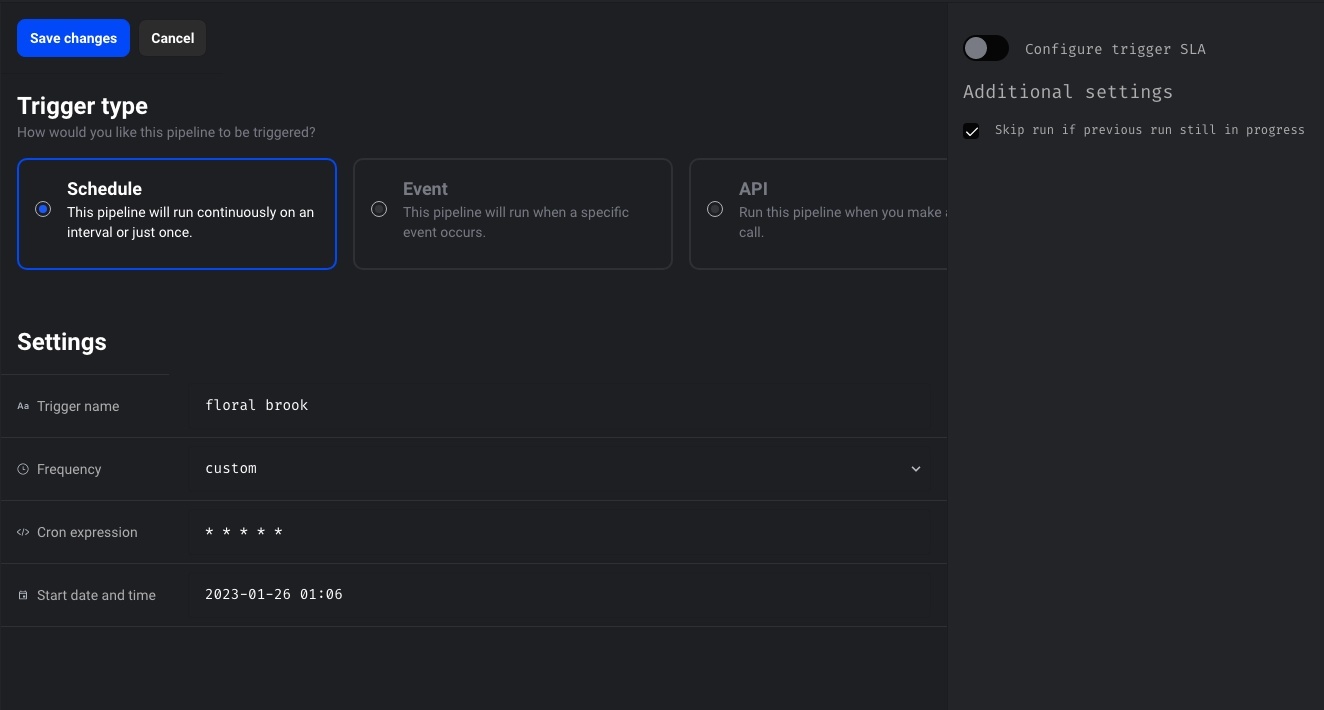

Configure trigger in code

In addition to configuring triggers in UI, Mage also supports configuring triggers in code now. Create a triggers.yaml file under your pipeline folder and enter the triggers config. The triggers will automatically be synced to DB and trigger UI.

Doc: https://docs.mage.ai/guides/triggers/configure-triggers-in-code

Centralize server logger and add verbosity control

Shout out to Dhia Eddine Gharsallaoui for his contribution of centralizing the server loggings and adding verbosity control. User can control the verbosity level of the server logging by setting the SERVER_VERBOSITY environment variables. For example, you can set SERVER_VERBOSITY environment variable to ERROR to only print out errors.

Doc: https://docs.mage.ai/production/observability/logging#server-logging

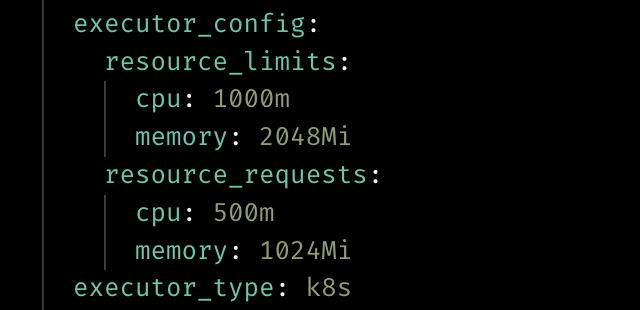

Customize resource for Kubernetes executor

User can customize the resource when using the Kubernetes executor now by adding the executor_config to the block config in pipeline’s metadata.yaml.

Doc: https://docs.mage.ai/production/configuring-production-settings/compute-resource#kubernetes-executor

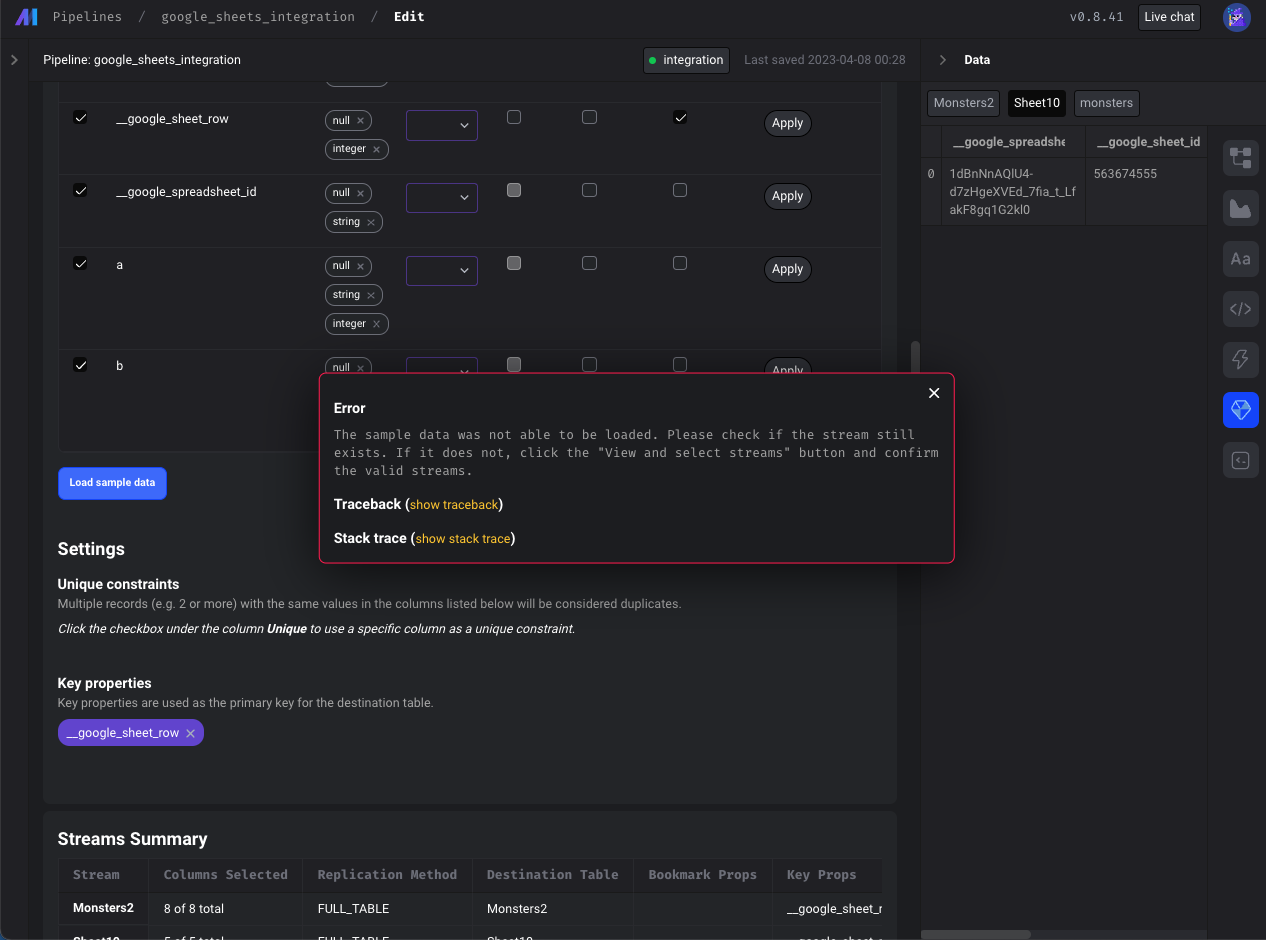

Data integration pipelines

- Google sheets source: Fix loading sample data from Google Sheets

- Postgres source: Allow customizing the publication name for logical replication

- Google search console source: Support email field in google_search_console config

- BigQuery destination: Limit the number of subqueries in BigQuery query

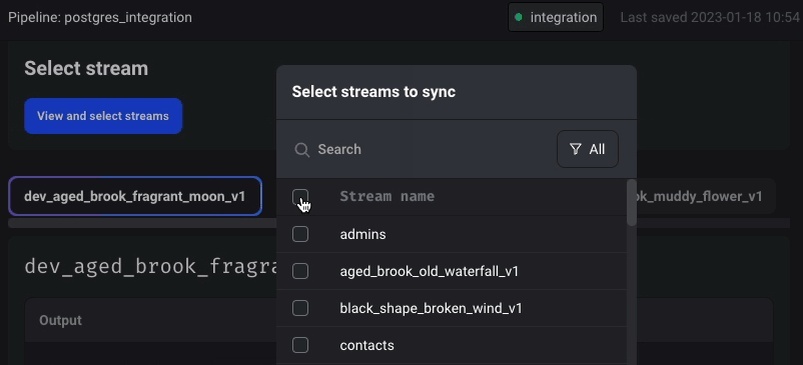

- Show more descriptive error (instead of

{}) when a stream that was previously selected may have been deleted or renamed. If a previously selected stream was deleted or renamed, it will still appear in theSelectStreamsmodal but will automatically be deselected and indicate that the stream is no longer available in red font. User needs to click "Confirm" to remove the deleted stream from the schema.

Terminal improvements

- Use named terminals instead of creating a unique terminal every time Mage connects to the terminal websocket.

- Update terminal for windows. Use

cmdshell command for windows instead of bash. Allow users to overwrite the shell command with theSHELL_COMMANDenvironment variable. - Support copy and pasting multiple commands in terminal at once.

- When changing the path in the terminal, don’t permanently change the path globally for all other processes.

- Show correct logs in terminal when installing requirements.txt.

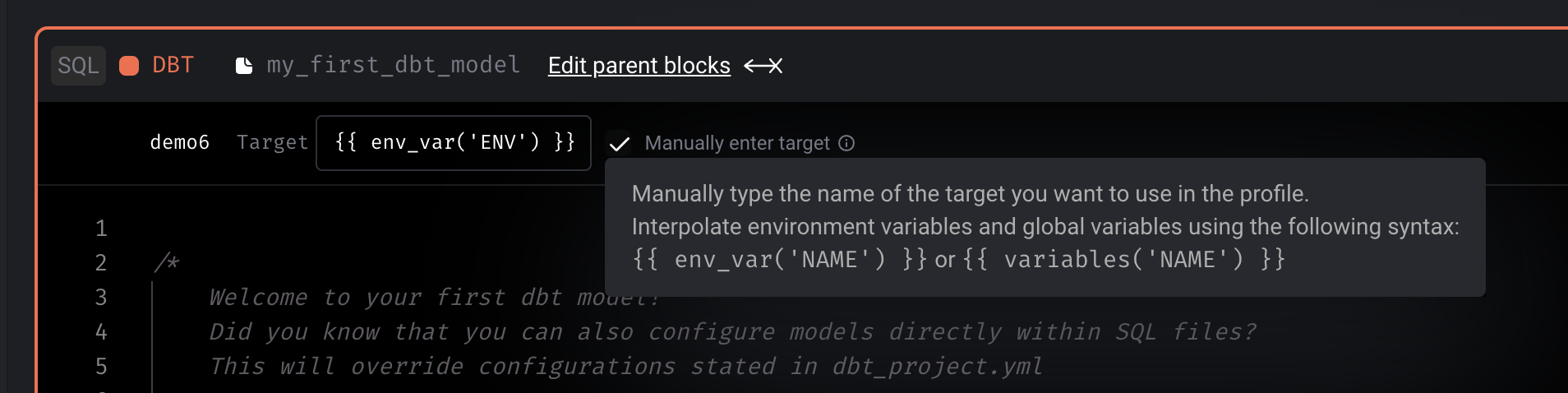

DBT improvements

- Interpolate environment variables and secrets in DBT profile

Git improvements

- Update git to support multiple users

Postgres exporter improvements

- Support reordering columns when exporting a dataframe to Postgres

- Support specifying unique constraints when exporting the dataframe

with Postgres.with_config(ConfigFileLoader(config_path, config_profile)) as loader:

loader.export(

df,

schema_name,

table_name,

index=False,

if_exists='append',

allow_reserved_words=True,

unique_conflict_method='UPDATE',

unique_constraints=['col'],

)

Other bug fixes & polish

- Fix chart loading errors.

- Allow pipeline runs to be canceled from UI.

- Fix raw SQL block trying to export upstream python block.

- Don’t require metadata for dynamic blocks.

- When editing a file in the file editor, disable keyboard shortcuts for notebook pipeline blocks.

- Increase autosave interval from 5 to 10 seconds.

- Improve vertical navigation fixed scrolling.

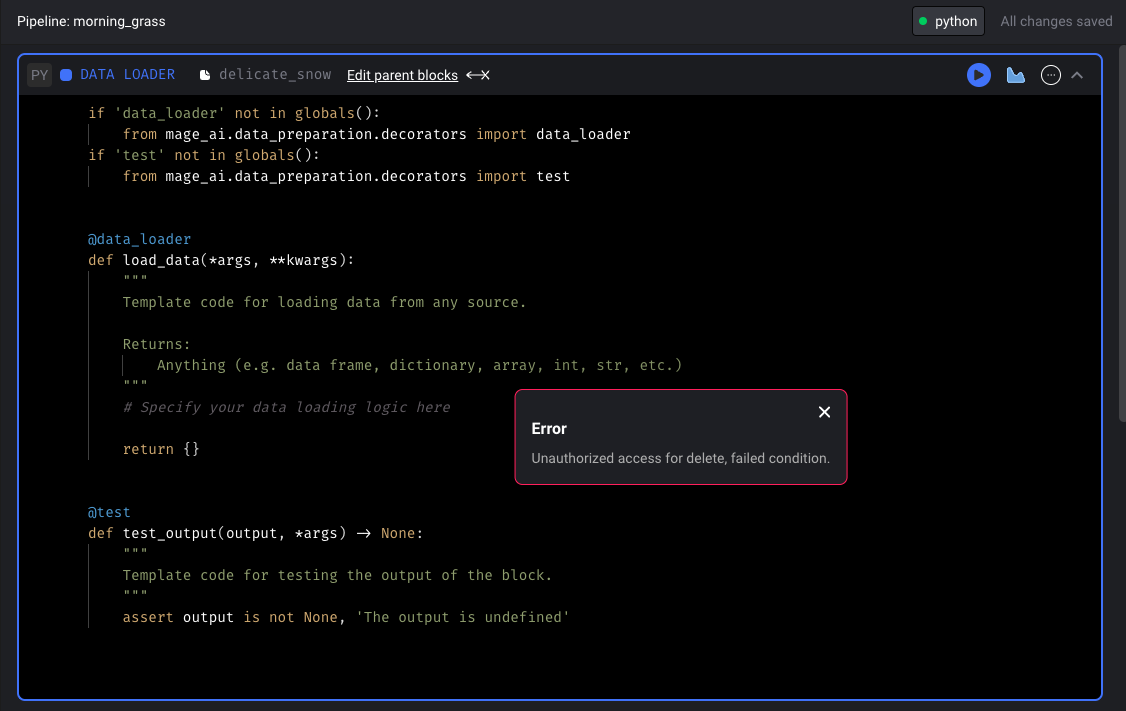

- Allow users to force delete block files. When attempting to delete a block file with downstream dependencies, users can now override the safeguards in place and choose to delete the block regardless.

View full Changelog

Published by thomaschung408 over 1 year ago

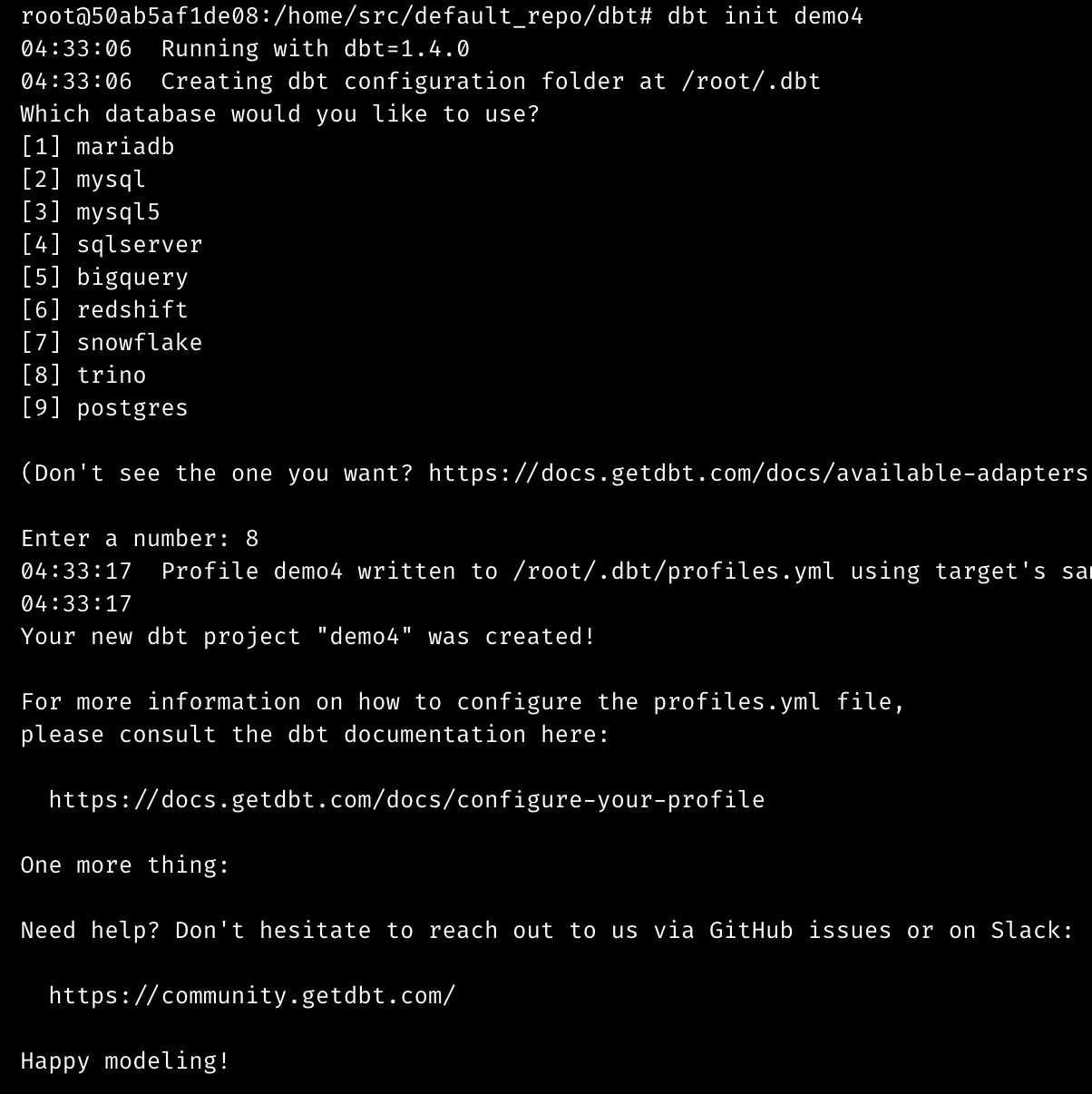

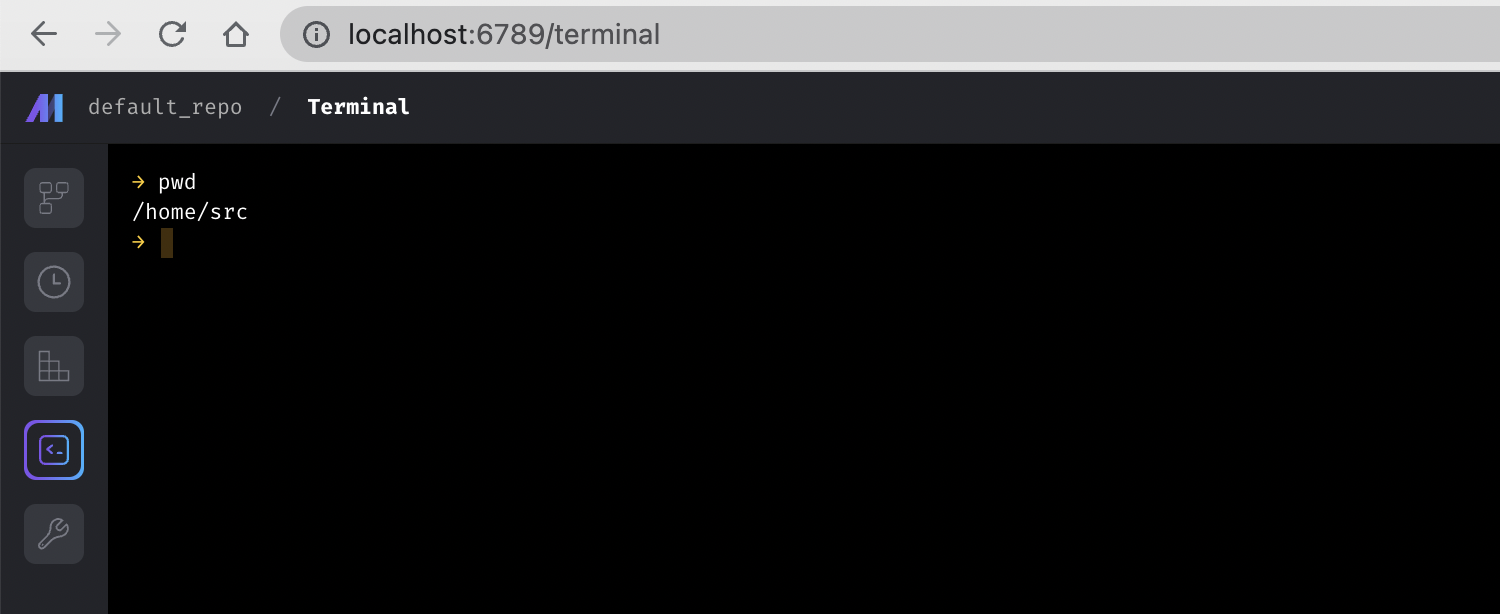

Interactive terminal

The terminal experience is improved in this release, which adds new interactive features and boosts performance. Now, you can use the following interactive commands and more:

git add -pdbt init demogreat_expectations init

Data integration pipeline

New source: Google Ads

Shout out to Luis Salomão for adding the Google Ads source.

New source: Snowflake

New destination: Amazon S3

Bug Fixes

- In the MySQL source, map the Decimal type to Number.

- In the MySQL destination, use

DOUBLE PRECISIONinstead ofDECIMALas the column type for float/double numbers.

Streaming pipeline

New sink: Amazon S3

Doc: https://docs.mage.ai/guides/streaming/destinations/amazon-s3

Other improvements

-

Enable the logging of custom exceptions in the transformer of a streaming pipeline. Here is an example code snippet:

@transformer def transform(messages: List[Dict], *args, **kwargs): try: raise Exception('test') except Exception as err: kwargs['logger'].error('Test exception', error=err) return messages -

Support cancelling running streaming pipeline (when pipeline is executed in PipelineEditor) after page is refreshed.

Alerting option for Google Chat

Shout out to Tim Ebben for adding the option to send alerts to Google Chat in the same way as Teams/Slack using a webhook.

Example config in project’s metadata.yaml

notification_config:

alert_on:

- trigger_failure

- trigger_passed_sla

slack_config:

webhook_url: ...

How to create webhook url: https://developers.google.com/chat/how-tos/webhooks#create_a_webhook

Other bug fixes & polish

-

Prevent a user from editing a pipeline if it’s stale. A pipeline can go stale if there are multiple tabs open trying to edit the same pipeline or multiple people editing the pipeline at different times.

-

Fix bug: Code block scrolls out of view when focusing on the code block editor area and collapsing/expanding blocks within the code editor.

-

Fix bug: Sync UI is not updating the "rows processed" value.

-

Fix the path issue of running dynamic blocks on a Windows server.

-

Fix index out of range error in data integration transformer when filtering data in the transformer.

-

Fix issues of loading sample data in Google Sheets.

-

Fix chart blocks loading data.

-

Fix Git integration bugs:

- The confirm modal after clicking “synchronize data” was sometimes not actually running the sync, so removed that.

- Fix various git related user permission issues.

- Create local repo git path if it doesn’t exists already.

-

Add preventive measures for saving a pipeline:

- If the content that is about to be saved to a YAML file is invalid YAML, raise an exception.

- If the block UUIDs from the current pipeline and the content that is about to be saved differs, raise an exception.

-

DBT block

- Support DBT staging. When a DBT model runs and if it’s configured to use a schema with a suffix, Mage will now take that into account when fetching a sample of the model at the end of the block run.

- Fix

Circular reference detectedissue with DBT variables. - Manually input DBT block profile to allow variable interpolation.

- Show DBT logs when running compile and preview.

-

SQL block

- Don’t limit raw SQL query; allow all rows to be retrieved.

- Support SQL blocks passing data to downstream SQL blocks with different data providers.

- Raise an exception if a raw SQL block is trying to interpolate an upstream raw SQL block from a different data provider.

- Fix date serialization from 1 block to another.

-

Add helper for using CRON syntax in trigger setting.

- Document internal API endpoints for development and contributions: https://docs.mage.ai/contributing/backend/api/overview

View full Changelog

Published by thomaschung408 over 1 year ago

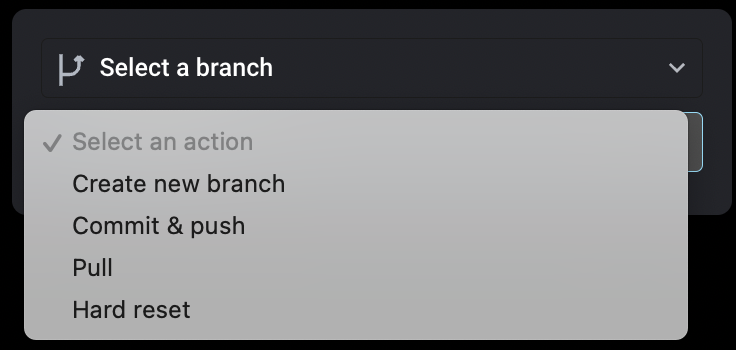

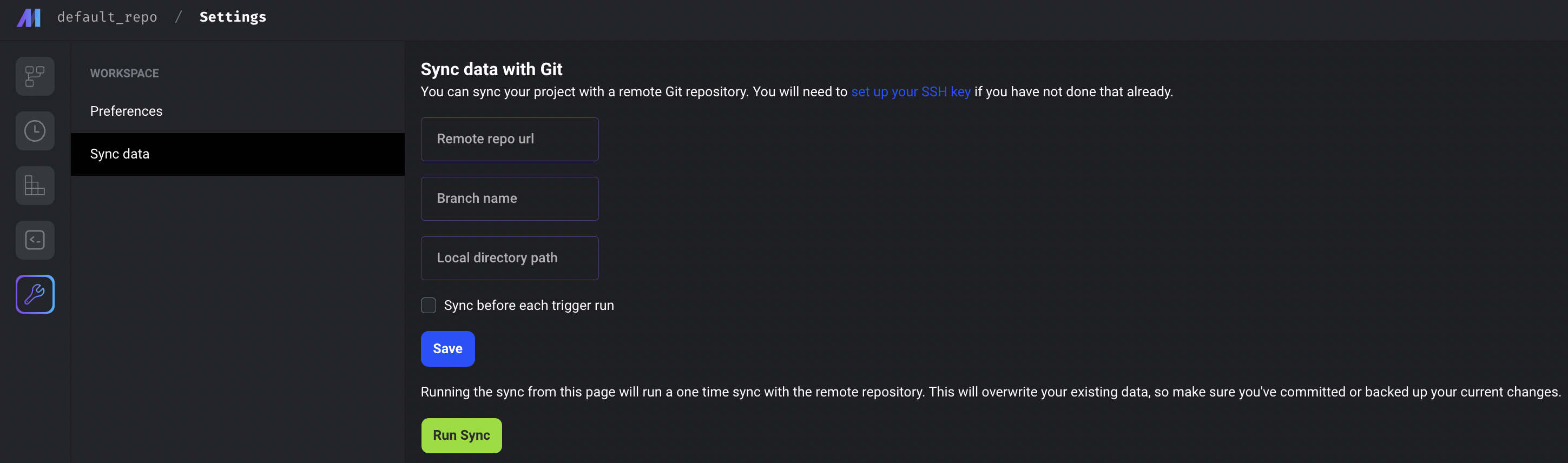

Commit, push and pull code changes between Mage and Github/Gitlab repository

Mage supports Github/Gitlab integration via UI now. You can perform the following actions with the UI.

- Create new branch

- Commit & push

- Pull

- Hard reset

Doc on setting up integration: https://docs.mage.ai/production/data-sync/git

Deploy Mage using AWS CodeCommit and AWS CodeDeploy

Add terraform templates for deploying Mage to ECS from a CodeCommit repo with AWS CodePipeline. It will create 2 separate CodePipelines, one for building a docker image to ECR from a CodeCommit repository, and another one for reading from ECR and deploying to ECS.

Docs on using the terraform templates: https://docs.mage.ai/production/deploying-to-cloud/aws/code-pipeline

Use ECS task roles for AWS authentication

When you run Mage on AWS, instead of using hardcoded API keys, you can also use ECS task role to authenticate with AWS services.

Opening http://localhost:6789/ automatically

Shout out to Bruno Gonzalez for his contribution of supporting automatically opening Mage in a browser tab when using mage start command in your laptop.

Github issue: https://github.com/mage-ai/mage-ai/issues/2233

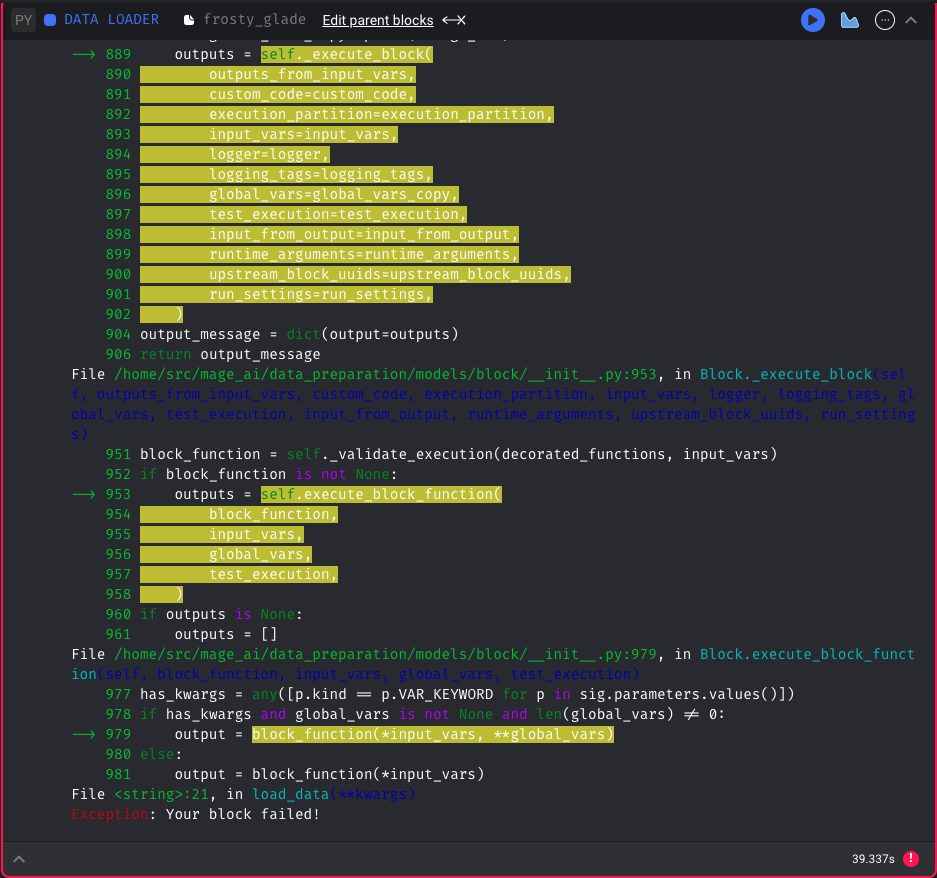

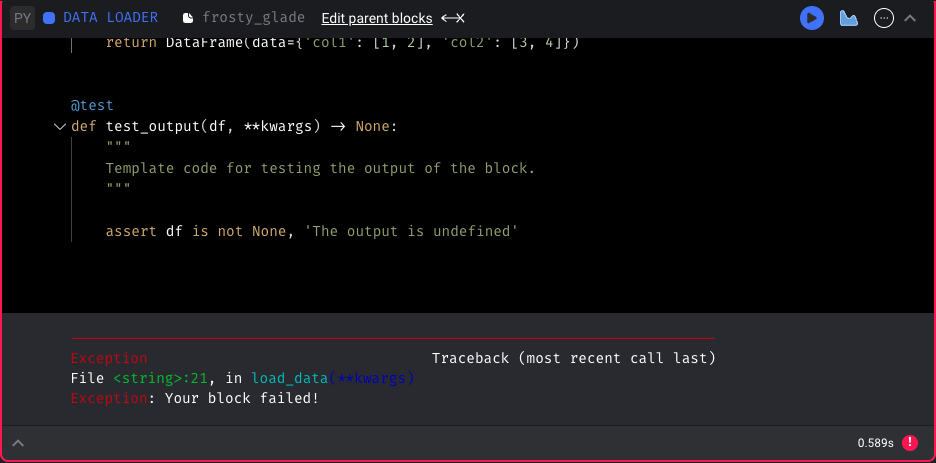

Update notebook error display

When executing a block in the notebook and an error occurs, show the stack trace of the error without including the custom code wrapper (useless information).

Before:

After:

Data integration pipeline improvements

MySQL

- Add Mage automatically created columns to destination table if table already exists in MySQL.

- Don’t lower case column names for MySQL destination.

Commercetools

- Add inventory stream for Commercetools source.

Outreach

- Fix outreach source rate limit issue.

Snowflake

- Fix Snowflake destination column comparison when altering table. Use uppercase for column names if

disable_double_quotesis True. - Escape single quote when converting array values.

Streaming pipeline improvements

- Truncate print messages in execution output to prevent freezing the browser.

- Disable keyboard shortcuts in streaming pipelines to run blocks.

- Add async handler to streaming source base class. You can set

consume_method = SourceConsumeMethod.READ_ASYNCin your streaming source class. Then it'll use read_async method.

Pass event variable to kwargs for event trigger pipelines

Mag supports triggering pipelines on AWS events. Now, you can access the raw event data in block method via kwargs['event'] . This enhancement enables you to easily customize your pipelines based on the event trigger and to handle the event data as needed within your pipeline code.

Other bug fixes & polish

- Fix “Circular import” error of using the

secret_varin repo's metadata.yaml - Fix bug: Tooltip at very right of Pipeline Runs or Block Runs graphs added a horizontal overflow.

- Fix bug: If an upstream dependency was added on the Pipeline Editor page, stale upstream connections would be updated for a block when executing a block via keyboard shortcut (i.e. cmd/ctrl+enter) inside the block code editor.

- Cast column types (int, float) when reading block output Dataframe.

- Fix block run status caching issue in UI. Mage UI sometimes fetched stale block run statuses from backend, which is misleading. Now, the UI always fetches the latest block run status without cache.

- Fix timezone mismatch issue for pipeline schedule execution date comparison so that there’re no duplicate pipeline runs created.

- Fix bug: Sidekick horizontal scroll bar not wide enough to fit 21 blocks when zoomed all the way out.

- Fix bug: When adding a block between two blocks, if the first block was a SQL block, it would use the SQL block content to create a block regardless of the block language.

- Fix bug: Logs for pipeline re-runs were not being filtered by timestamp correctly due to execution date of original pipeline run being used for filters.

- Increase canvas size of dependency graph to accommodate more blocks / blocks with long names.

View full Changelog

Published by thomaschung408 over 1 year ago

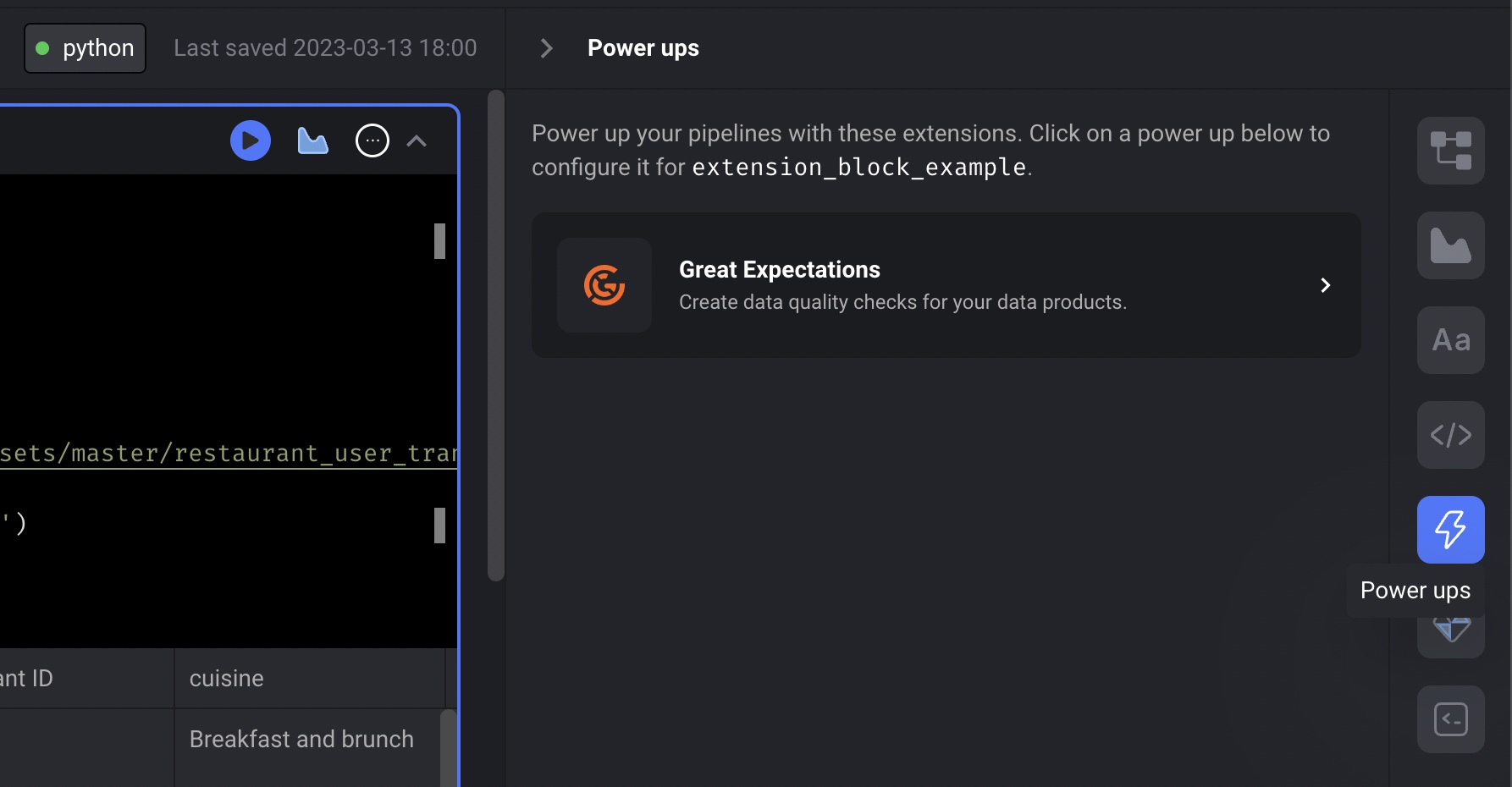

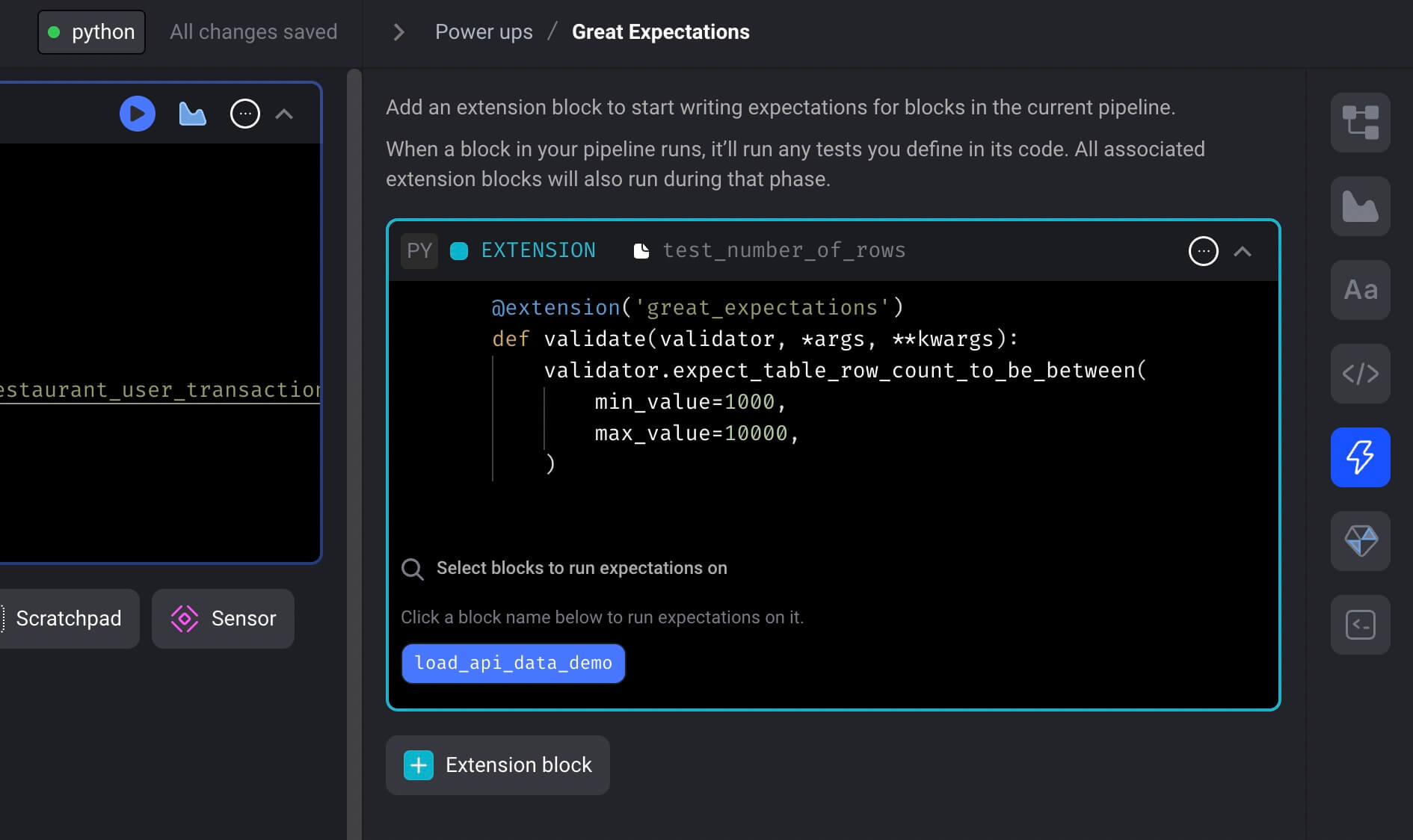

Great Expectations integration

Mage is now integrated with Great Expectations to test the data produced by pipeline blocks.

You can use all the expectations easily in your Mage pipeline to ensure your data quality.

Follow the doc to add expectations to your pipeline to run tests for your block output.

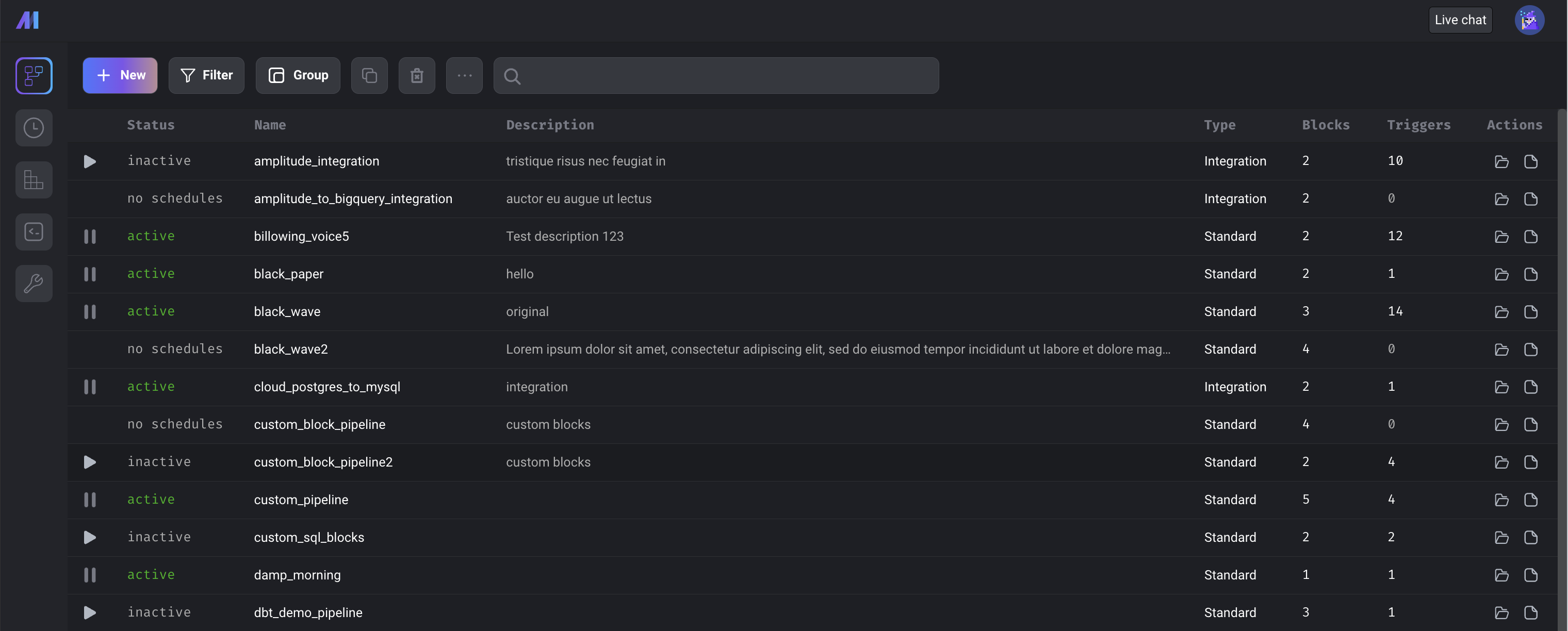

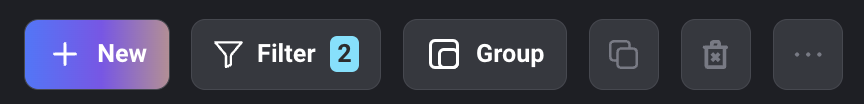

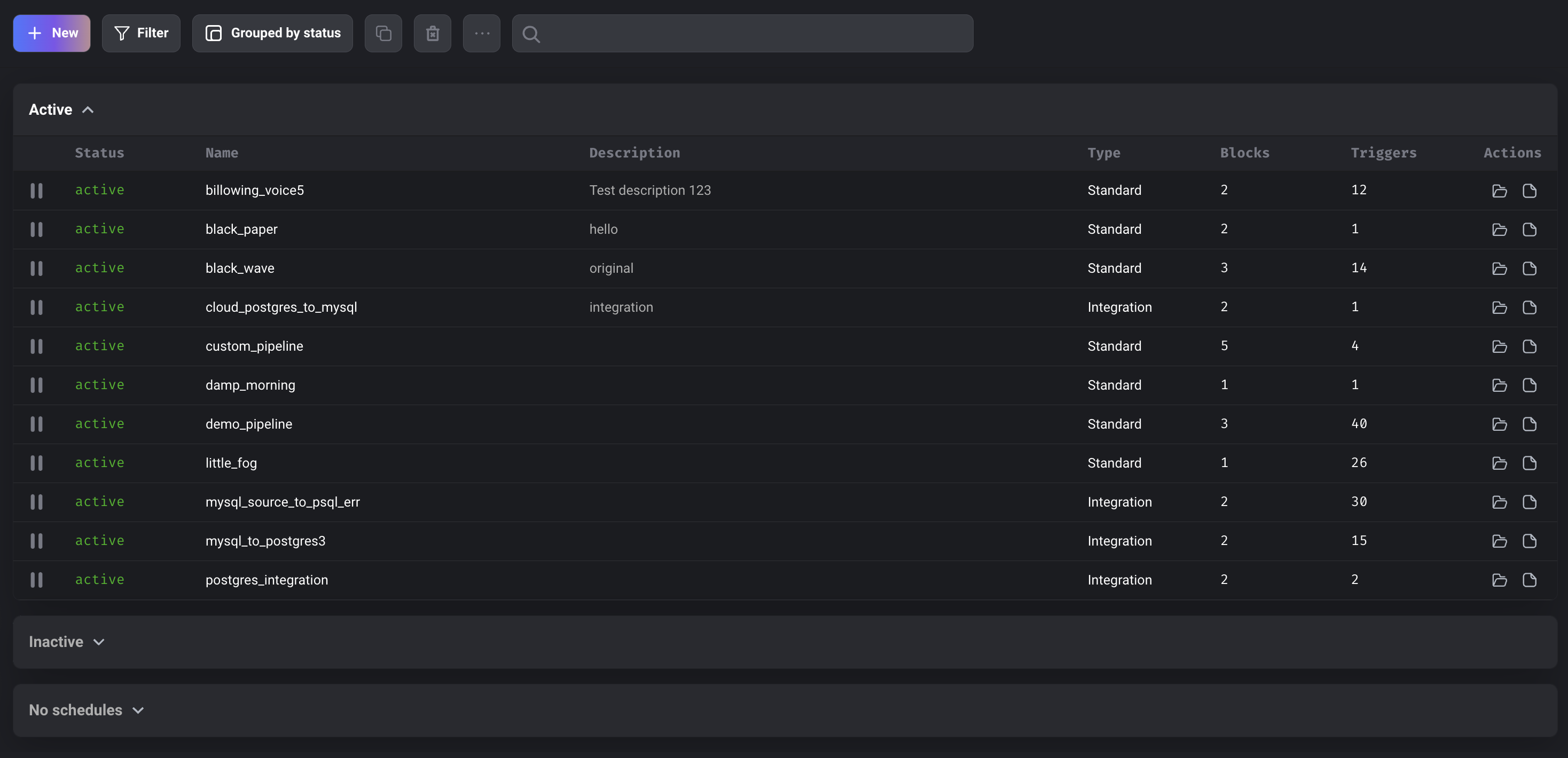

Pipeline dashboard updates

-

Added pipeline description.

-

Single click on a row no longer opens a pipeline. In order to open a pipeline now, users can double-click a row, click on the pipeline name, or click on the open folder icon at the end of the row.

-

Select a pipeline row to perform an action (e.g. clone, delete, rename, or edit description).

- Clone pipeline (icon with 2 overlapping squares) - Cloning the selected pipeline will create a new pipeline with the same configuration and code blocks. The blocks use the same block files as the original pipeline. Pipeline triggers, runs, backfills, and logs are not copied over to the new pipeline.

- Delete pipeline (trash icon) - Deletes selected pipeline

- Rename pipeline (item in dropdown menu under ellipsis icon) - Renames selected pipeline

- Edit description (item in dropdown menu under ellipsis icon) - Edits pipeline description. Users can hover over the description in the table to view more of it.

-

Users can click on the file icon under the

Actionscolumn to go directly to the pipeline's logs. -

Added search bar which searches for text in the pipeline

uuid,name, anddescriptionand filters the pipelines that match. -

The create, update, and delete actions are not accessible by Viewer roles.

-

Added badge in Filter button indicating number of filters applied.

-

Group pipelines by

statusortype.

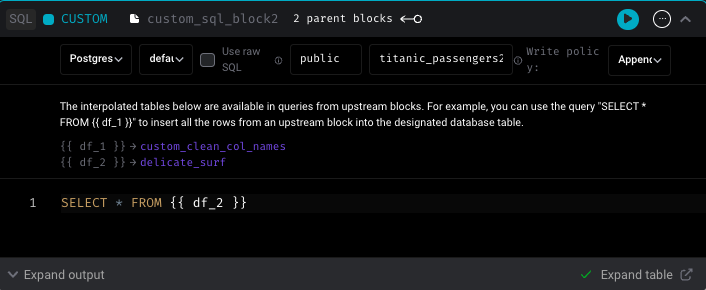

SQL block improvements

Toggle SQL block to not create table

Users can write raw SQL blocks and only include the INSERT statement. CREATE TABLE statement isn’t required anymore.

Support writing SELECT statements in SQL using raw SQL

Users can write SELECT statements using raw SQL in SQL blocks now.

Find all supported SQL statements using raw SQL in this doc.

Support for ssh tunnel in multiple blocks

When using SSH tunnel to connect to Postgres database, SSH tunnel was originally only supported in block run at a time due to port conflict. Now Mage supports SSH tunneling in multiple blocks by finding the unused port as the local port. This feature is also supported in Python block when using mage_ai.io.postgres module.

Data integration pipeline

New source: Pipedrive

Shout out to Luis Salomão for his continuous contribution to Mage. The new source Pipedrive is available in Mage now.

Fix BigQuery “query too large” error

Add check for size of query since that can potentially exceed the limit.

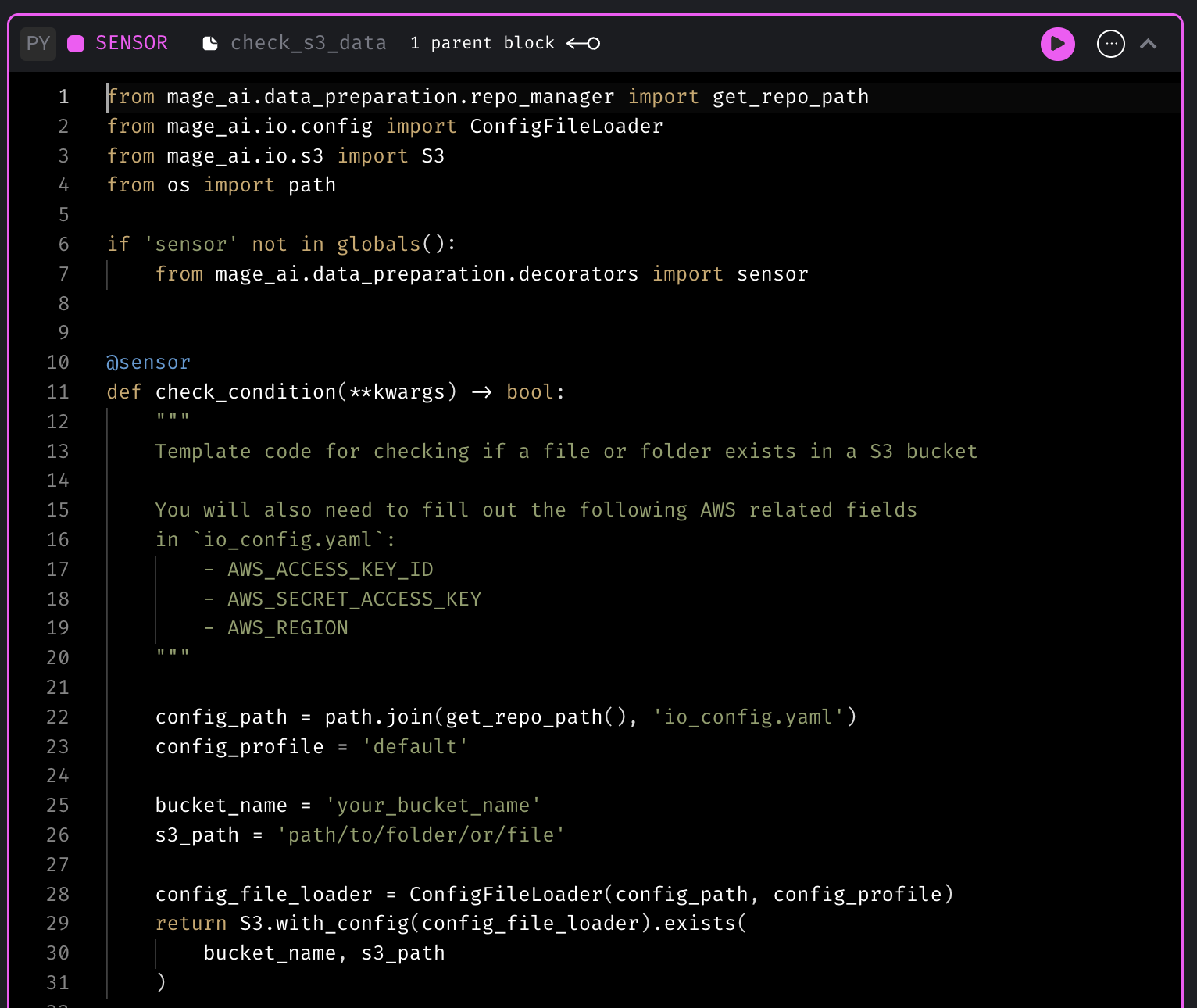

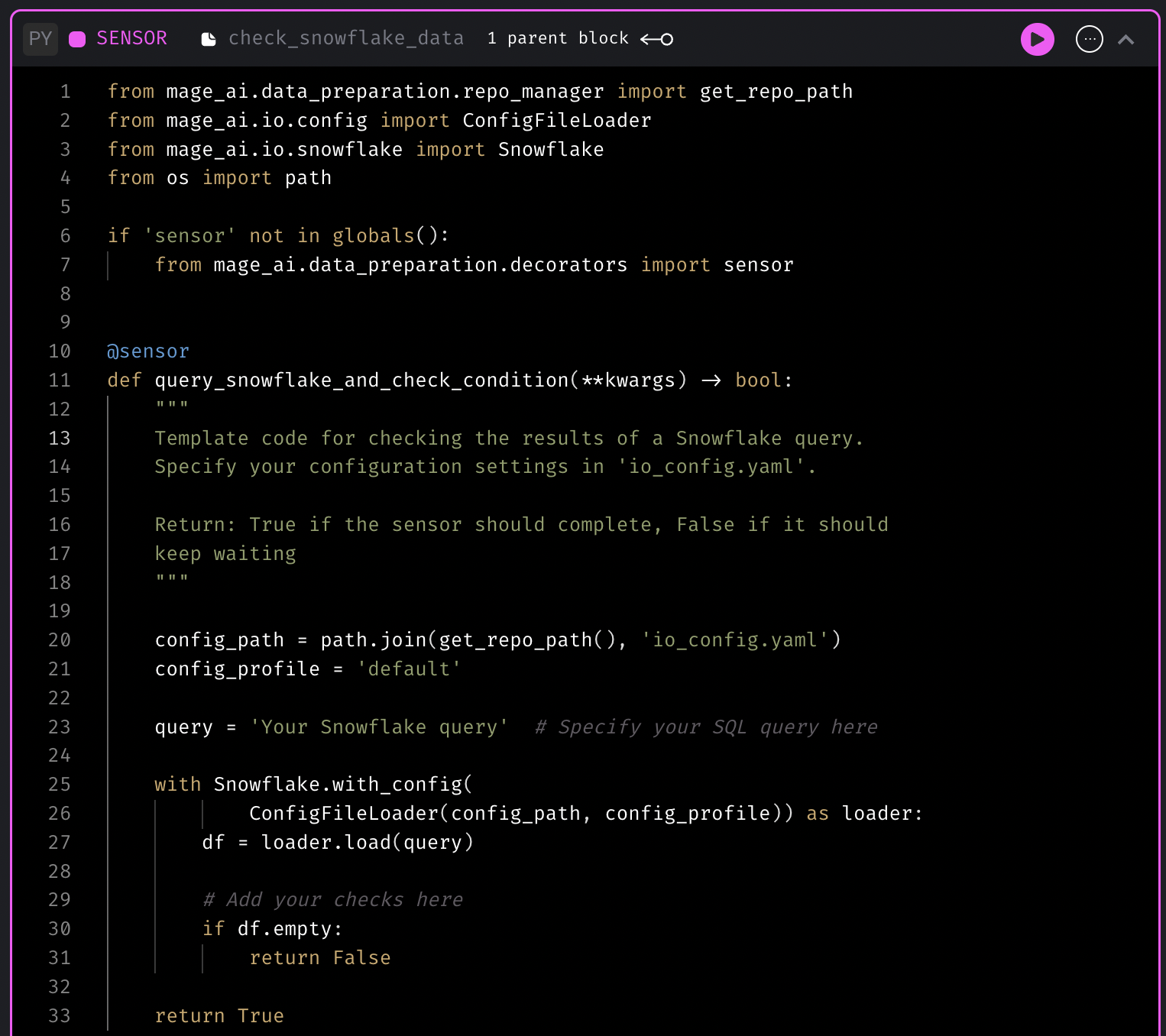

New sensor templates

Sensor block is used to continuously evaluate a condition until it’s met. Mage now has more sensor templates to check whether data lands in S3 bucket or SQL data warehouses.

Sensor template for checking if a file exists in S3

Sensor template for checking the data in SQL data warehouse

Support for Spark in standalone mode (self-hosted)

Mage can connect to a standalone Spark cluster and run PySpark code on it. You can set the environment variable SPARK_MASTER_HOST in your Mage container or instance. Then running PySpark code in a standard batch pipeline will work automagically by executing the code in the remote Spark cluster.

Follow this doc to set up Mage to connect to a standalone Spark cluster.

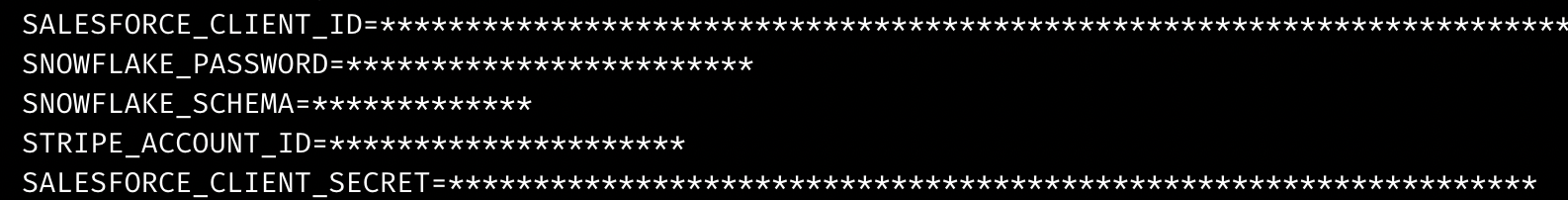

Mask environment variable values with stars in output

Mage now automatically masks environment variable values with stars in terminal output or block output to prevent showing sensitive data in plaintext.

Other bug fixes & polish

-

Improve streaming pipeline logging

- Show streaming pipeline error logging

- Write logs to multiple files

-

Provide the working NGINX config to allow Mage WebSocket traffic.

location / { proxy_pass http://127.0.0.1:6789; proxy_http_version 1.1; proxy_set_header Upgrade $http_upgrade; proxy_set_header Connection "Upgrade"; proxy_set_header Host $host; } -

Fix raw SQL quote error.

-

Add documentation for developer to add a new source or sink to streaming pipeline: https://docs.mage.ai/guides/streaming/contributing

View full Changelog

Published by thomaschung408 over 1 year ago

Disable editing files or executing code in production environment

You can configure Mage to not allow any edits to pipelines or blocks in production environment. Users will only be able to create triggers and view the existing pipelines.

Doc: https://docs.mage.ai/production/configuring-production-settings/overview#read-only-access

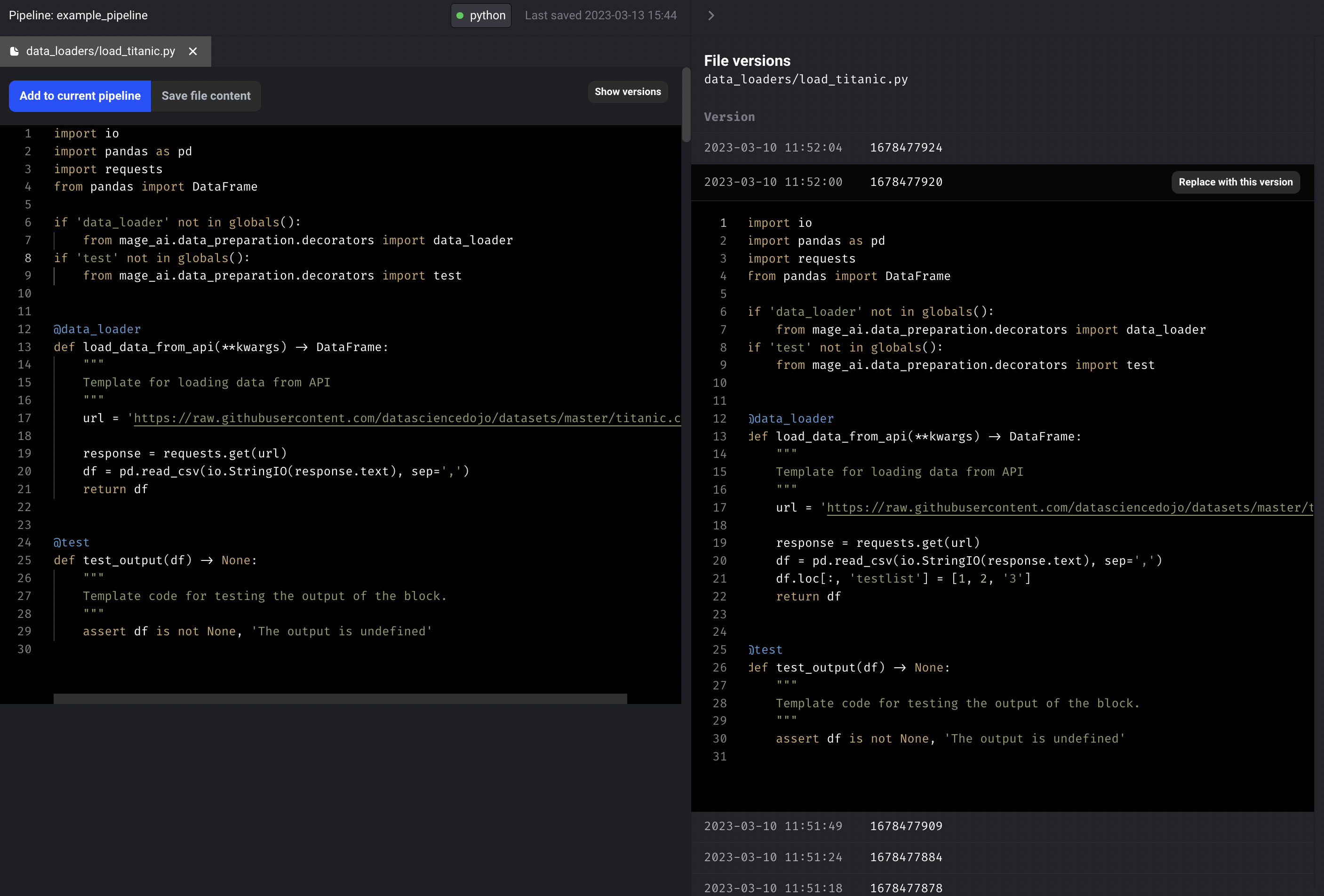

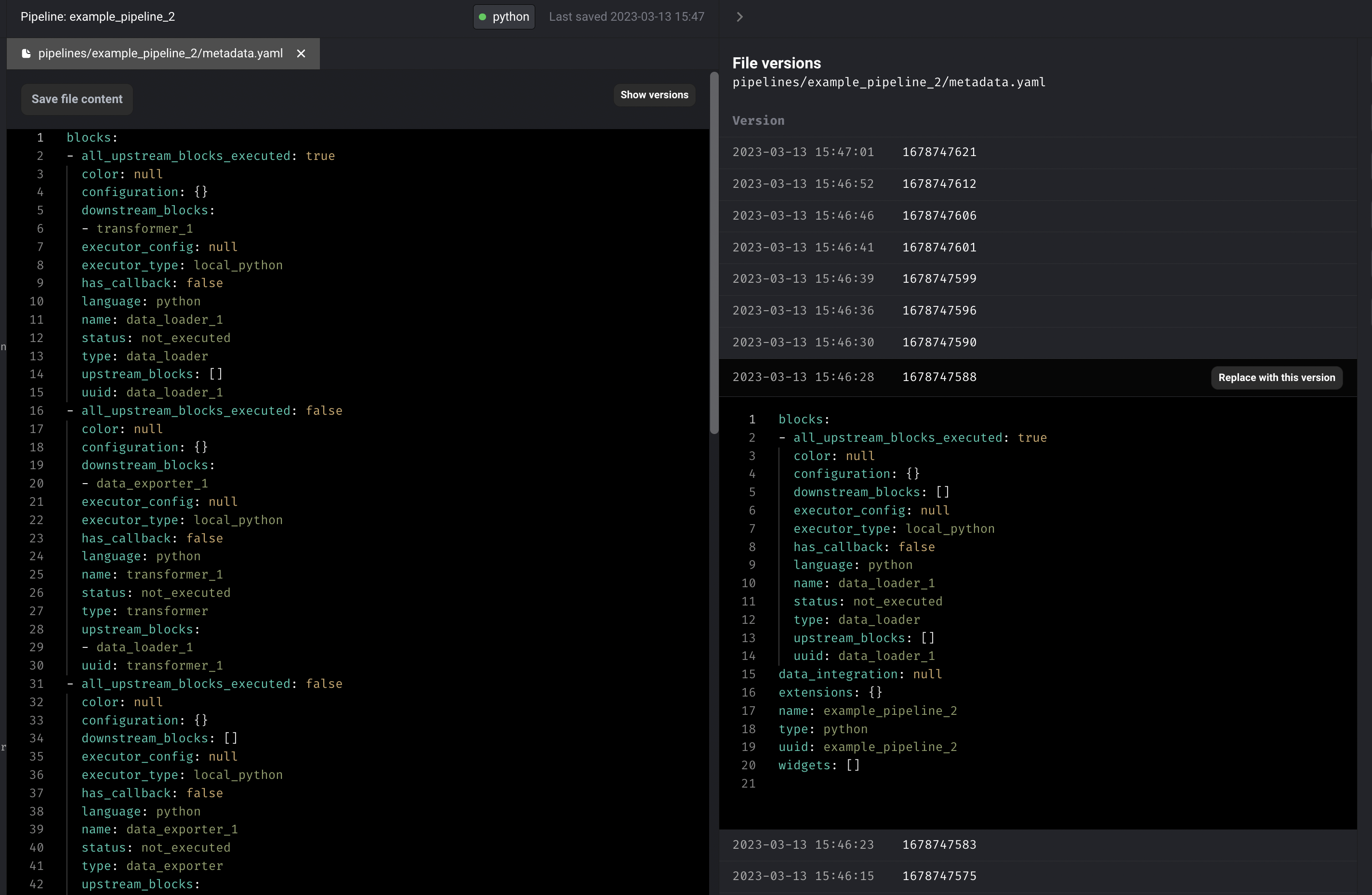

Pipeline and file versioning

- Create versions of a file for every create or update to the content. Display the versions and allow user to update current file content to a specific file version. Doc: https://docs.mage.ai/development/versioning/file-versions

- Support pipeline file versioning. Display the historical pipeline versions and allow user to roll back to a previous pipeline version if pipeline config is messed up.

Support LDAP authentication

Shout out to Dhia Eddine Gharsallaoui for his contribution of adding LDAP authentication method to Mage. When LDAP authentication is enabled, users will need to provide their LDAP credentials to log in to the system. Once authenticated, Mage will use the authorization filter to determine the user’s permissions based on their LDAP group membership.

Follow the guide to set up LDAP authentication.

DBT support for SQL Server

Support running SQL Server DBT models in Mage.

Tutorial for setting up a DBT project in Mage: https://docs.mage.ai/tutorials/setup-dbt

Helm deployment

Mage can now be deployed to Kubernetes with Helm: https://mage-ai.github.io/helm-charts/

How to install Mage Helm charts

helm repo add mageai https://mage-ai.github.io/helm-charts

helm install my-mageai mageai/mageai

To customize the mount volume for Mage container, you’ll need to customize the values.yaml

-

Get the

values.yamlwith the commandhelm show values mageai/mageai > values.yaml -

Edit the

volumesconfig invalues.yamlto mount to your Mage project path

Doc: https://docs.mage.ai/production/deploying-to-cloud/using-helm

Integration with Spark running in the same Kubernetes cluster

When you run Mage and Spark in the same Kubernetes cluster, you can set the environment variable SPARK_MASTER_HOST to the url of the master node of the Spark cluster in Mage container. Then you’ll be able to connect Mage to your Spark cluster and execute PySpark code in Mage.

Follow this guide to use Mage with Spark in Kubernetes cluster.

Improve Kafka source and sink for streaming pipeline

- Set api_version in Kafka source and Kafka destination

- Allow passing raw message value to transformer so that custom deserialization logic can be applied in transformer (e.g. custom Protobuf deserialization logic).

Data integration pipeline

- Add more streams to Front app source

- Channels

- Custom Fields

- Conversations

- Events

- Rules

- Fix Snowflake destination alter table command errors

- Fix MySQL source bytes decode error

Pipeline table filtering

Add filtering (by status and type) for pipelines.

Renaming a pipeline transfers all the existing triggers, variables, pipeline runs, block runs, etc to the new pipeline

- When renaming a pipeline, transfer existing triggers, backfills, pipeline runs, and block runs to the new pipeline name.

- Prevent users from renaming pipeline to a name already in use by another pipeline.

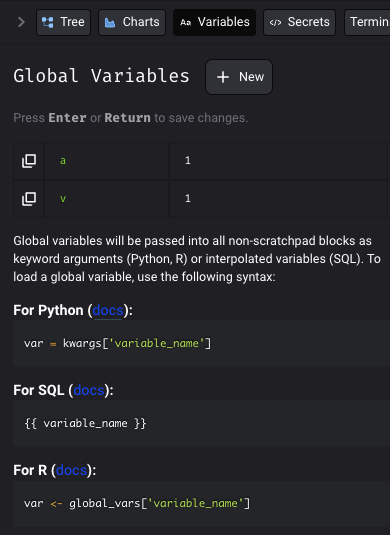

Update the variable tab with more instruction for SQL and R variables

- Update the Variables tab with more instruction for SQL and R variables.

- Improve SQL/R block upstream block interpolation helper hints.

Other bug fixes & polish

- Update sidekick to have a vertical navigation

- Fix

Allow blocks to failsetting for pipelines with dynamic blocks. - Git sync: Overwrite origin url with the user's remote_repo_link if it already exists.

- Resolve DB model refresh issues in pipeline scheduler

- Fix bug: Execute pipeline in Pipeline Editor gets stuck at first block.

- Use the upstream dynamic block’s block metadata as the downstream child block’s kwargs.

- Fix using reserved words as column names in mage.io Postgres export method

- Fix error

sqlalchemy.exc.PendingRollbackError: Can't reconnect until invalid transaction is rolled back.in API middleware - Hide "custom" add block button in streaming pipelines.

- Fix bug: Paste not working in Firefox browser (https) (Error: "navigator.clipboard.read is not a function").

View full Changelog

Published by thomaschung408 over 1 year ago

Allow pipeline to keep running even if other unrelated blocks fail

Mage pipeline used to stop running if any of the block run failed. A setting was added to continue running the pipeline even if a block in the pipeline fails during the execution.

Check out the doc to learn about the additional settings of a trigger.

Sync project with Github

If you have your pipeline data stored in a remote repository in Github, you can sync your local project with the remote repository through Mage.

Follow the doc to set up the sync with Github.

Data integration pipeline

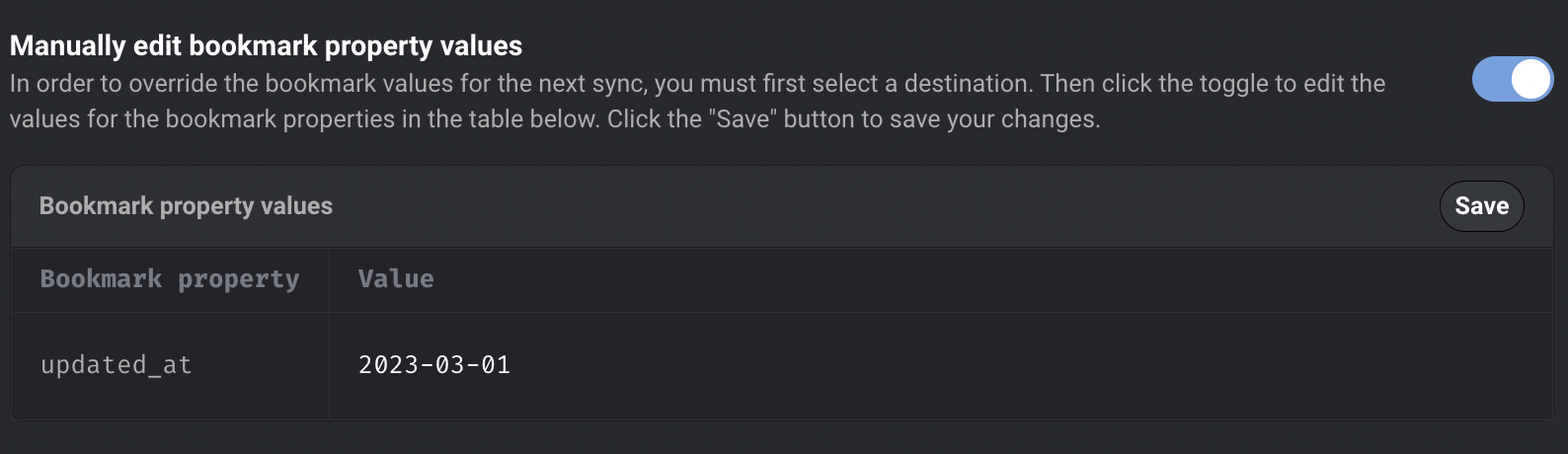

Edit bookmark property values for data integration pipeline from the UI

Edit bookmark property values from UI. User can edit the bookmark values, which will be used as a bookmark for the next sync. The bookmark values will automatically update to the last record synced after the next sync is completed. Check out the doc to learn about how to edit bookmark property values.

Improvements on existing sources and destinations

-

Use TEXT instead of VARCHAR with character limit as the column type in Postgres destination

-

Show a loader on a data integration pipeline while the list of sources and destinations are still loading

Streaming pipeline

Deserialize Protobuf messages in Kafka’s streaming source

Specify the Protobuf schema class path in the Kafka source config so that Mage can deserialize the Protobuf messages from Kafka.

Doc: https://docs.mage.ai/guides/streaming/sources/kafka#deserialize-message-with-protobuf-schema

Add Kafka as streaming destination

Doc: https://docs.mage.ai/guides/streaming/destinations/kafka

Ingest data to Redshift via Kinesis

Mage doesn’t directly stream data into Redshift. Instead, Mage can stream data to Kinesis. You can configure streaming ingestion for your Amazon Redshift cluster and create a materialized view using SQL statements.

Doc: https://docs.mage.ai/guides/streaming/destinations/redshift

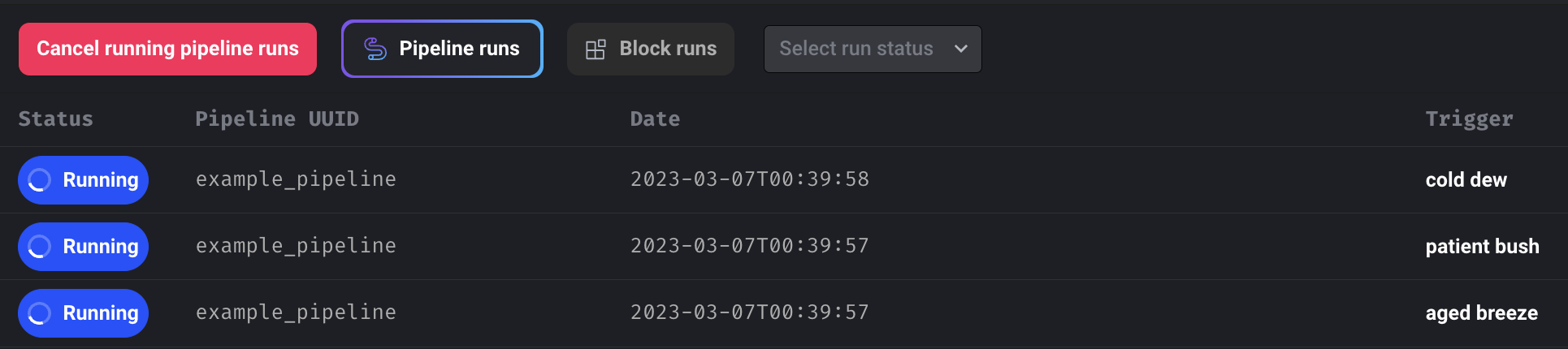

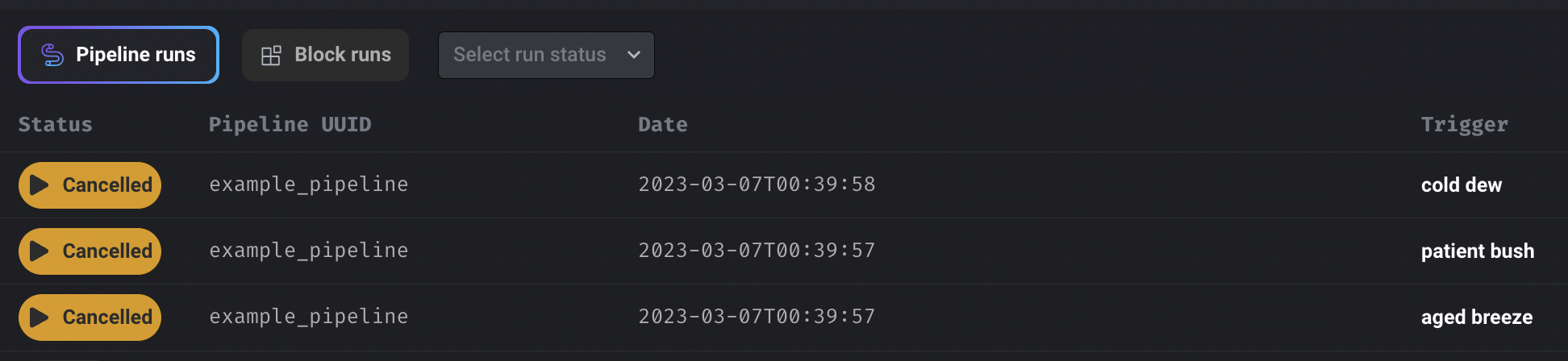

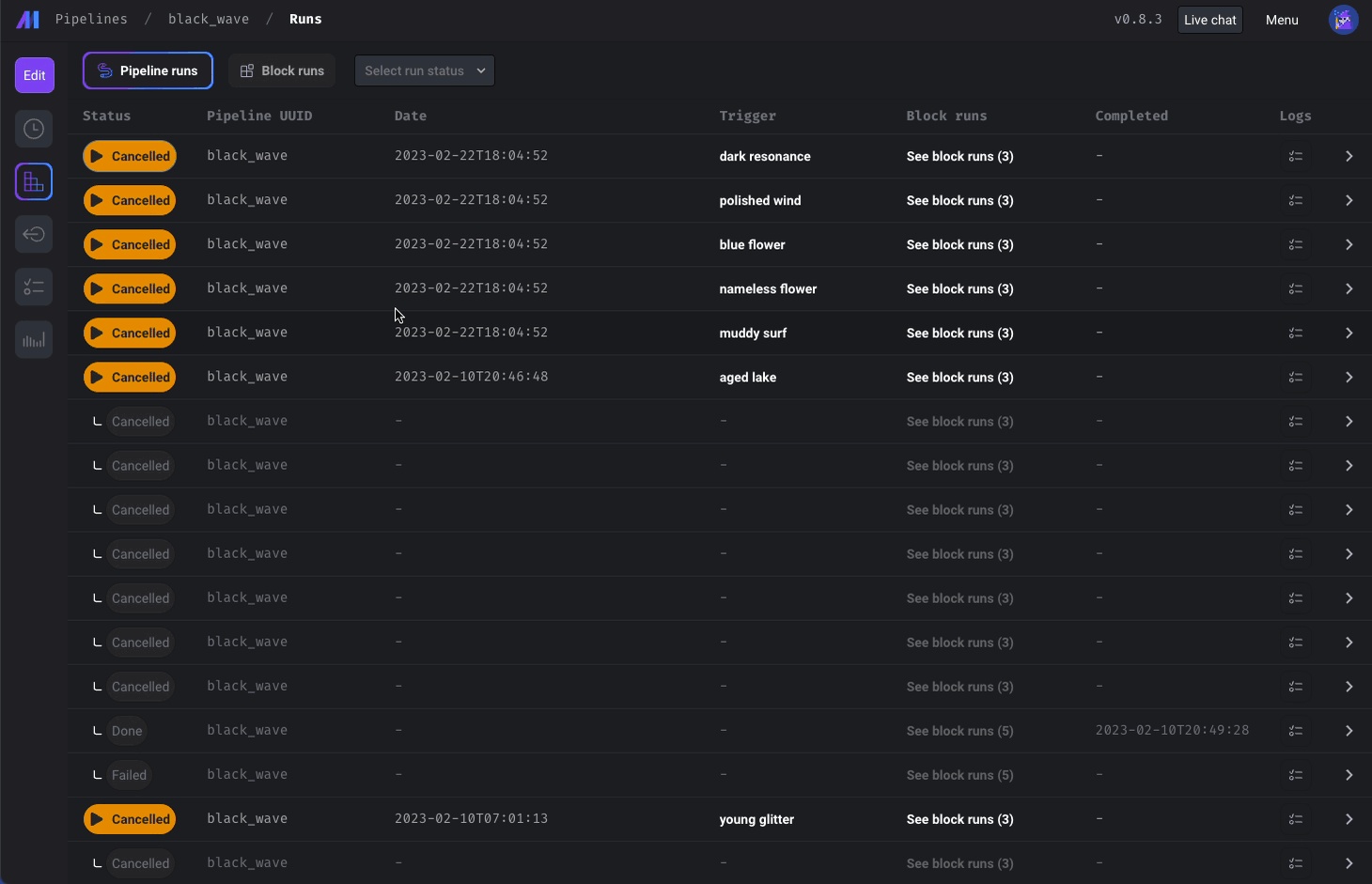

Cancel all running pipeline runs for a pipeline

Add the button to cancel all running pipeline runs for a pipeline.

Other bug fixes & polish

-

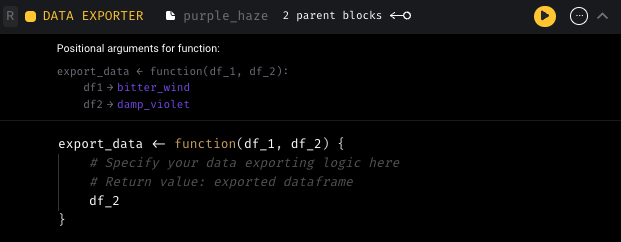

For the viewer role, don’t show the edit options for the pipeline

-

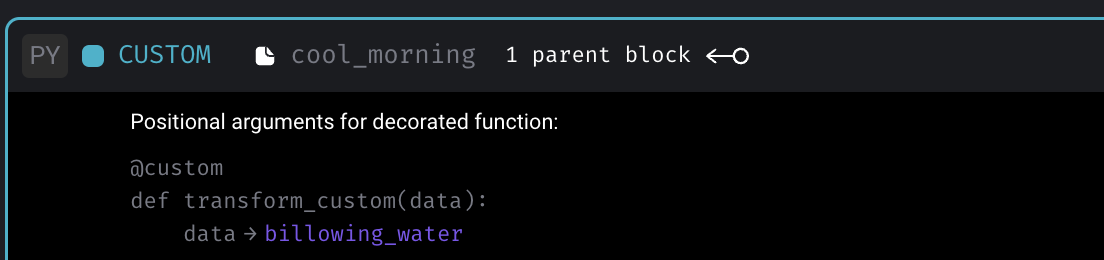

Show “Positional arguments for decorated function” preview for custom blocks

-

Disable notebook keyboard shortcuts when typing in input fields in the sidekick

View full Changelog

Published by thomaschung408 over 1 year ago

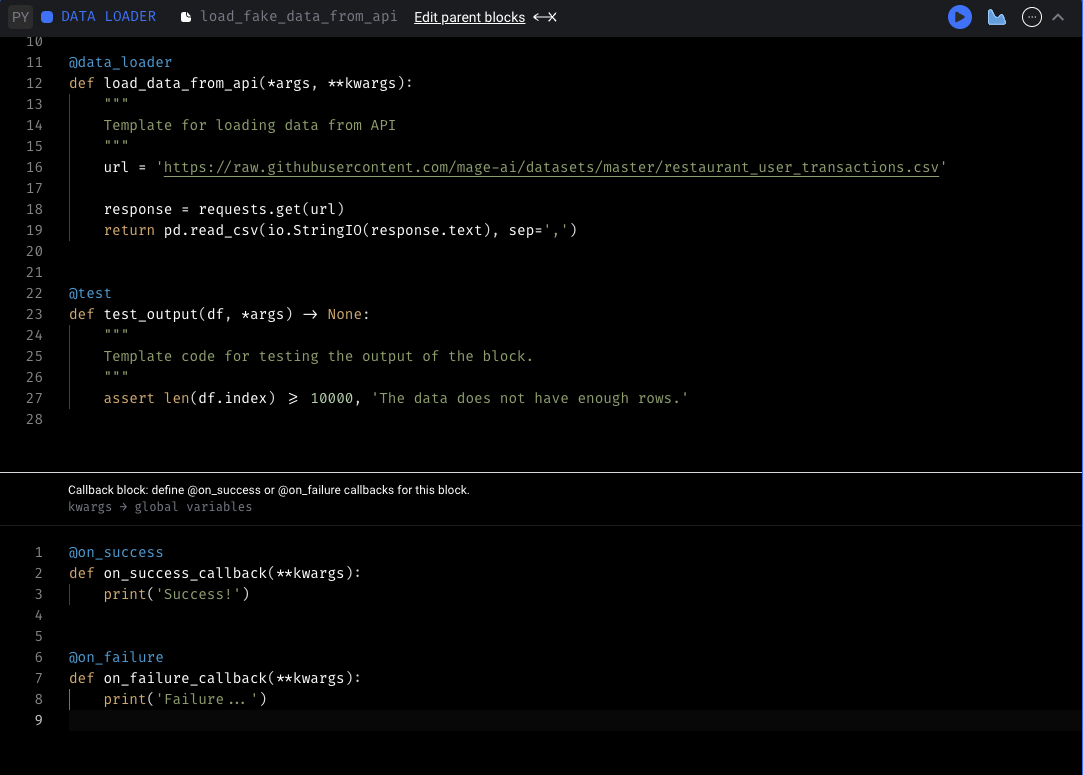

Configure callbacks on block success or failure

-

Add callbacks to run after your block succeeds or fails. You can add a callback by clicking “Add callback” in the “More actions” menu of the block (the three dot icon in the top right).

-

For more information about callbacks, check out the Mage documentation

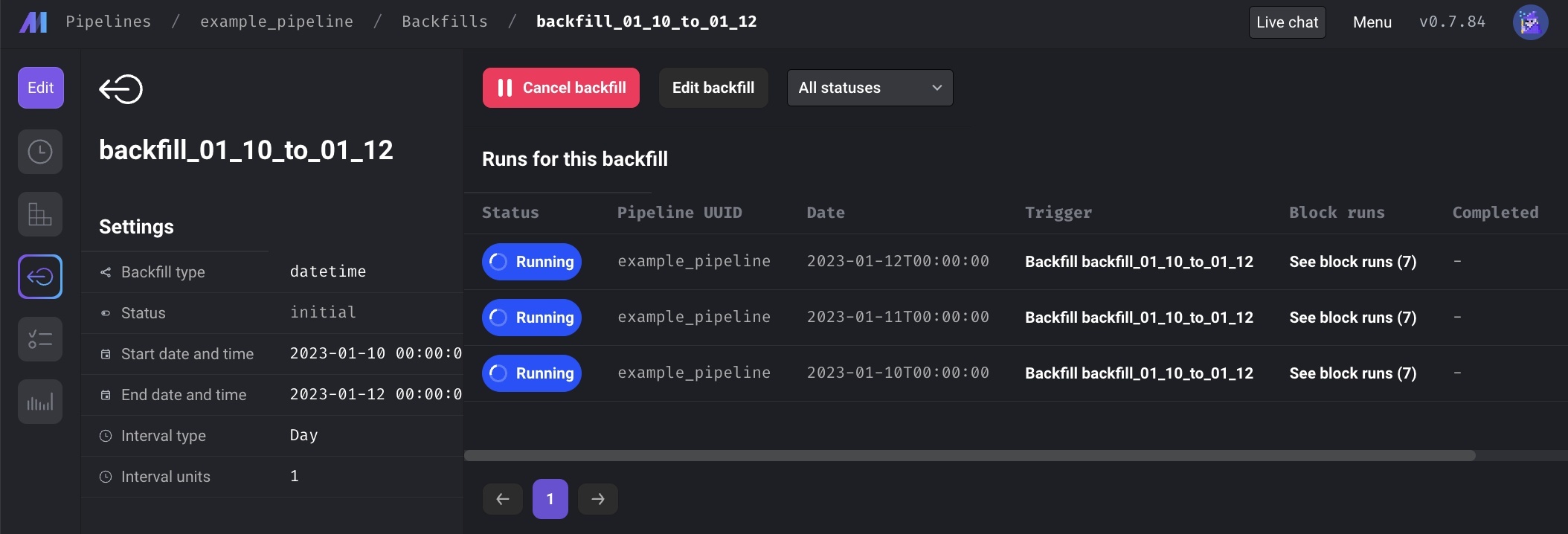

Backfill improvements

-

Show preview of total pipeline runs created and timestamps of pipeline runs that will be created before starting backfill.

-

Misc UX improvements with the backfills pages (e.g. disabling or hiding irrelevant items depending on backfill status, updating backfill table columns that previously weren't updating as needed)

Dynamic block improvements

- Support dynamic block to dynamic block

- Block outputs for dynamic blocks don’t show when clicking on the block run

DBT improvements

- View DBT block run sample model outputs

- Compile + preview, show compiled SQL, run/test/build model options, view lineage for single model, and more.

- When clicking a DBT block in the block runs view, show a sample query result of the model

- Only create upstream source if its used

- Don’t create upstream block SQL table unless DBT block reference it.

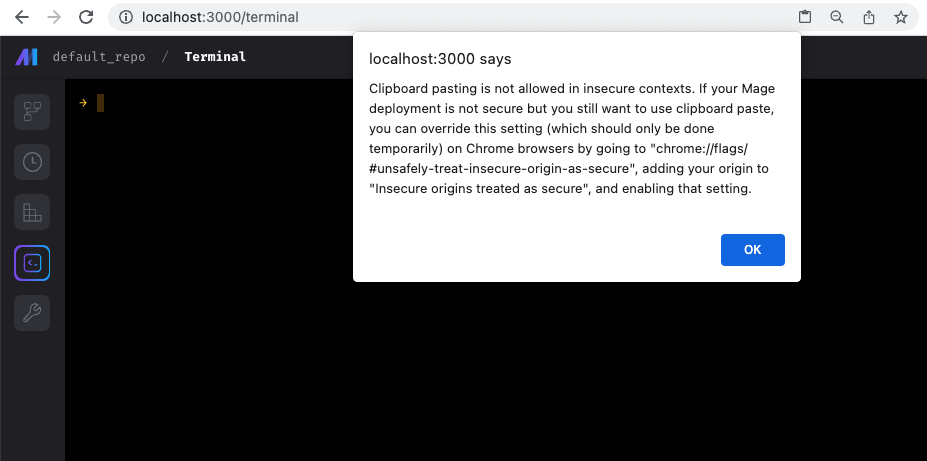

Handle multi-line pasting in terminal

File browser improvements

- Upload files and create new files in the root project directory

- Rename and delete any file from file browser

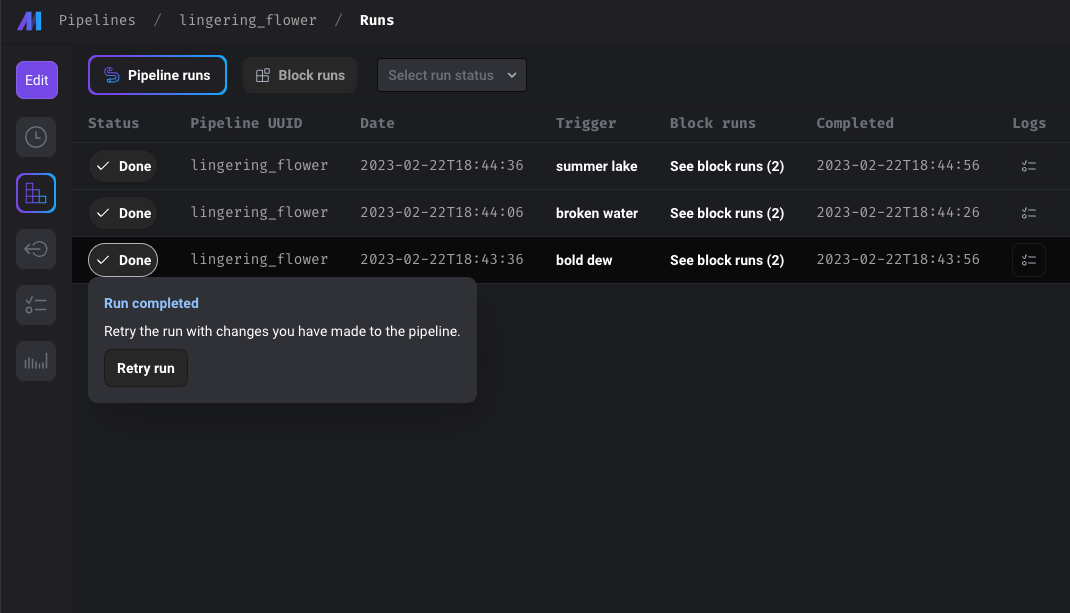

Other bug fixes & polish

-

Show pipeline editor main content header on Firefox. The header for the Pipeline Editor main content was hidden for Firefox browsers specifically (which prevented users from being able to change their pipeline names on Firefox).

-

Make retry run popup fully visible. Fix issue with Retry pipeline run button popup being cutoff.

-

Add alert with details on how to allow clipboard paste in insecure contexts

-

Show canceling status only for pipeline run being canceled. When multiple runs were being canceled, the status for other runs was being updated to "canceling" even though those runs weren't being canceled.

-

Remove table prop from destination config. The

tableproperty is not needed in the data integration destination config templates when building integration pipelines through the UI, so they've been removed. -

Update data loader, transformer, and data exporter templates to not require DataFrame.

-

Fix PyArrow issue

-

Fix data integration destination row syncing count

-

Fix emoji encode for BigQuery destination

-

Fix dask memory calculation issue

-

Fix Nan being display for runtime value on Syns page

-

Odd formatting on Trigger edit page dropdowns (e.g. Fequency) on Windows

-

Not fallback to empty pipeline when failing to reading pipeline yaml

View full Changelog

Published by thomaschung408 over 1 year ago

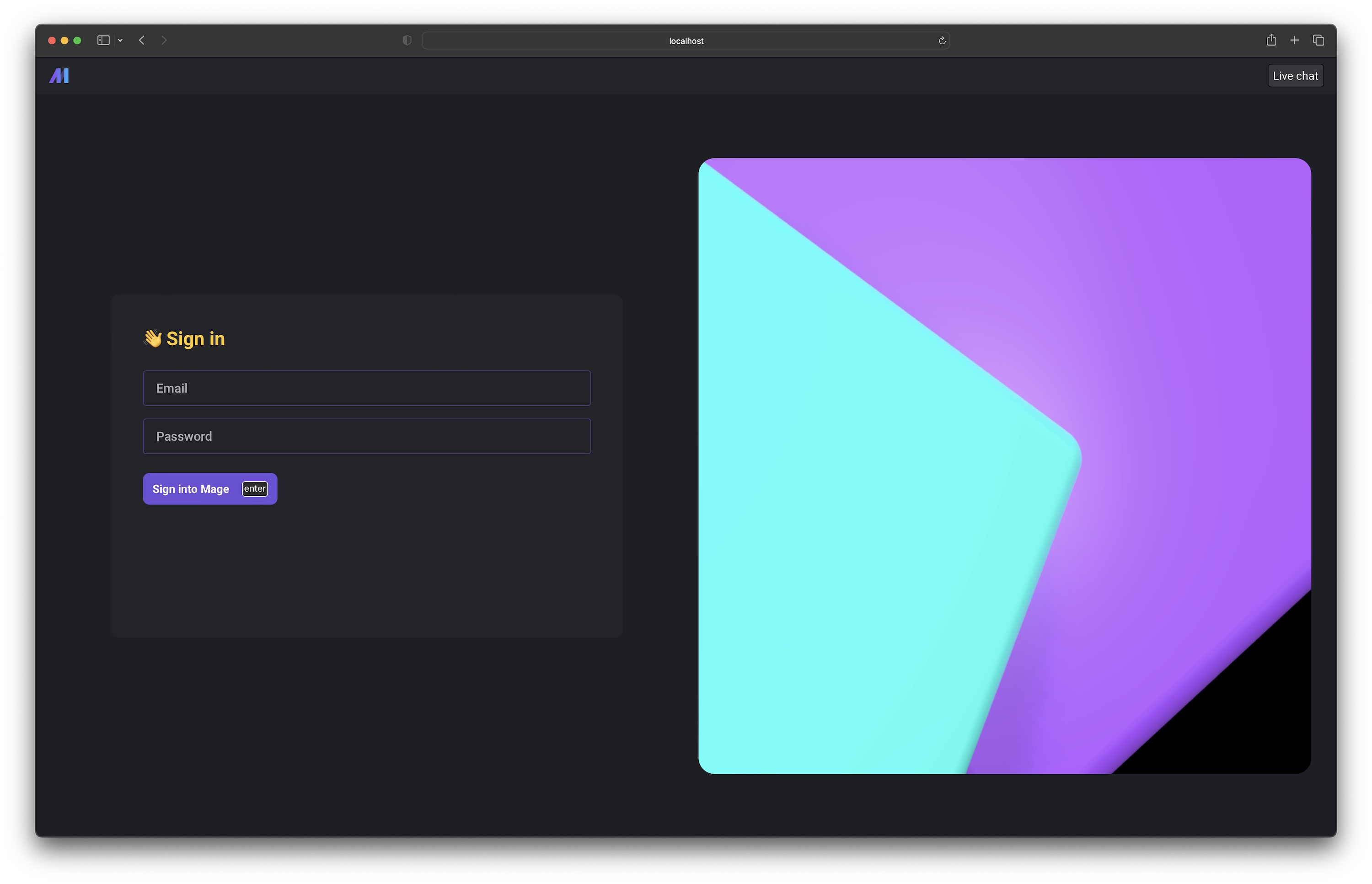

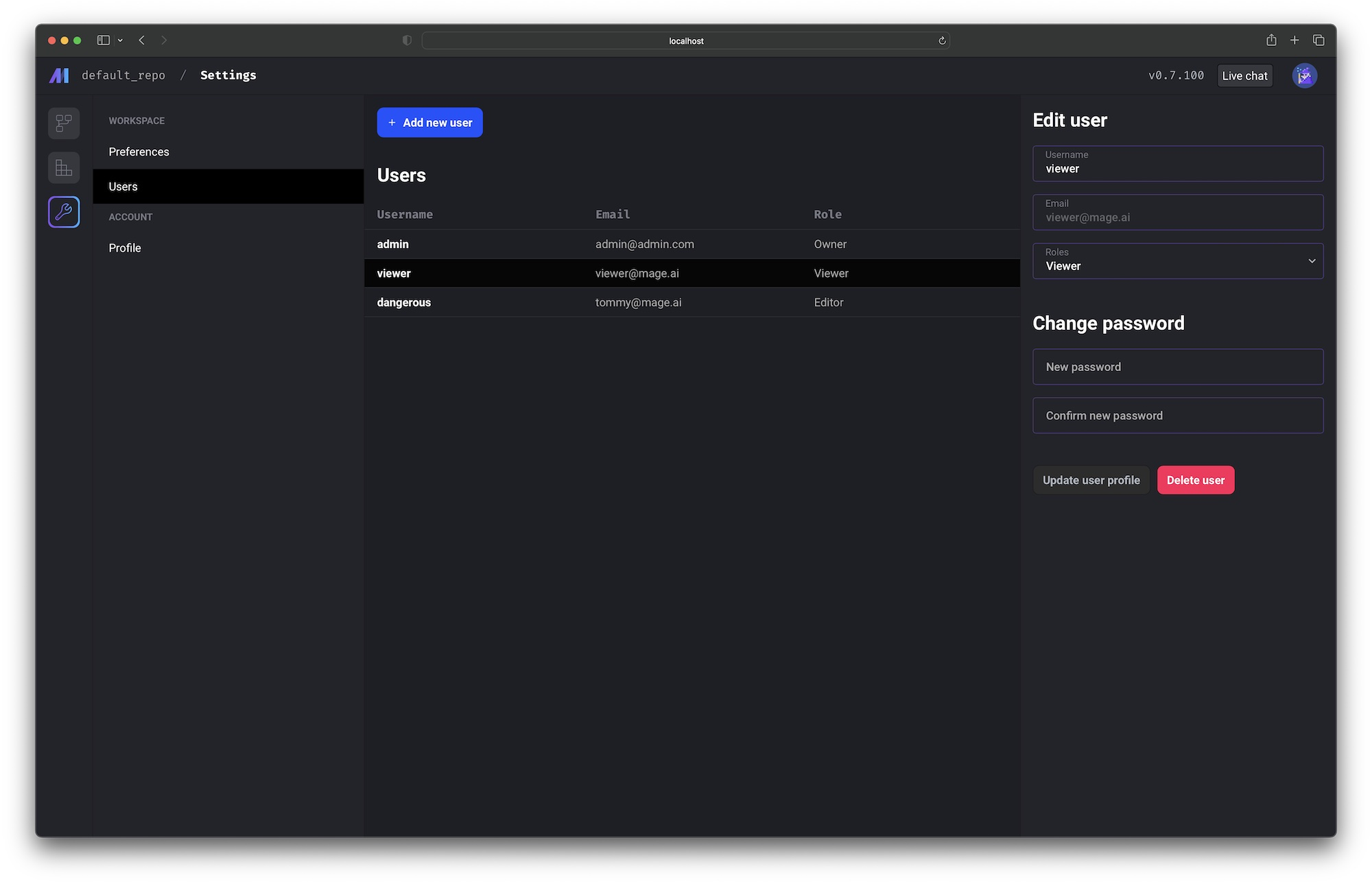

User login, management, authentication, roles, and permissions

User login and user level permission control is supported in mage-ai version 0.8.0 and above.

Setting the environment variable REQUIRE_USER_AUTHENTICATION to 1 to turn on user authentication.

Check out the doc to learn more about user authentication and permission control: https://docs.mage.ai/production/authentication/overview

Data integration

New sources

New destinations

Full lists of available sources and destinations can be found here:

- Sources: https://docs.mage.ai/data-integrations/overview#available-sources

- Destinations: https://docs.mage.ai/data-integrations/overview#available-destinations

Improvements on existing sources and destinations

- Update Couchbase source to support more unstructured data.

- Make all columns optional in the data integration source schema table settings UI; don’t force the checkbox to be checked and disabled.

- Batch fetch records in Facebook Ads streams to reduce number of requests.

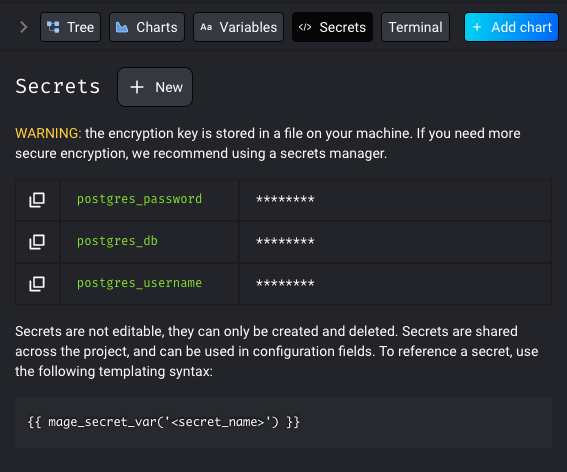

Add connection credential secrets through the UI and store encrypted in Mage’s database

In various surfaces in Mage, you may be asked to input config for certain integrations such as cloud databases or services. In these cases, you may need to input a password or an api key, but you don’t want it to be shown in plain text. To get around this issue, we created a way to store your secrets in the Mage database.

Check out the doc to learn more about secrets management in Mage: https://docs.mage.ai/development/secrets/secrets

Configure max number of concurrent block runs

Mage now supports limiting the number of concurrent block runs by customizing queue config, which helps avoid mage server being overloaded by too many block runs. User can configure the maximum number of concurrent block runs in project’s metadata.yaml via queue_config.

queue_config:

concurrency: 100

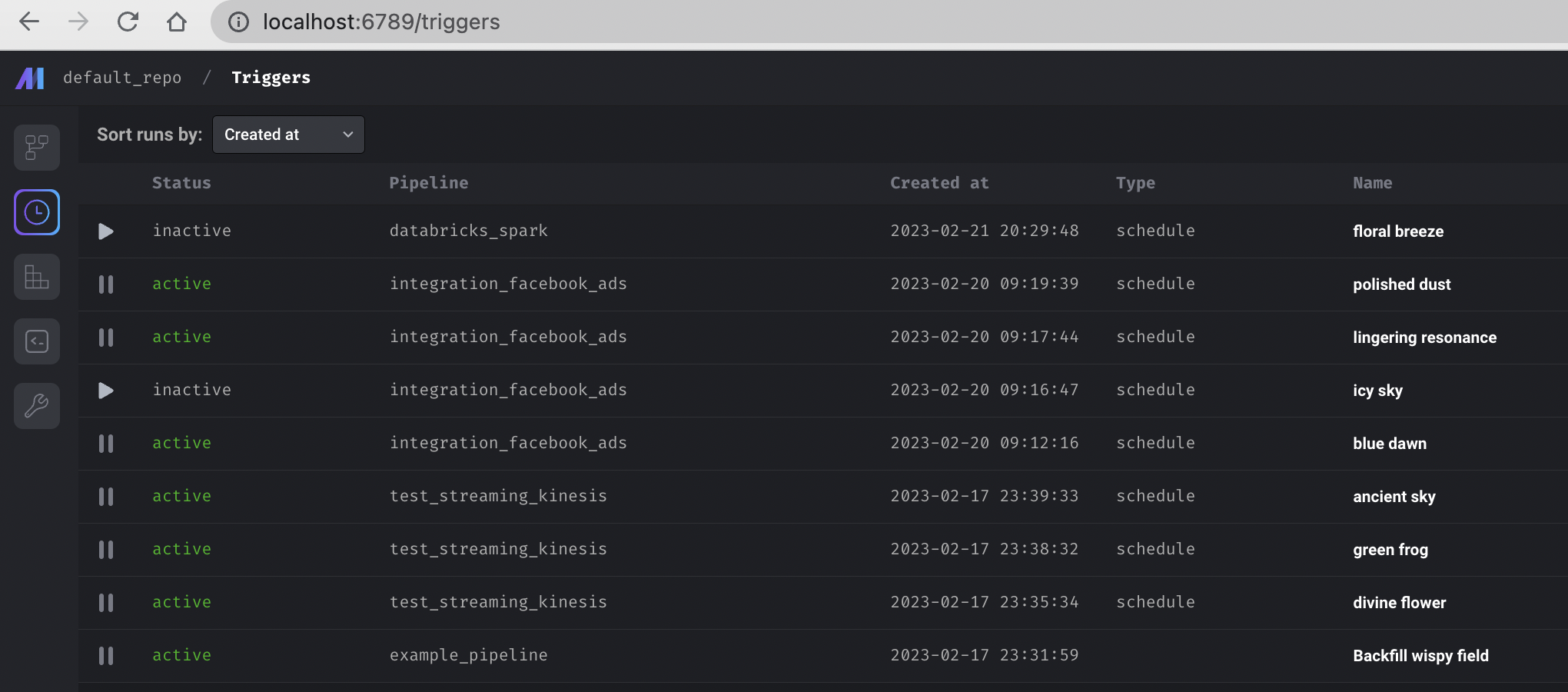

Add triggers list page and terminal tab

- Add a dedicated page to show all triggers.

- Add a link to the terminal in the main dashboard left vertical navigation and show the terminal in the main view of the dashboard.

Support running PySpark pipeline locally

Support running PySpark pipelines locally without custom code and settings.

If you have your Spark cluster running locally, you can just build your standard batch pipeline with PySpark code same as other Python pipelines. Mage handles data passing between blocks automatically for Spark DataFrames. You can use kwargs['spark'] in Mage blocks to access the Spark session.

Other bug fixes & polish

- Add MySQL data exporter template

- Add MySQL data loader template

- Upgrade Pandas version to 1.5.3

- Improve K8s executor

- Pass environment variables to k8s job pods

- Use the same image from main mage server in k8s job pods

- Store and return sample block output for large json object

- Support SASL authentication with Confluent Cloud Kafka in streaming pipeline

View full Changelog

Published by thomaschung408 over 1 year ago

Data integration

New sources

Full lists of available sources and destinations can be found here:

- Sources: https://docs.mage.ai/data-integrations/overview#available-sources

- Destinations: https://docs.mage.ai/data-integrations/overview#available-destinations

Improvements on existing sources and destinations

- Support deltalake connector in Trino destination

- Fix Outreach source bookmark comparison error

- Fix Facebook Ads source “User request limit reached” error

- Show more HubSpot source sync print log statements to give the user more information on the progress and activity

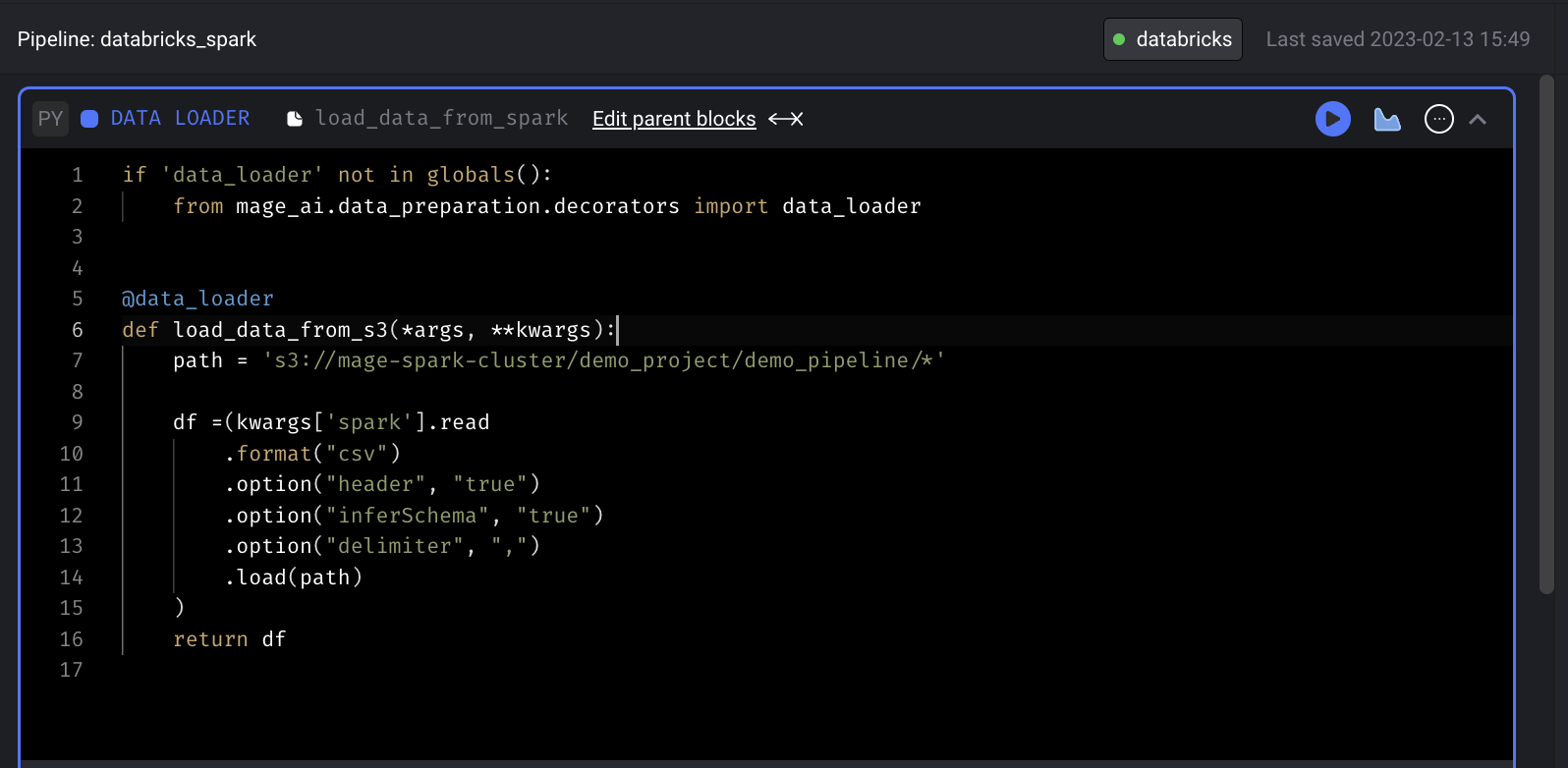

Databricks integration for Spark

Mage now supports building and running Spark pipelines with remote Databricks Spark cluster.

Check out the guide to learn about how to use Databricks Spark cluster with Mage.

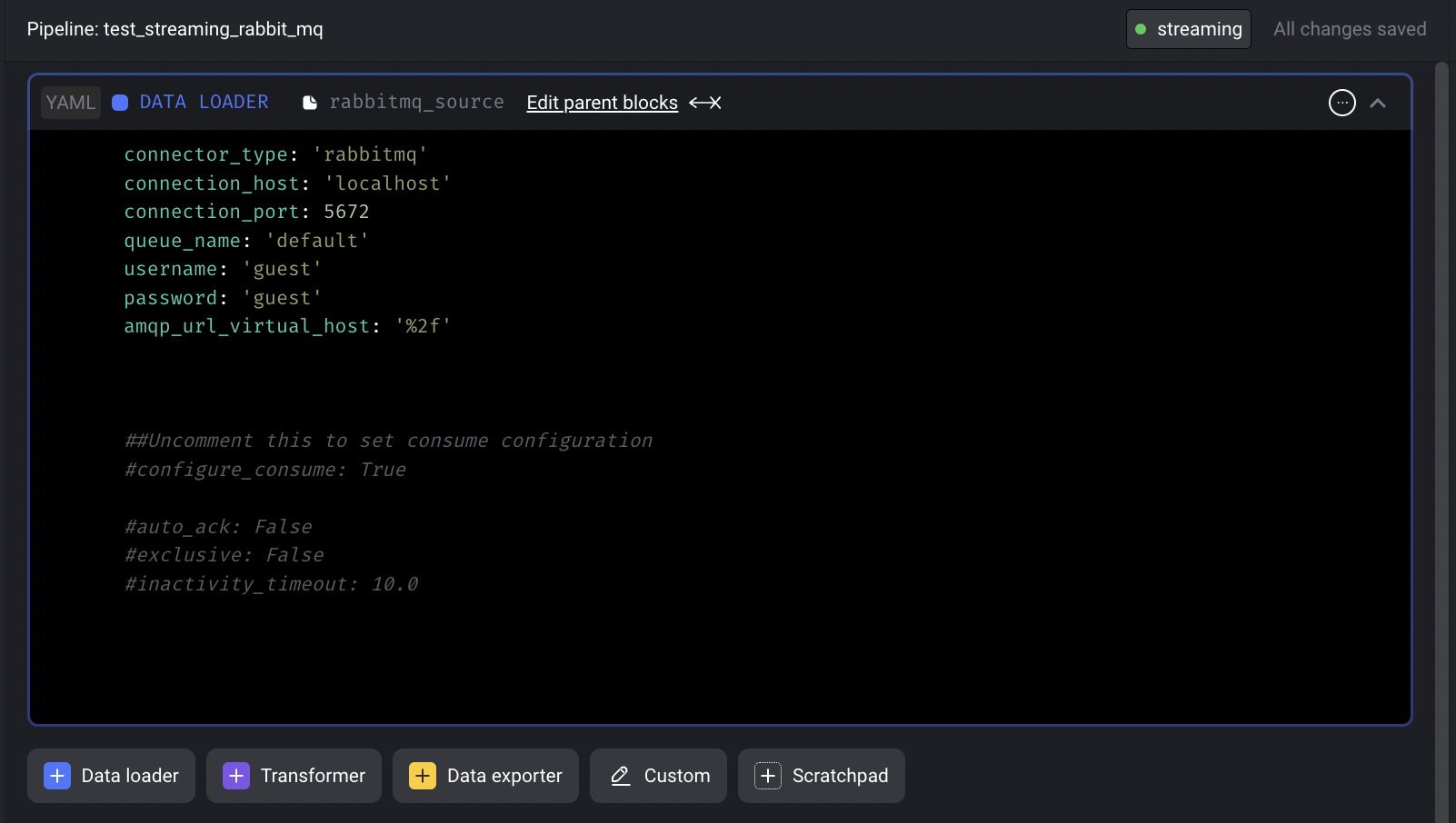

RabbitMQ streaming source

Shout out to Luis Salomão for his contribution of adding the RabbitMQ streaming source to Mage! Check out the doc to set up a streaming pipeline with RabbitMQ source.

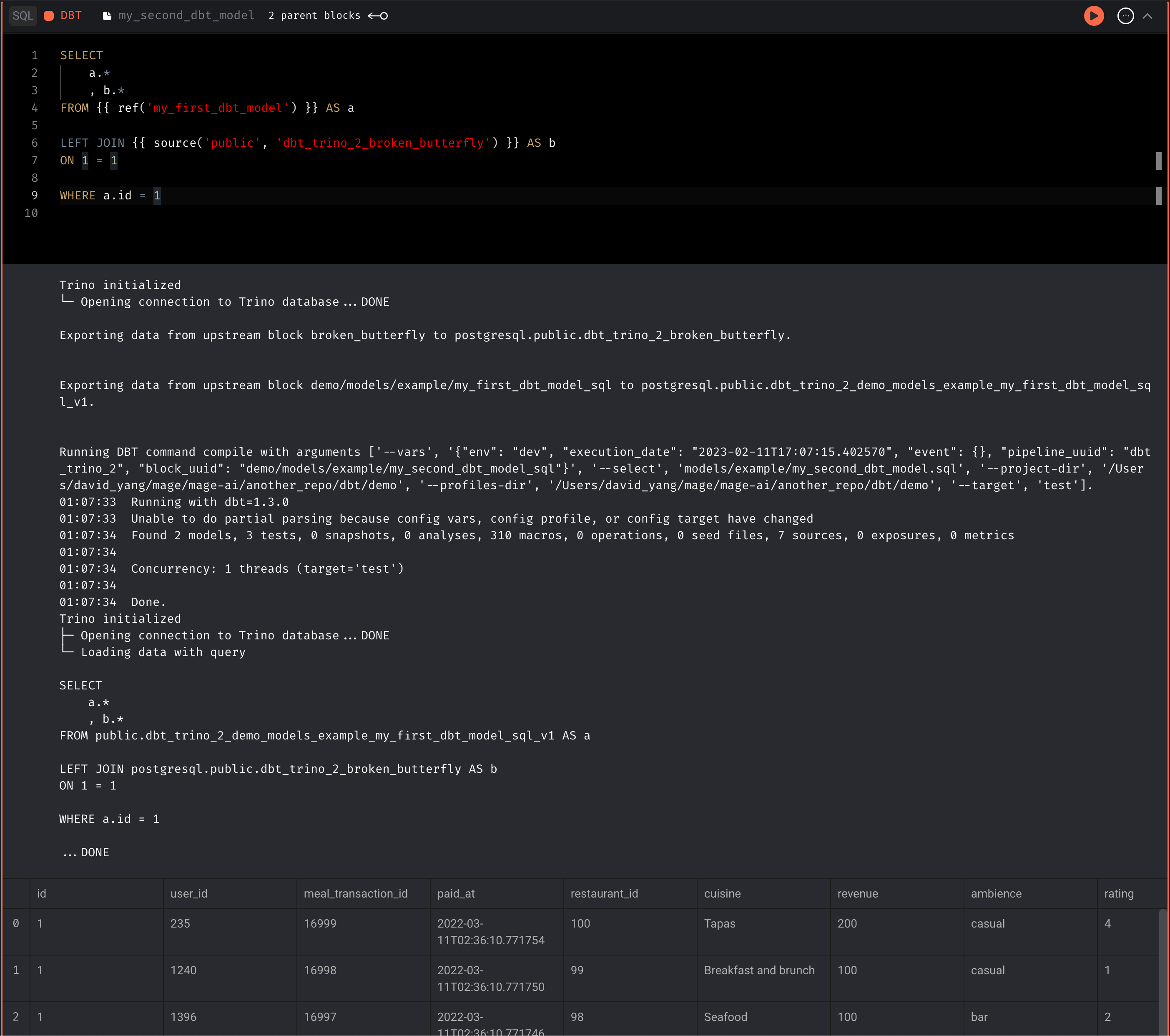

DBT support for Trino

Support running Trino DBT models in Mage.

More K8s support

- Allow customizing namespace by setting the

KUBE_NAMESPACEenvironment variable. - Support K8s executor on AWS EKS cluster.

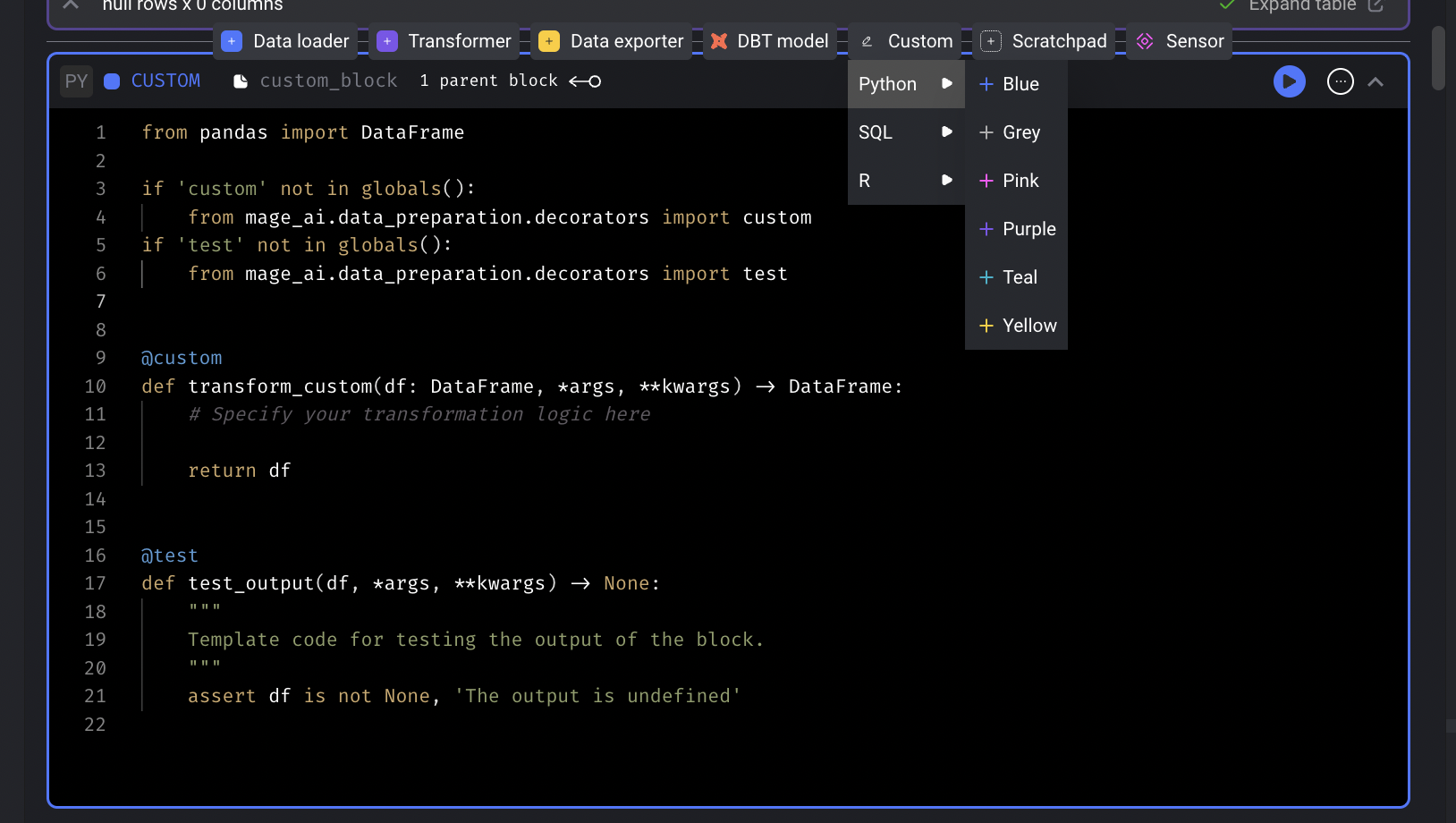

Generic block

Add a generic block that can run in a pipeline, optionally accept inputs, and optionally return outputs but not a data loader, data exporter, or transformer block.

Other bug fixes & polish

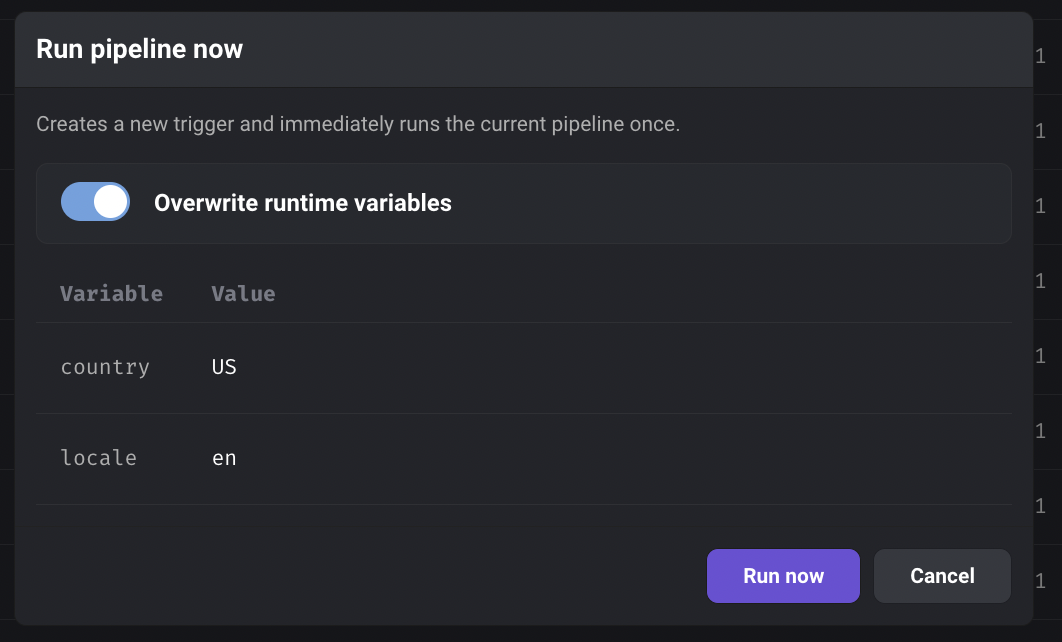

- Support overriding runtime variables when clicking the Run now button on the triggers list page.

- Support MySQL SQL block

- Fix the serialization for the column that is a dictionary or list of dictionaries when saving the output dataframe of a block.

- Allow selecting multiple partition keys for Delta Lake destination.

- Support copy and paste into/from Mage terminal.

View full Changelog

Published by thomaschung408 over 1 year ago

Data integration

New sources

New destinations

Full lists of available sources and destinations can be found here:

- Sources: https://docs.mage.ai/data-integrations/overview#available-sources

- Destinations: https://docs.mage.ai/data-integrations/overview#available-destinations

Improvements on existing sources and destinations

- Trino destination

- Support

MERGEcommand in Trino connector to handle conflict. - Allow customizing

query_max_lengthto adjust batch size.

- Support

- MSSQL source

- Fix datetime column conversion and comparison for MSSQL source.

- BigQuery destination

- Fix BigQuery error “Deadline of 600.0s exceeded while calling target function”.

- Deltalake destination

- Upgrade delta library from version from 0.6.4 to 0.7.0 to fix some errors.

- Allow datetime columns to be used as bookmark properties.

- When clicking apply button in the data integration schema table, if a bookmark column is not a valid replication key for a table or a unique column is not a valid key property for a table, don’t apply that change to that stream.

New command line tool

Mage has a newly revamped command line tool, with better formatting, clearer help commands, and more informative error messages. Kudos to community member @jlondonobo, for your awesome contribution!

DBT block improvements

- Support running Redshift DBT models in Mage.

- Raise an error if there is a DBT compilation error when running DBT blocks in a pipeline.

- Fix duplicate DBT source names error with same source name across multiple

mage_sources.ymlfiles in different model subfolders: use only 1 sources file for. all models instead of nesting them in subfolders.

Notebook improvements

- Support editing global variables in UI: https://docs.mage.ai/production/configuring-production-settings/runtime-variable#in-mage-editor

- Support creating or edit global variables in code by editing the pipeline

metadata.yamlfile. https://docs.mage.ai/production/configuring-production-settings/runtime-variable#in-code - Add a save file button when editing a file not in the pipeline notebook.

- Support Windows keyboard shortcuts: CTRL+S to save the files.

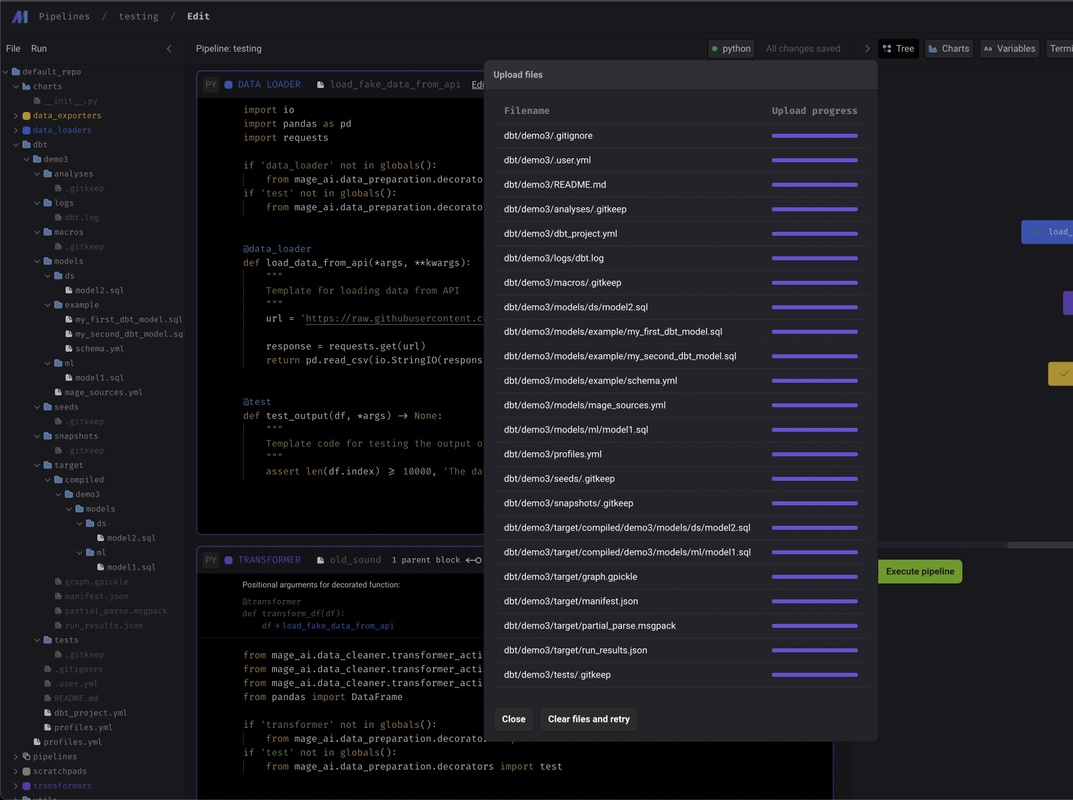

- Support uploading files through UI.

Store logs in GCP Cloud Storage bucket

Besides storing logs on the local disk or AWS S3, we now add the option to store the logs in GCP Cloud Storage by adding logging config in project’s metadata.yaml like below:

logging_config:

type: gcs

level: INFO

destination_config:

path_to_credentials: <path to gcp credentials json file>

bucket: <bucket name>

prefix: <prefix path>

Check out the doc for details: https://docs.mage.ai/production/observability/logging#google-cloud-storage

Other bug fixes & improvements

- SQL block improvements

- Support writing raw SQL to customize the create table and insert commands.

- Allow editing SQL block output table names.

- Support loading files from a directory when using

mage_ai.io.file.FileIO. Example:

from mage_ai.io.file import FileIO

file_directories = ['default_repo/csvs']

FileIO().load(file_directories=file_directories)

View full Changelog

Published by thomaschung408 over 1 year ago

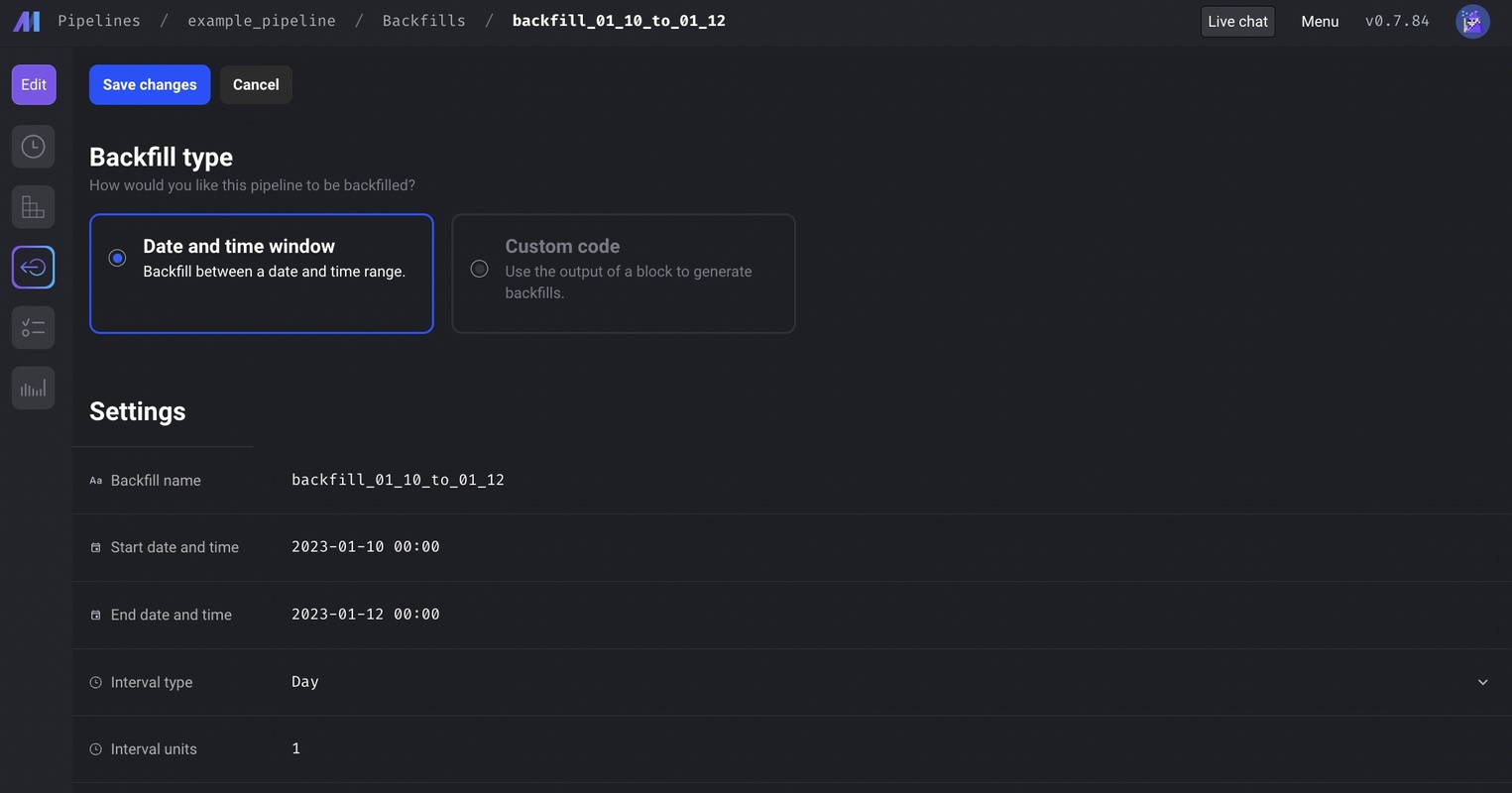

Backfill framework 2.0

Mage launched a new backfill framework to make backfills a lot easier. User can select a date range and date interval for backfill. Mage will automatically create the pipeline runs within the date range, and run them concurrently to backfill the data.

Docs

- Backfill framework overview: https://docs.mage.ai/orchestration/backfills/overview

- Backfill guide: https://docs.mage.ai/orchestration/backfills/guides

Data integration

New sources

New destinations

- Trino (all connectors)

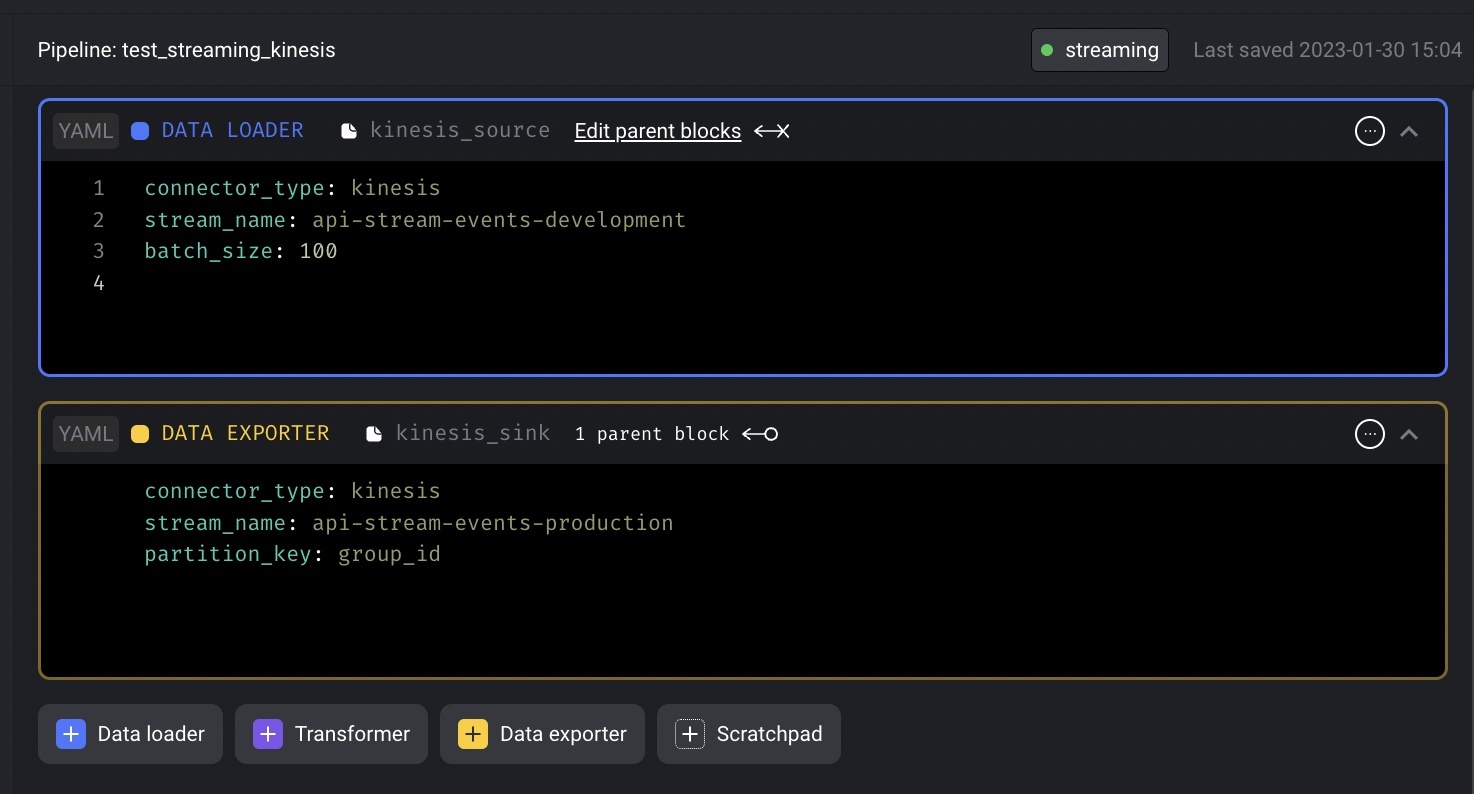

Streaming pipeline

Add Kinesis as streaming source and destination(sink) to streaming pipeline.

- For Kinesis streaming source, configure the source stream name and batch size.

- For Kinesis streaming destination(sink), configure the destination stream name and partition key.

- To use Kinesis streaming source and destination, make sure the following environment variables exist:

AWS_ACCESS_KEY_IDAWS_SECRET_ACCESS_KEYAWS_REGION

Kubernetes support

- Support running Mage in Kubernetes locally: https://docs.mage.ai/getting-started/setup#using-kubernetes

- Support executing blocks in separate Kubernetes jobs: https://docs.mage.ai/production/configuring-production-settings/compute-resource#kubernetes-executor

blocks:

- uuid: example_data_loader

type: data_loader

upstream_blocks: []

downstream_blocks: []

executor_type: k8s

...

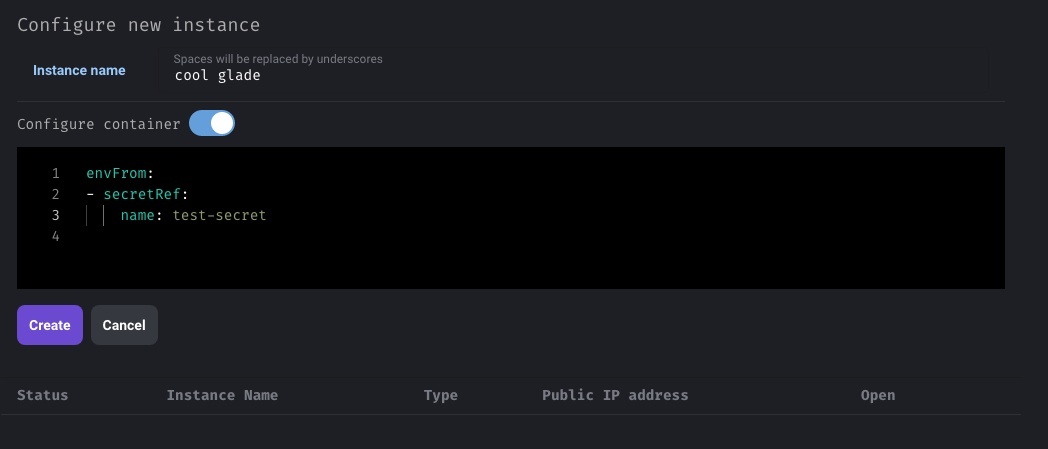

- When managing dev environment in Kubernetes cluster, allow adding custom config for the mage container in Kubernetes.

DBT improvements

- Support running MySQL DBT models in Mage.

- When adding a DBT block to run all/multiple models, allow manual naming of the block.

Metaplane integration

Mage can run monitors in Metaplane via API integration. Check out the guide to learn about how to run monitors in Metaplane and poll statuses of the monitors.

Other bug fixes & polish

- SQL block: support SSH tunnel connection in Postgres SQL block

- Follow this guide to configure Postgres SQL block to use SSH tunnel

- R block: support accessing runtime variables in R block

- Follow this guide to use runtime variables in R blocks

- Added setting to skip current pipeline run if previous pipeline run hasn’t finished.

- Pass runtime variables to test functions. You can access runtime variables via

kwargs['key']in test functions.

View full Changelog

Published by thomaschung408 over 1 year ago

Data integration

New sources

Improvements on existing sources and destinations

- S3 source

- Automatically add

_s3_last_modifiedcolumn from LastModified key, and enable_s3_last_modifiedcolumn as a bookmark property. - Allow filtering objects using regex syntax by configuring

search_patternkey. - Support multiple streams by configuring a list of table configs in

table_configskey. https://github.com/mage-ai/mage-ai/blob/master/mage_integrations/mage_integrations/sources/amazon_s3/README.md

- Automatically add

- Postgres source log based replication

- Automatically add a

_mage_deleted_atcolumn to record the source row deletion time. - When operation is update and unique conflict method is ignore, create a new record in destination.

- Automatically add a

- In source or destination yaml config, interpolate secret values from AWS Secrets Manager using syntax

{{ aws_secret_var('some_name_for_secret') }}. Here is the full guide: https://docs.mage.ai/production/configuring-production-settings/secrets#yaml

Full lists of available sources and destinations can be found here:

- Sources: https://docs.mage.ai/data-integrations/overview#available-sources

- Destinations: https://docs.mage.ai/data-integrations/overview#available-destinations

Customize pipeline alerts

Customize alerts to only send when pipeline fails or succeeds (or both) via alert_on config

notification_config:

alert_on:

- trigger_failure

- trigger_passed_sla

- trigger_success

Here are the guides for configuring the alerts

- Email alerts: https://docs.mage.ai/production/observability/alerting-email#create-notification-config

- Slack alerts: https://docs.mage.ai/production/observability/alerting-slack#update-mage-project-settings

- Teams alerts: https://docs.mage.ai/production/observability/alerting-teams#update-mage-project-settings

Deploy Mage on AWS using AWS Cloud Development Kit (CDK)

Besides using Terraform scripts to deploy Mage to cloud, Mage now also supports managing AWS cloud resources using AWS Cloud Development Kit in Typescript.

Follow this guide to deploy Mage app to AWS using AWS CDK scripts.

Stitch integration

Mage can orchestrate the sync jobs in Stitch via API integration. Check out the guide to learn about how to trigger the jobs in Stitch and poll statuses of the jobs.

Bug fixes & polish

-

Allow pressing

escapekey to close error message popup instead of having to click on thexbutton in the top right corner. -

If a data integration source has multiple streams, select all streams with one click instead of individually selecting every single stream.

-

Make pipeline runs pages (both the overall

/pipeline-runsand the trigger/pipelines/[trigger]/runspages) more efficient by avoiding individual requests for pipeline schedules (i.e. triggers). -

In order to avoid confusion when using the drag and drop feature to add dependencies between blocks in the dependency tree, the ports (white circles on blocks) on other blocks disappear when the drag feature is active. The dependency lines must be dragged from one block’s port onto another block itself, not another block’s port, which is what some users were doing previously.

-

Fix positioning of newly added blocks. Previously when adding a new block with a custom block name, the blocks were being added to the bottom of the pipeline, so these new blocks should appear immediately after the block where it was added now.

-

Popup error messages include both the stack trace and traceback to help with debugging (previously did not include the traceback).

-

Update links to docs in code block comments (links were broken due to recent docs migration to a different platform).

View full Changelog