MLJLinearModels.jl

Generalized Linear Regression Models (penalized regressions, robust regressions, ...)

MIT License


This package gathers functionality for solving a number of generalised linear regression and classification problems, each of which amounts to minimising, over $\theta$, an objective of the form

$$ L(y, X\theta) + P(\theta) $$

where:

- $L$ is a loss function

- $X$ is the $n \times p$ matrix of training observations, where $n$ is the number of observations (sample size) and $p$ is the number of features (dimension)

- $\theta$ is the length-$p$ vector of weights to be optimized

- $P$ is a penalty function
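To make the formulation concrete, here is a minimal sketch in plain Python (used only for illustration; it is not this package's API) that evaluates $L(y, X\theta) + P(\theta)$ for a squared-error loss combined with an L2 or an L1 penalty. The function names and the 1/2 scaling factors are assumptions made for the example.

```python
# Illustrative only: evaluates L(y, Xθ) + P(θ) with plain Python lists.
# Names and the 1/2 scaling are assumptions, not the package's API.

def matvec(X, theta):
    """Return the vector Xθ for an n x p matrix X stored as a list of rows."""
    return [sum(x_ij * t_j for x_ij, t_j in zip(row, theta)) for row in X]

def l2_loss(y, y_hat):
    """Squared-error loss: half the sum of squared residuals."""
    return 0.5 * sum((a - b) ** 2 for a, b in zip(y, y_hat))

def l2_penalty(theta, lam):
    """Ridge penalty: (λ/2) * ||θ||₂²."""
    return 0.5 * lam * sum(t * t for t in theta)

def l1_penalty(theta, lam):
    """Lasso penalty: λ * ||θ||₁."""
    return lam * sum(abs(t) for t in theta)

def objective(X, y, theta, loss, penalty):
    """Loss evaluated at the linear predictor Xθ, plus the penalty on θ."""
    return loss(y, matvec(X, theta)) + penalty(theta)

X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # n = 3 observations, p = 2 features
y = [1.0, 2.0, 3.0]
theta = [1.0, 1.0]

ridge_value = objective(X, y, theta, l2_loss, lambda t: l2_penalty(t, 2.0))
lasso_value = objective(X, y, theta, l2_loss, lambda t: l1_penalty(t, 2.0))
```

Swapping the `loss` or `penalty` argument is all it takes to move between models, which is the sense in which the regressions listed later share one formulation.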

Additional regression/classification methods which do not directly correspond to this formulation may be added in the future.

The core aims of this package are:

- make these regressions models "easy to call" and callable in a unified way,

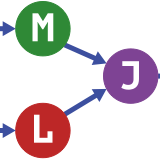

- interface with

MLJ.jl, - focus on performance including in "big data" settings exploiting packages such as

Optim.jl,IterativeSolvers.jl, - use a "machine learning" perspective, i.e.: focus essentially on prediction, hyper-parameters should be obtained via a data-driven procedure such as cross-validation.

Head to the quickstart section of the docs to see how to use this package.

NOTES

This section is only useful if you're interested in implementation details or would like to help extend the library. For usage instructions please head to the docs.

Implemented

| Regressors | Formulation¹ | Available solvers | Comments |
|---|---|---|---|
| OLS & Ridge | L2Loss + 0/L2 | Analytical² or CG³ | |
| Lasso & Elastic-Net | L2Loss + 0/L2 + L1 | (F)ISTA⁴ | |
| Robust 0/L2 | RobustLoss⁵ + 0/L2 | Newton, NewtonCG, LBFGS, IWLS-CG⁶ | no scale⁷ |
| Robust L1/EN | RobustLoss + 0/L2 + L1 | (F)ISTA | |
| Quantile⁸ + 0/L2 | RobustLoss + 0/L2 | LBFGS, IWLS-CG | |
| Quantile L1/EN | RobustLoss + 0/L2 + L1 | (F)ISTA | |

1. "0" stands for no penalty.

2. Analytical means the solution is computed in "one shot" using the `\` solver.

3. CG = conjugate gradient.

4. (Accelerated) Proximal Gradient Descent.

5. Huber, Andrews, Bisquare, Logistic, Fair and Talwar weighting functions are available.

6. Iteratively re-Weighted Least Squares, where each system is solved iteratively via CG.

7. In other packages such as Scikit-Learn, a scale factor is estimated along with the parameters. This is somewhat ad hoc and corresponds more to a statistical perspective; further, it does not work well with penalties. We recommend using cross-validation to set the parameter of the Huber Loss.

8. Includes as a special case the least absolute deviation (LAD) regression when `δ=0.5`.
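The link between quantile regression and LAD can be checked numerically. The sketch below assumes the standard "pinball" parameterisation of the quantile loss, which may differ from the package's internal definition by a scaling convention; the function name `pinball` is hypothetical. At `δ=0.5` the loss is half the absolute residual, so both objectives have the same minimiser.

```python
# Illustrative only: standard pinball (quantile) loss, assumed parameterisation.

def pinball(r, delta):
    """Quantile loss of a residual r at level delta in (0, 1)."""
    indicator = 1.0 if r < 0 else 0.0
    return r * (delta - indicator)

residuals = [-2.0, -0.5, 0.0, 1.5]

# Sum of pinball losses at the median level δ = 0.5
quantile_half = sum(pinball(r, 0.5) for r in residuals)

# Sum of absolute deviations (the LAD objective)
lad = sum(abs(r) for r in residuals)
```

Here `quantile_half` equals `0.5 * lad`; a constant factor does not change which $\theta$ minimises the objective.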

| Classifiers | Formulation | Available solvers | Comments |
|---|---|---|---|
| Logistic 0/L2 | LogisticLoss + 0/L2 | Newton, Newton-CG, LBFGS | yᵢ∈{±1} |
| Logistic L1/EN | LogisticLoss + 0/L2 + L1 | (F)ISTA | yᵢ∈{±1} |
| Multinomial 0/L2 | MultinomialLoss + 0/L2 | Newton-CG, LBFGS | yᵢ∈{1,...,c} |
| Multinomial L1/EN | MultinomialLoss + 0/L2 + L1 | ISTA, FISTA | yᵢ∈{1,...,c} |
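For the binary case with labels yᵢ∈{±1}, the usual logistic loss is the sum of log(1 + exp(-yᵢ sᵢ)), where sᵢ is the i-th entry of $X\theta$. The following is a minimal sketch of a numerically stable evaluation in plain Python; it assumes this standard form, and the helper names are hypothetical rather than taken from the package.

```python
import math

# Illustrative only: logistic loss for labels in {-1, +1}, assumed standard form.

def log1pexp(z):
    """Numerically stable log(1 + exp(z)), avoiding overflow for large z."""
    if z > 0:
        return z + math.log1p(math.exp(-z))
    return math.log1p(math.exp(z))

def logistic_loss(y, scores):
    """Sum over observations of log(1 + exp(-y_i * s_i))."""
    return sum(log1pexp(-yi * si) for yi, si in zip(y, scores))

# Zero scores carry no information: each observation costs log(2).
uninformative = logistic_loss([1, -1], [0.0, 0.0])

# A confident, correct prediction costs essentially nothing;
# a confident, wrong one costs roughly the size of the margin.
confident_right = logistic_loss([1], [1000.0])
confident_wrong = logistic_loss([-1], [1000.0])
```

The branch on the sign of `z` is what keeps `math.exp` from overflowing when a margin is large.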

Unless otherwise specified:

- Newton-like solvers use Hager-Zhang line search (default in Optim.jl)

- ISTA, FISTA solvers use backtracking line search and a shrinkage factor of `β=0.8`
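As a rough illustration of how backtracking with a shrinkage factor works, here is a minimal ISTA sketch for the Lasso in plain Python. It is not the package's implementation: the sufficient-decrease test (a quadratic upper bound on the smooth part), the initial step of 1, the fixed iteration count and all names are assumptions made for the example. Only the idea of multiplying the step by `β=0.8` until the test passes comes from the text above.

```python
# Illustrative only: ISTA with backtracking for
#   0.5 * ||Xθ - y||² + λ * ||θ||₁
# All names, the acceptance test and the defaults are assumptions.

def soft_threshold(v, t):
    """Proximal operator of t * |.|: shrink v towards zero by t."""
    if v > t:
        return v - t
    if v < -t:
        return v + t
    return 0.0

def smooth_part(X, y, theta):
    """Return f(θ) = 0.5 * ||Xθ - y||² and its gradient Xᵀ(Xθ - y)."""
    r = [sum(a * t for a, t in zip(row, theta)) - yi for row, yi in zip(X, y)]
    f = 0.5 * sum(v * v for v in r)
    grad = [sum(X[i][j] * r[i] for i in range(len(X))) for j in range(len(theta))]
    return f, grad

def ista_lasso(X, y, lam, beta=0.8, iters=200):
    theta = [0.0] * len(X[0])
    step = 1.0
    for _ in range(iters):
        f, grad = smooth_part(X, y, theta)
        while True:
            # Gradient step on the smooth part, then prox of the L1 penalty.
            cand = [soft_threshold(t - step * g, step * lam)
                    for t, g in zip(theta, grad)]
            diff = [c - t for c, t in zip(cand, theta)]
            f_cand, _ = smooth_part(X, y, cand)
            bound = (f + sum(g * d for g, d in zip(grad, diff))
                     + sum(d * d for d in diff) / (2.0 * step))
            if f_cand <= bound + 1e-12:
                break          # step is small enough, accept the candidate
            step *= beta       # otherwise shrink the step and retry
        theta = cand
    return theta

X = [[2.0, 0.0], [0.0, 2.0]]
y = [4.0, 1.0]
theta_hat = ista_lasso(X, y, lam=1.0)
```

On this toy problem the columns of `X` are orthogonal, so the Lasso solution is available in closed form (each coordinate is `y/2` soft-thresholded by `λ/4`) and can be used to check the result. FISTA adds a momentum term on top of the same step.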

Note: these models were all tested for correctness whenever a direct comparison with another package was possible, usually by comparing the objective function at the coefficients returned (cf. the tests):

- (against scikit-learn): Lasso, Elastic-Net, Logistic (L1/L2/EN), Multinomial (L1/L2/EN)

- (against quantreg): Quantile (0/L1)

Systematic timing benchmarks have not been run yet but are planned (see this issue).

Current limitations

- The models are built and tested assuming `n > p`; if this doesn't hold, tricks should be employed to speed up computations; these have not been implemented yet.

- CV-aware code not implemented yet (code that re-uses computations when fitting over a number of hyper-parameters); "Meta" functionalities such as One-vs-All or Cross-Validation are left to other packages such as MLJ.

- No support yet for sparse matrices.

- Stochastic solvers have not yet been implemented.

- All computations are assumed to be done in Float64.

Possible future models

Future

| Model | Formulation | Comments |
|---|---|---|
| Group Lasso | L2Loss + ∑L1 over groups | ⭒ |
| Adaptive Lasso | L2Loss + weighted L1 | ⭒ A |
| SCAD | L2Loss + SCAD | A, B, C |
| MCP | L2Loss + MCP | A |
| OMP | L2Loss + L0Loss | D |
| SGD Classifiers | *Loss + No/L2/L1 and OVA | SkL |

- (⭒) should be added soon

Other regression models

There are a number of other regression models that may be included in this package in the longer term but may not directly correspond to the paradigm Loss+Penalty introduced earlier.

In some cases it will make more sense to just use GLM.jl.

Sklearn's list: https://scikit-learn.org/stable/supervised_learning.html#supervised-learning

| Model | Note | Link(s) |
|---|---|---|
| LARS | -- | |
| Quantile Regression | -- | Yang et al, 2013, QuantileRegression.jl |
| L∞ approx (Logsumexp) | -- | slides |
| Passive Aggressive | -- | Crammer et al, 2006, SkL |
| Orthogonal Matching Pursuit | -- | SkL |
| Least Median of Squares | -- | Rousseeuw, 1984 |
| RANSAC, Theil-Sen | Robust reg | Overview RANSAC, SkL, SkL, More Ransac |
| Ordinal regression | need to figure out how they work | E |
| Count regression | need to figure out how they work | R |
| Robust M estimators | -- | F |
| Perceptron, MIRA classifier | Sklearn just does OVA with binary in SGDClassif | H |
| Robust PTS and LTS | -- | PTS, LTS |

What about other packages

While the functionalities in this package overlap with a number of existing packages, the hope is that this package will offer a general entry point for all of them in a way that won't require too much thinking from an end user (similar to how someone would use the tools from sklearn.linear_model).

If you're looking for specific functionalities/algorithms, it's probably a good idea to look at one of the packages below:

- SparseRegression.jl

- Lasso.jl

- QuantileRegression.jl

- (unmaintained) Regression.jl

- (unmaintained) LARS.jl

- (unmaintained) FISTA.jl

- (unmaintained) RobustLeastSquares.jl

There's also GLM.jl which is more geared towards statistical analysis for reasonably-sized datasets and does (as far as I'm aware) lack a few key functionalities for ML such as penalised regressions or multinomial regression.

References

- Minka, Algorithms for Maximum Likelihood Regression, 2003. For a review of numerical methods for the binary Logistic Regression.

- Beck and Teboulle, A Fast Iterative Shrinkage-Thresholding Algorithm for Linear Inverse Problems, 2009. For the ISTA and FISTA algorithms.

- Raman et al, DS-MLR: Exploiting Double Separability for Scaling up Distributed Multinomial Logistic Regression, 2018. For a discussion of multinomial regression.

- Robust regression

  - Mastronardi, Fast Robust Regression Algorithms for Problems with Toeplitz Structure, 2007. For a discussion on algorithms for robust regression.

  - Fox and Weisberg, Robust Regression, 2013. For a discussion on robust regression and the IWLS algorithm.

  - Statsmodels, M Estimators for Robust Linear Modeling. For a list of weight functions beyond Huber's.

  - O'Leary, Robust Regression Computation using Iteratively Reweighted Least Squares, 1990. Discussion of a few common robust regressions and implementation with IWLS.

Dev notes

- Probit Loss --> via StatsFuns // Φ(x) (normcdf); ϕ(x) (normpdf); -xϕ(x)

- Newton, LBFGS take linesearches, seems NewtonCG doesn't

- several ways of doing backtracking (e.g. https://archive.siam.org/books/mo25/mo25_ch10.pdf); for FISTA many though see http://www.seas.ucla.edu/~vandenbe/236C/lectures/fista.pdf; probably best to have "decent safe defaults"; also this for FISTA http://150.162.46.34:8080/icassp2017/pdfs/0004521.pdf ; https://github.com/tiepvupsu/FISTA#in-case-lf-is-hard-to-find ; https://hal.archives-ouvertes.fr/hal-01596103/document; not so great https://github.com/klkeys/FISTA.jl/blob/master/src/lasso.jl ;

- https://www.ljll.math.upmc.fr/~plc/prox.pdf

- proximal QN http://www.stat.cmu.edu/~ryantibs/convexopt-S15/lectures/24-prox-newton.pdf; https://www.cs.utexas.edu/~inderjit/public_papers/Prox-QN_nips2014.pdf; https://github.com/yuekai/PNOPT; https://arxiv.org/pdf/1206.1623.pdf

- group lasso http://myweb.uiowa.edu/pbreheny/7600/s16/notes/4-27.pdf